Search the Community

Showing results for tags 'ssd'.

-

Hello everyone, I just optimized my DrivePool a bit and set a 128 GB SSD as write cache. I had another SSD with 1GB that I had left over, which I put into the pool, and I set DrivePool to place on it only the folders I use most and that should be quickly available. These folders are also duplicated (the other archive drives are HDDs). I'm not sure if this is working or if the SSD is useless for more speed. How does DrivePool work: - Does DrivePool read both the original and the duplicated folders if I use them from the client? - Will DrivePool recognize which drive is faster and read from it first? Best regards and thanks for your answers

- 23 replies

Tagged with: duplication, ssd (and 2 more)

-

(I am sure I saw the answer to this but I can't find it, so apologies if this is answered already) Hi there, I have a pool with 4x2TB spinning disks and 2x1TB SSDs, with about 3TB free. The pool is only for large (legal!) media files and is now pretty much read-only. Should there be any new writes I would like them to land on the SSDs first but I _don't_ want the SSDs to be completely evacuated. Instead, I want to keep a certain % of the SSDs free, but the rest of the space should be considered fine for long term file storage. Using the SSD plugin gives me the "write first to SSD" but it then tries to completely clear the SSDs. To put it another way, I want all disks used for file storage, but I want to keep X% of the SSDs free as a write cache. Is this possible ATM? Thanks!

-

Hi, I know it's been asked before but I'm still wondering if we can expect NVMe "smart" support in the future for these drives. In Direct I/O everything is a red cross except the "SSD" part with every option, combination of options and specific methods. The devices in question are listed as "Disk # - NVMe Samsung SSD 960 SCSI Disk Device" Using Scanner version 2.5.4.3216 and Samsung NVMe Controller 3.0.0.1802 driver. CrystalDiskInfo and some other software can get the data.

-

I run Windows Server 2016 with 10 HDDs and 4 small SSDs: a 15TB total usable DP pool with 2x 3TB HDDs as parity using SnapRAID. The really stupid thing is, I'm only sitting on 2TB of data. I plan on repurposing the drives into an old Synology NAS and keeping it as an off-site backup. So, I'm just wondering if anyone has done the following and can give me advice: create 2x RAID 0 arrays, each from 2x 1TB SSDs (4TB in total across 4 drives), using StableBit DrivePool (will only be mirroring a couple of directories), and then have 2x 3TB parity drives with SnapRAID. Goals: 1. Reduce idle watts from spinning HDDs. 2. Saturate a 10GbE link (hopefully RAID 0 SSDs can do this), with a goal of being able to edit 4K off it. 3. Protect against bitrot with daily SnapRAID scrubs. Data redundancy is not my main issue, as I have a number of backups + cloud + offsite. One advantage I can see is that I can grow this NAS by adding 2 SSDs in RAID 0 at a time.
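Goal 2 above comes down to simple arithmetic, sketched here in Python. The per-drive figure of 550 MB/s is an assumption (a typical sequential rating for a SATA SSD, not a number from the post), and the stripe estimate ignores all overhead, so treat the result as a best case rather than a prediction.

```python
# Rough best-case check: can a RAID 0 stripe of SSDs fill a network link?
# ASSUMED_SSD_MB_PER_S is an illustrative assumption, not a measured value.
ASSUMED_SSD_MB_PER_S = 550


def link_mb_per_s(gbit_per_s: float) -> float:
    """Convert a link speed in Gbit/s to MB/s (decimal units, no protocol overhead)."""
    return gbit_per_s * 1000 / 8


def stripe_mb_per_s(drives: int, per_drive_mb_per_s: float) -> float:
    """Ideal sequential throughput of a stripe: per-drive speed times drive count."""
    return drives * per_drive_mb_per_s


def can_saturate(drives: int, per_drive_mb_per_s: float, gbit_per_s: float) -> bool:
    """True if the ideal stripe throughput meets or exceeds the raw link rate."""
    return stripe_mb_per_s(drives, per_drive_mb_per_s) >= link_mb_per_s(gbit_per_s)


if __name__ == "__main__":
    print(link_mb_per_s(10))                          # raw 10GbE rate in MB/s
    print(stripe_mb_per_s(2, ASSUMED_SSD_MB_PER_S))   # ideal 2-drive stripe
    print(can_saturate(2, ASSUMED_SSD_MB_PER_S, 10))
```

Under that assumption, a two-drive stripe comes in a little under the raw 10GbE rate, so real-world results would depend on the actual drives, controller, and workload.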

-

OK, so I now have a 10Gb network and I can saturate the setup I have... I had a pool with 3x 2TB and 2x 4TB SATA drives, all WD Blacks. I added an SSD cache and installed the optimizer, and I guess it works for copying files TO the pool. However, I do a lot of retrievals and just ordered 2x 6x 2.5" hot-swap bays. I didn't like the first setup I bought and am returning it; the second one will be here in 4 days, so I have some time. The new layout will be 12x 500GB Crucial SSDs as pool 1 (6TB), and 2x 4TB and 2x 2TB (for now) WD Blacks plus a 500GB SSD for the optimizer as pool 2 (12TB). My thinking is... I don't retrieve much, but when I do, I want it fast... so keep software and ISOs here, with a robocopy job to copy them over to pool 2 so it can replicate. Does this sound overthought? I have a Server 2016 box with the Essentials role that I am using right now with the SSD optimizer and a mess of WD Black drives, and it has been great. I just set up a new box running Server 2019 (the Essentials role is gone and that sucks) / StableBit DrivePool / 2x HP H220 SAS controllers (to handle the 12x 2.5" drives) and room for growth. Thoughts?
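The robocopy job mentioned above could be driven from a small script along these lines. This is only a sketch: the drive letters and folder names are hypothetical placeholders, and the switches (`/E`, `/R:`, `/W:`, `/LOG:`) are standard robocopy options chosen for illustration, not the poster's actual job.

```python
import subprocess
from typing import List, Optional


def build_robocopy_cmd(source: str, dest: str, log_path: Optional[str] = None) -> List[str]:
    """Build a robocopy command that copies source into dest.

    /E   copies subdirectories, including empty ones
    /R:2 retries a failed copy twice
    /W:5 waits 5 seconds between retries
    """
    cmd = ["robocopy", source, dest, "/E", "/R:2", "/W:5"]
    if log_path is not None:
        cmd.append(f"/LOG:{log_path}")
    return cmd


def run_copy(source: str, dest: str, log_path: Optional[str] = None) -> bool:
    """Run the copy (Windows only). Robocopy exit codes below 8 mean no failures."""
    result = subprocess.run(build_robocopy_cmd(source, dest, log_path))
    return result.returncode < 8


# Hypothetical usage: P: is the SSD pool, Q: is the HDD pool.
# run_copy(r"P:\Software", r"Q:\Software", r"C:\Logs\pool-sync.log")
```

`/E` is used here rather than `/MIR` so that nothing is deleted on the destination; whether deletions should propagate is a choice for whoever sets up the job.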

-

How long are damage errors present in launchpads warning display?

HomeServerAdmin posted a question in General

Hi guys, this week I got my first damage error via Launchpad notification from my system SSD (Kingston 60GB). There had not been much free space on it for months, so I was planning to swap it for a bigger one (WD 240GB) in any case. After the file scan there was only one damaged file, which was not important, so I deleted it, ran chkdsk /f on the drive, and cloned all the partitions to the newer, bigger SSD. Everything is working fine now, but the damage message is still present in Launchpad's notification window, and the old Kingston drive is unplugged and lying in the cupboard. I know from other Launchpad messages that some error messages are not reset immediately and are shown for days after the cause is already solved/gone. How long are damage errors present in Launchpad's warning display/window? Version info: Scanner v2.5.3.3191

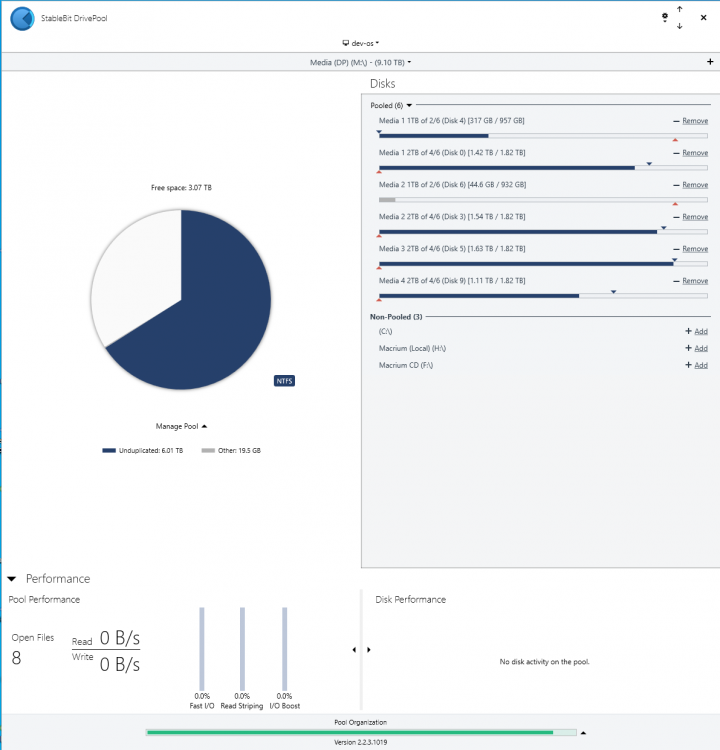

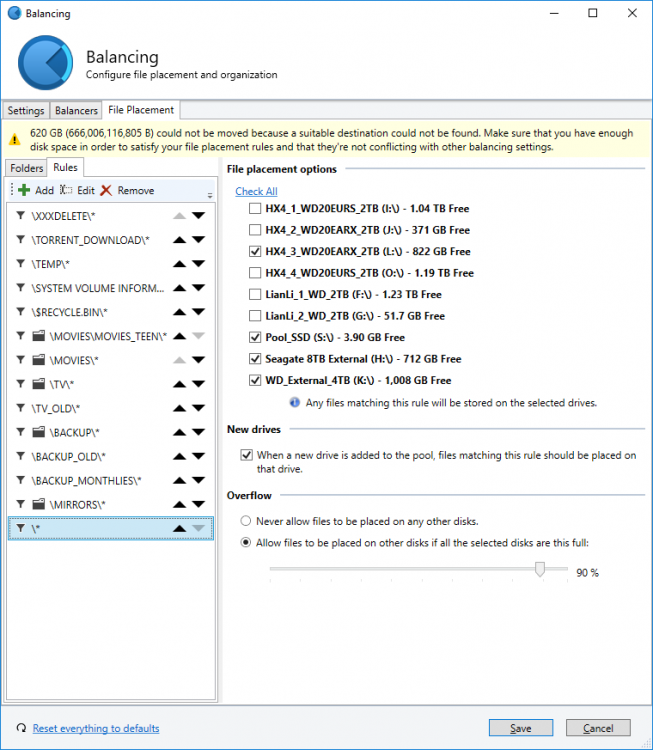

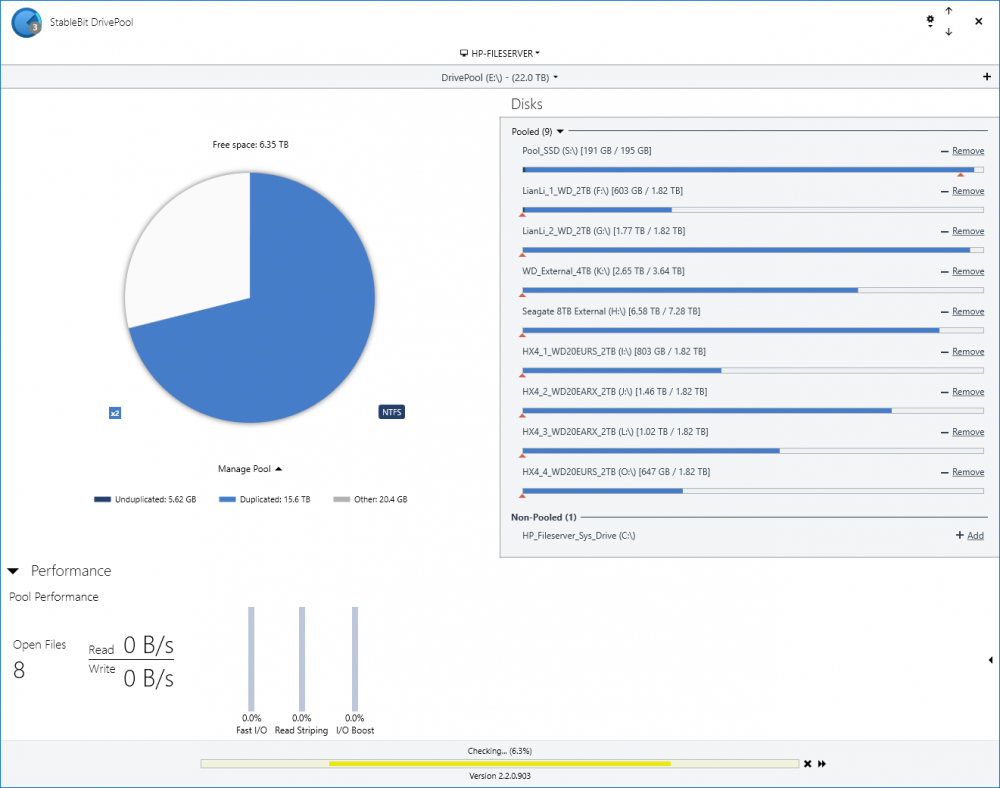

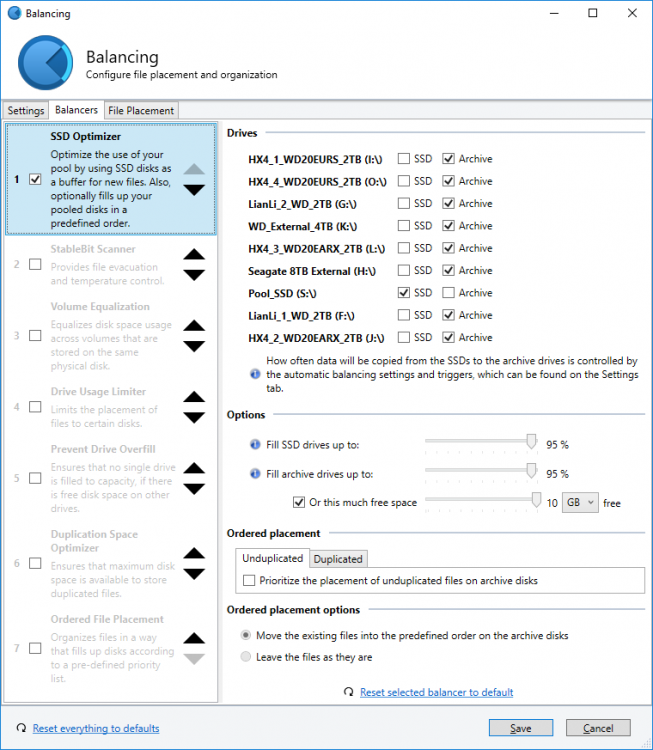

I have DP set up with 8 HDDs and one SSD. The SSD is configured to be the first location for all files and seems to work as such. The issue I am seeing is that as part of the balancing, it completely fills the SSD and then offloads the files onto one of the drives. One day I will check it and the SSD will be full, the next it will be empty. This has been going on for weeks (months?), and seems very inefficient (in terms of power and wear). Just to be sure, I looked at the content of the SSD (S:\) and the files are old ones, not any that would have been newly copied to the SSD (which should get less than 1GB of new files in a day). Possibly it is related to another error I often see: "x GB could not be moved because a suitable destination could not be found. Make sure you have enough disk space in order to satisfy your file placement rules and they're not conflicting with other balancing settings." I'm not sure why I am getting this, as: - I only have one balancing rule, "SSD Optimizer" - All file placement rules are set to "Allow files to be placed on other disks..." Thanks for any advice.

-

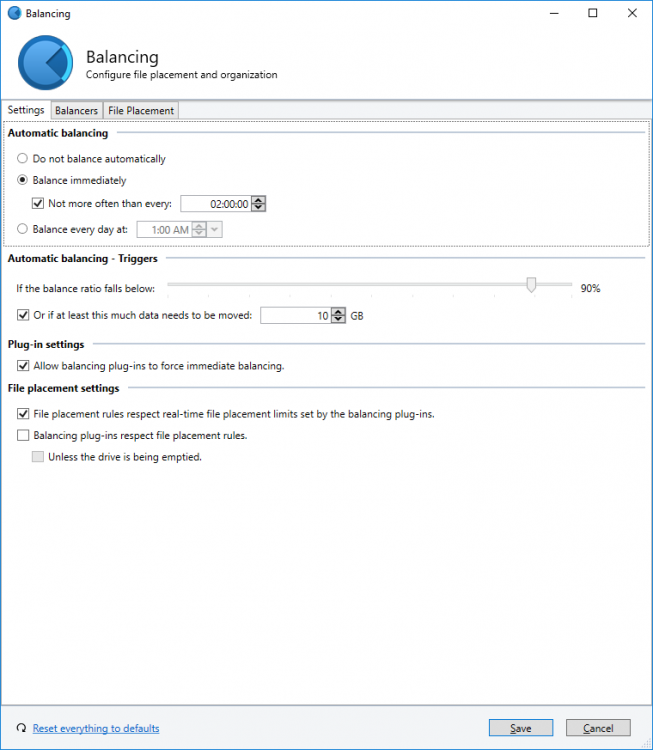

I'm not sure if this belongs in General or Nuts and Bolts but here goes. Basic scenario is I have 4 "drives." 1.) Operating system SSD (not part of the pool.) 2.) SSD landing zone (SSD-optimizer places all new files here for speed.) 3.) HDD 'scratch disk' (folder placement rules place my "temp folder here" when the SSD is emptied in order to hide it from SnapRAID) 4.) Archival drives (folder placement rules migrate my "archival" folders here from either the SSD or scratch disk. This is what SnapRAID sees.) The issue I'm having is that when balancing occurs, files / folders that are being moved from #3 to #4 are being copied BACK to the #2 first. This creates two issues. 1.) #3 and #4 are equally slow, so copying the files to #2 first means balancing takes twice as long as if they were copied from #3 to #4 directly. 2.) The volume of files being moved from #3 to #4 often exceeds the capacity of #2, so balancing has to be run multiple times for everything to migrate successfully. I'm not sure if there's something amiss with my placement / balancing rules, this is the intended behavior, or if this is just a scenario that's never come up. - I have 'file placement rules respect real-time file placement limits' unchecked. - I have 'balancing plug-ins respect file placement rules' checked. - I have 'unless drive being emptied' unchecked. Let me know if I need to clarify anything, I've been playing with it for so long I'm having trouble thinking straight. Thanks in advance.

-

I was wondering if this is a blip or not. The drives are installed in my Server 2012 R2 Essentials machine and have never given me any issues. I am not 100% sure, but I am guessing it has to do with the Samsung Magician software, as I previously had RAPID mode enabled; however, it appears to be glitchy with the new version on this system. I am guessing that this would explain the 26%. Or should I actually be worried at this point in time? The server motherboard is a Supermicro X10SAT. Chipset: Intel® C226 Express PCH. SATA: SATA3 via PCH w/ RAID 0, 1, 5, 10; SATA3 via ASM1061®. Network controllers: Intel® i217LM + Intel® i210AT, supporting 10BASE-T, 100BASE-TX, and 1000BASE-T, RJ45 output. Audio: Realtek ALC1150 High Definition Audio. Input/Output, Serial ATA: 6x SATA3 (6Gbps) ports via PCH and 2x SATA3 (6Gbps) ports via ASM1061®, for a total of 8x SATA3 (6Gbps) ports. The SSD is plugged into channel 0 of the PCH controller, and all chipset drivers are current. The CPU is the Core i7 4770S, with 16 GB of Corsair RAM (non-ECC). If you need any other info please let me know. Other tools: PerfectDisk Server's SMART reporting says all is fine, and Samsung Magician's tools report all is well too.

-

I'm using StableBit DrivePool 64 (non-beta) on Win 64 with the SSD Optimizer. (Great product by the way!) I've got a single SSD for the optimizer and 5 archive drives. I'm running into an issue where files copied into the pool are properly being written to the SSD but are marked as grey "Other" files. (Note: these files are copied into the pool, not directly onto the SSD.) Because these are "Other" files, the automatic balance doesn't trigger and they just sit on the SSD. A manual remeasure properly turns these grey "Other" files on the SSD into light blue unduplicated files and properly triggers the balancer. Any help on why this is occurring, and how I can have the files copied into the pool properly marked as light blue unduplicated files rather than "Other" files? Thanks in advance!

-

My original plans for a new disk layout involved using tiered storage spaces to get both read and write acceleration out of a pair of SSDs against a larger rust-based store (a la my ZFS fileserver). This plan was scuppered when I discovered that Win8 Does Not Do tiered storage and I'd need to run a pair of Server 2012R2 instances on my dual-boot development and gaming machine instead. I'm currently using DrivePool with the SSD Optimiser plugin to great effect and enjoying the 500MB/sec burst write speeds to a 3TB mirrored pool... but although read striping on rust is nice it doesn't yield the same benefits it would against silicon. Would it be possible to extend the SSD Optimiser plugin to move frequently- or recently-accessed files to the SSDs? It would in fact be sufficient to merely copy them, treating that copy as 'disposable' and not counting towards the duplication requirements. Possibly an easier approach might be to partition the SSDs as write cache and read cache, retaining mirroring for the write cache 'landing pad' and treating the read cache as a sort of RAID0? Based on my understanding of how DP works, this might mean that the read cache needs to be opaque to NTFS, but that's certainly a reasonable tradeoff in my use case (I was expecting to sacrifice the entire storage capacity of the SSDs). I know it's possible to select files to keep on certain disks, which is a very nice feature and one I wish eg. ZFS presented (in a 'yes, I really do know what I want to actually keep in the L2ARC, TYVM' sort of way) but an auto system for reads would be good too. Bonus points for allowing different systems on a multiboot machine to maintain independent persistent read caches, although I suspect checking and invalidating the cache at boot time might be a little expensive... Looking at the plugin API docs, implementing a non-file-duplicate-related read cache does not appear to be currently possible. 
It seems to be a task entirely outside the balancer's remit, in fact. Is this something considered for the future?

-

I'd like to pool 1 SSD and 1 HDD with pool duplication [a] and have the files written to & read from the SSD [b], and then balanced to the HDD with the files remaining on the SSD (for the purpose of [a] and [b]). The SSD Optimizer balancing plug-in seems to require that the files be removed from the SSD, which seems to prevent [a] and [b] (given only 1 HDD). Can the above be accomplished using DrivePool (without 3rd party tools)? Thanks, Tom

- 1 reply

Tagged with: SSD, Performance (and 4 more)

-

I set aside 2 SSDs in a RAID0 configuration for my 80TB pool. I moved the SSD Balancer to the top. My past experience and understanding is that it acts as the landing zone for new files, but once the activity stops (i.e. overnight), it should move those files to the archive drives. My concern is that the RAID0 is not safe, and I don't want to lose files located on it when it breaks, only to find out that they hadn't been moved to the archives. Any thoughts?

-

I have an 80GB SSD and 8 1.5TB drives in my pool. The SSD is also my OS drive so I want to just use it for initial writes, but I expect DrivePool to balance the pool out by eventually moving the files to two of the spinners. I only have about 20GB free on the SSD normally which puts it right at the 72% limit that I have on the drive for new file placement. The problem is that a few older files have one of their copies sitting on the SSD taking up space so DP thinks that there is no room to put new files on the SSD. The other drives are all set to 0% for new file placement, but when I move new files to the pool I can see that only the big drives are active. How do I flush all files off of the SSD to make room for new files without removing and re-adding?
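As a quick sanity check on the numbers in this post, the fill level can be worked out as below. This is a sketch using the round figures quoted (80GB capacity, about 20GB free, 72% limit); the real formatted capacity will differ, and the strict less-than comparison against the limit is an assumption for illustration, not a statement of how DrivePool evaluates it.

```python
def used_percent(capacity_gb: float, free_gb: float) -> float:
    """Percentage of the drive that is currently occupied."""
    return (capacity_gb - free_gb) / capacity_gb * 100


def under_limit(capacity_gb: float, free_gb: float, limit_pct: float) -> bool:
    """True if the drive is filled to less than limit_pct.

    Assumption: a drive at or above the limit is treated as full for new files.
    """
    return used_percent(capacity_gb, free_gb) < limit_pct


if __name__ == "__main__":
    # Round numbers taken from the post: 80GB SSD, ~20GB free, 72% limit.
    print(used_percent(80, 20))
    print(under_limit(80, 20, 72))
```

Taking those round numbers at face value, 20GB free on an 80GB drive means it is 75% used, which is already slightly above a 72% limit before any older pooled files are counted.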

-

As the boot drive of my WHS2011 server is slowly dying, I want to replace it with an SSD to gain some speed. Is it possible to use the remaining part of the SSD (all the space excluding C:) as a feeder disk? (e.g. just the D: partition)

-

Hi all, I've been trying out the Archive Optimizer on my Drive Pool installation. I find that after a couple of hours the Feeder disk (SSD) becomes full. The pool remains in a 100% healthy state even though the bar graph beside the Feeder disk indicates that it has exceeded the limit. I guess the first thing is understanding what I expected the Archive Optimizer to do and if this actually aligns with its implementation. I would have expected that with Balancing settings configured to allow for immediate balancing, any data on the SSD Feeder disk would be scheduled for archive to the other disks in the pool. Furthermore, whilst unduplicated or duplicated data remains on the Feeder, the pool's health would be less than 100%. My pool's health remains at 100%. I do have some extra data on the drive outside of the Pool - Windows indexing database etc. I've tried a remeasure and trace logging. The only log entry is: "[Rebalance] Cannot balance. Requested critical only." Am I expecting this to do something that's not actually intended? Any configuration settings changes anyone can recommend that they find works well? Cheers, Salty. Edit: Running version 2.0.0.345 Beta, Server 2012.