Philmatic

Everything posted by Philmatic

-

I am in the process of migrating some drives from one server with a DrivePool pool to another server with ZFS. The current drive layout is 4x 10TB, 4x 8TB and 24x 3TB. I needed the four 10TB drives pulled out so I can move them over to the other server. I didn't have enough free space to remove 40TB outright, so I had to disable duplication.

My first grumble: if I'm removing drives and I don't have enough space, I have to disable duplication first. That frees up the space, but it does so indiscriminately, removing some duplicated files from the drives I want to keep and keeping some on the drives I plan to remove. So when I remove the drives, DrivePool has to move those files from the drives I'm removing to the drives I'm keeping. I understand this is how it is supposed to work, but I am suggesting an enhancement: if these steps could be combined, DrivePool would be smart enough to disable duplication and remove the duplicates from the drives I'm removing. Then removing the drives should be instantaneous.

My second grumble, somewhat related to the first: removing drives in batches isn't smart enough to realize that there are other drives in the removal queue, so when I am removing drives, it moves data from the drive I am removing to the drive that's queued up to be removed next, doubling and tripling the movement of files. If the removal process were a tad bit more intelligent, it would know not to move files to drives that are already in the removal queue.

Other than that, I've been a loyal customer since the early days (1/2012). I love the product and its simplicity. I wish it did more, but I'm not complaining (except for my two complaints above, of course! :))
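The two suggestions above boil down to a smarter removal planner. Here is a minimal Python sketch of the idea under a simplified, assumed model: `placements` maps each file to the set of drives holding a copy, sizes and free space are in bytes, and duplication is being turned off so one copy per file is enough. `plan_removal` and its inputs are hypothetical and are not DrivePool's API or its actual algorithm.

```python
def plan_removal(placements, sizes, free_bytes, removing):
    """Plan deletions and moves for removing drives with duplication turned off.

    Returns (deletions, moves): deletions is a list of (file, drive) copies to
    drop; moves is a list of (file, source_drive, destination_drive).
    """
    # Only drives NOT queued for removal may ever receive data.
    free = {d: n for d, n in free_bytes.items() if d not in removing}
    deletions, moves, stranded = [], [], []

    # Pass 1: keep one copy per file, preferring a copy on a drive we keep.
    for name in sorted(placements):
        kept = sorted(d for d in placements[name] if d not in removing)
        doomed = sorted(d for d in placements[name] if d in removing)
        if kept:
            for d in kept[1:]:
                deletions.append((name, d))
                free[d] += sizes[name]  # surplus copy on a kept drive frees space there
            deletions.extend((name, d) for d in doomed)  # copies on removed drives just go
        else:
            stranded.append((name, doomed))  # every copy sits on a drive being removed

    # Pass 2: only stranded files actually have to be moved.
    for name, doomed in stranded:
        dst = max(free, key=free.get)  # kept drive with the most free space
        if free[dst] < sizes[name]:
            raise RuntimeError(f"not enough free space for {name}")
        free[dst] -= sizes[name]
        moves.append((name, doomed[0], dst))
        deletions.extend((name, d) for d in doomed[1:])
    return deletions, moves
```

For example, with two 10TB drives being removed and two 3TB drives kept, only a file whose copies all live on the 10TB drives gets moved; everything else is a deletion, which is what would make the removal close to instantaneous.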

-

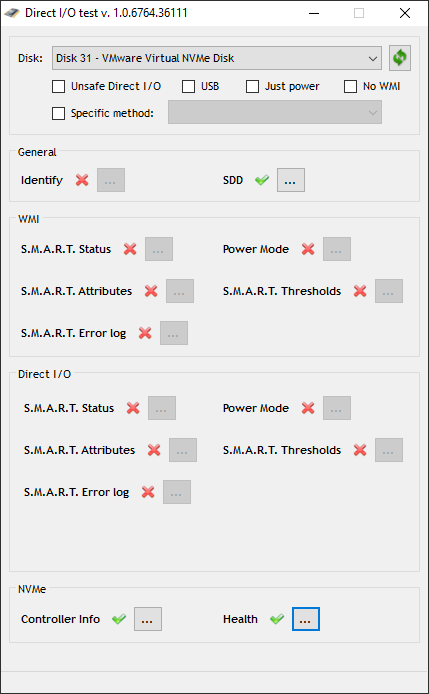

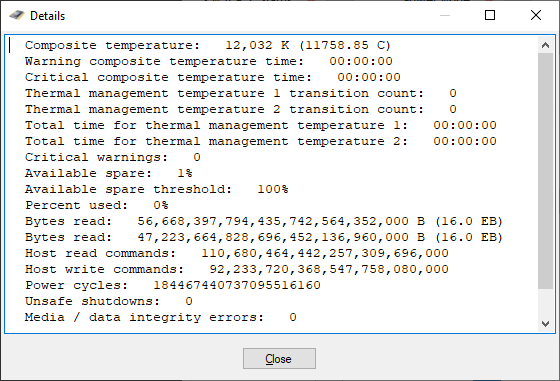

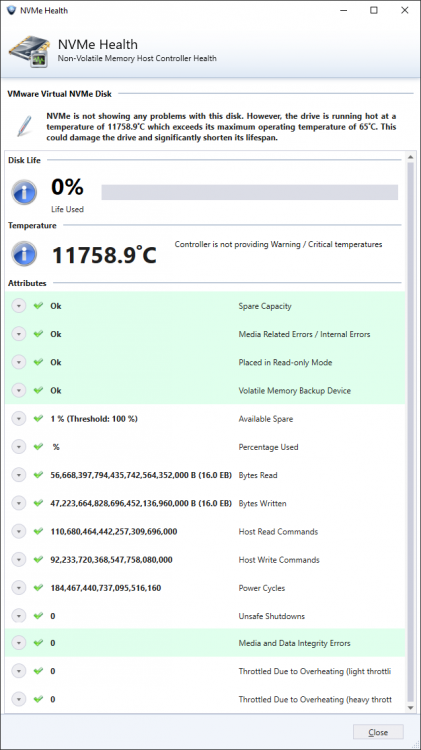

I'm running a Windows Server 2019 VM on VMware ESXi 6.7.0. The host is a dual-processor E5-2670 v1 whitebox. The VMware datastore is an NVMe disk (HP EX920 512GB), and the guest VM is using the VMware virtual NVMe controller driver. The SMART data coming from the virtual NVMe driver is... interesting. I'm not expecting it to work; I just figured Alex may want some debug data so it can be fixed. Attached are my test details.

-

Are you asking whether DrivePool is compatible with a Hyper-V guest running on a Hyper-V host? If so, yes. I run it this way and it works fine; you just have to pass each disk through individually, and the guest OS (with DrivePool) sees them just fine. In fact, I went from a fully physical setup on Server 2012 to a virtualized Server 2019 on Hyper-V and passed through all the disks (a huge pain; not being able to pass through controllers is a huge PITA, VMware FTW here). DrivePool picked up the entire pool (32 disks) within a minute or so, and that was it.

Or are you asking whether DrivePool is compatible with the Hyper-V hypervisor itself (either in Hyper-V Server or Server Core with the Hyper-V role), as in you want to pool drives inside the hypervisor and present them as large disks to guests? I've tried this, and it DOES work, but it's not a good idea. DrivePool is not a traditional RAID technology and isn't well suited to large random writes, so it's just not recommended. It's best to pass the disks through individually and let DrivePool on the guest pool everything together.
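Passing 32 disks through one at a time is tedious. One way to script it, sketched here as an assumption rather than the method used above, is to generate the PowerShell commands for each disk: `Set-Disk -IsOffline $true` takes the disk offline on the host (Hyper-V only passes through offline physical disks), and `Add-VMHardDiskDrive -DiskNumber` attaches it to the guest's SCSI controller. The VM name and disk numbers below are hypothetical; check the real numbers with `Get-Disk` on the host first.

```python
def passthrough_commands(vm_name, disk_numbers):
    """Build host-side PowerShell commands that pass physical disks through to a VM."""
    quoted = vm_name.replace("'", "''")  # escape for a PowerShell single-quoted string
    cmds = []
    for n in disk_numbers:
        # The host must release the disk before Hyper-V can hand it to a guest.
        cmds.append(f"Set-Disk -Number {n} -IsOffline $true")
        cmds.append(
            f"Add-VMHardDiskDrive -VMName '{quoted}' -ControllerType SCSI -DiskNumber {n}"
        )
    return cmds
```

Printing `passthrough_commands("Storage", range(1, 33))` gives a script that can be reviewed and then pasted into an elevated PowerShell session on the host.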

-

With Windows Server 2016 RTM right around the corner, I am re-evaluating my server software and looking to upgrade whenever possible. Do the improvements made to DrivePool to support Windows 10 extend automatically to Windows Server 2016? I would imagine they would, considering the shared kernel, but I just wanted to make sure. Has this been tested?

-

I'm over here sitting on 32.7TB like I'm king of the world, lol.

-

Yep, it really is magical.

-

I wouldn't recommend that Alex spend any time fixing this, as even Microsoft have given up on the Windows 8/8.1 way of syncing OneDrive. Windows 10 will use a separate executable that syncs directories, like the Windows 7 client did, instead of using the junction points and symbolic links that Windows 8/8.1 uses. As a user, I MUCH prefer the Windows 8/8.1 way of doing it, but I guess there are issues with it under the hood.

-

The defragmenter built into Windows Server 2012 can defrag multiple disks at the same time: just select them all as you would select multiple files and hit Optimize. It worked fine for my 24-drive server; it did all 24 at the same time.
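The same thing can be done from an elevated command prompt, since `defrag.exe` accepts several volumes in one call; on Windows 8 / Server 2012 and later, `/O` applies the optimization suited to each media type and `/M` runs the operation on each volume in parallel. A small Python helper that builds that command line, assuming the drive letters passed in are placeholders for your own:

```python
def defrag_command(drive_letters):
    """Build a defrag.exe argument list that optimizes several volumes in parallel."""
    volumes = [f"{letter.rstrip(':').upper()}:" for letter in drive_letters]
    # /O: optimization appropriate to each media type; /M: all volumes in parallel
    return ["defrag", *volumes, "/O", "/M"]
```

On the server itself, `subprocess.run(defrag_command(["D", "E", "F"]), check=True)` from an elevated session would start the run; building the list separately makes it easy to inspect before anything touches the disks.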

-

Yes, totally. I have a 150 Mbps downstream connection running 50 or so torrents at a time, and DP has absolutely no issues with it. I do two things, though, to be safe:

1. I disable duplication on the folder I download to; it's just not needed.
2. I increase the cache in uTorrent and tell it to use up to 1GB of my system RAM for write caching.

I have never seen "Disk Overloaded".
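To put the 1GB write cache in perspective, a quick back-of-the-envelope calculation (assuming 1GB means 1 GiB and the 150 Mbps link is fully saturated) shows how long the cache could absorb incoming data even if the pool stopped writing entirely:

```python
def cache_seconds(link_mbps: float, cache_bytes: int) -> float:
    """Seconds of full-speed download a write cache can absorb before it must flush."""
    bytes_per_second = link_mbps * 1_000_000 / 8  # megabits/s -> bytes/s
    return cache_bytes / bytes_per_second


print(f"{cache_seconds(150, 1024**3):.1f} s")  # prints "57.3 s"
```

Roughly a minute of buffering is far more than the short stalls a pool drive might have while it is busy elsewhere, which fits never seeing the warning.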

-

Yep, I did exactly this. You don't even need to pull the drives, but I do just to be safe. Make sure you don't forget to release the license on the old machine before formatting. Otherwise you will have to open a ticket with support so they can release it on their end.

-

Steps #1, #2, and #3 will work just fine. It's that simple. DrivePool will recognize that your folders are duplicated and will already know what to do.

-

Upgrading (?) Windows 7 > 8.1, how to handle existing pool?

Philmatic replied to montejw360's question in General

That's all you need to do; it'll work exactly like that.

-

I've always run a "Reinitialize disk surface" in Hard Disk Sentinel, and it has never failed me; it always forces the hard drive to reallocate the bad sectors. It's not free, but it's well worth the cost. I expect any software that can write zeros to the entire drive (a so-called low-level format) would work too. I have never been able to get chkdsk to fix the sectors.
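For the "write zeros to the entire drive" route, here is a generic zero-fill sketch in Python. It is not how Hard Disk Sentinel works internally, and it destroys everything on the target. It assumes the caller supplies the exact device size and, on Windows, a raw path such as `\\.\PhysicalDrive3` opened as administrator with the disk offline; whether a given write actually triggers a reallocation is up to the drive's firmware.

```python
import os

CHUNK = 1024 * 1024  # 1 MiB, a multiple of common 512- and 4096-byte sector sizes


def zero_fill(path, size_bytes):
    """Overwrite the first size_bytes of path with zeros. DESTROYS ALL DATA."""
    zeros = bytes(CHUNK)
    written = 0
    with open(path, "r+b", buffering=0) as dev:
        while written < size_bytes:
            # Raw writes may be short, so advance by what was actually written.
            written += dev.write(zeros[: min(CHUNK, size_bytes - written)])
        os.fsync(dev.fileno())  # make sure the zeros reach the device
    return written
```

Running a surface scan afterwards, and checking the drive's reallocated and pending sector counts, is still the way to confirm the bad sectors were actually dealt with.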

-

.copytemp files are the temporary files DrivePool creates while it's copying a file. They disappear once the file has finished duplicating.

-

Doing it in reverse order gets you what you want: mark the bad blocks as unchecked, then mark the unchecked blocks as good. The entire drive is then considered good. You should really rescan the drives, though; if your wipe did not reallocate the bad sectors, you are still in danger of losing data.

-

Well, if Task Manager isn't showing DrivePool taking up that amount of memory, then it's not. Using the latest beta, I'm seeing 33.9MB of memory usage for the UI application and 35.2MB for the service. That's it; it's a ridiculously small amount for an application to take in 2014, not to mention the UI is not running all the time. Overall my server is using 2.2GB out of 8GB, and this is Server 2012 R2 Essentials. I would look at Resource Monitor to get a better idea of what exactly is going on.

-

Yep. Although I currently have it set up as a non-transparent, non-caching proxy, it has full-blown Squid installed and supports SquidGuard rules. In configuration mode, it's a two-command process, followed by commit and save:

    set service webproxy listen-address 10.10.0.1 disable-transparent
    set service webproxy url-filtering squidguard block-category porn
    commit
    save

http://community.ubnt.com/t5/EdgeMAX-CLI-Basics-Knowledge/EdgeMAX-Web-proxy-service-for-filtering/ta-p/684781
http://ahmeddirie.com/technology/networking/url-filtering-and-blocking-crap-with-vyatta/

Basically the router runs Vyatta, which is built on top of Debian Squeeze; you have full control over it and can install whatever you like from any repository. I even installed MySQL on it to test it out, and it handled my small XBMC databases just fine. I ended up removing it because I didn't want my router to do anything but route.

-

I ended up getting a Ubiquiti EdgeRouter Lite: $100, and it runs a dual-core 500MHz MIPS processor. It processes one million packets per second and runs a custom OS named EdgeOS, which is based on the tried-and-true Vyatta. It has full CLI access over SSH and serial, and the web interface is gorgeous, with full AJAX support and CSS3 styling.

http://www.ubnt.com/edgemax#edge-router-lite

I freaking love this little thing.

-

This is one of those long-standing issues I have with Scanner/DP: sorting numbered names correctly without padding them with a preceding 0 should really be native. I shouldn't have to do it.
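What's being asked for is usually called natural sorting: plain string comparison puts "Disk 10" before "Disk 2", while a natural sort compares the embedded numbers as numbers. A minimal Python sketch of the technique (the disk names are made up, and this says nothing about how Scanner or DP sort internally):

```python
import re


def natural_key(name):
    """Sort key that compares runs of digits numerically, e.g. 'Disk 2' < 'Disk 10'."""
    # Splitting on a capturing group puts the digit runs at the odd indices.
    parts = re.split(r"(\d+)", name)
    return [int(tok) if i % 2 else tok.lower() for i, tok in enumerate(parts)]


disks = ["Disk 10", "Disk 2", "Disk 1"]
print(sorted(disks))                   # ['Disk 1', 'Disk 10', 'Disk 2']
print(sorted(disks, key=natural_key))  # ['Disk 1', 'Disk 2', 'Disk 10']
```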

-

Sorry, I should have been clearer: I don't care about the 32-bit/64-bit difference; that's hardly the issue. My issue is that with DrivePool, I install the standalone executable and get both a fully integrated Dashboard tab AND a standalone application. With Scanner I have to choose: do I want Dashboard integration, or do I want the standalone application?

-

I recently upgraded from Windows Home Server 2011 to Server 2012 R2 Essentials, and DrivePool provides a single executable that gives you a standalone application as well as Dashboard integration. Currently Scanner offers either/or: you can install the executable and get the standalone version, or install the .wssx file and get Dashboard integration. Additionally, DrivePool and Scanner have different presentation UIs; will these be synced? As a separate note, can you fix the title of the Scanner window to be just "SCANNER" or something? It looks weird to have a properly cased tab in between the rest of the all-caps tabs in 2012 R2 Essentials.

-

1. Yes, once DP notices that you only have one copy of something you have marked for duplication (2x), it will make a copy of it on the other drive.
2. I believe that DP uses the timestamp on the file to determine which one is the "master"; in this case, the newer file will become the master.
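If DP does resolve conflicts by timestamp as described in point 2 (the post only says "I believe"), the rule amounts to keeping the copy with the newest modification time. A minimal Python sketch of that rule, not DrivePool's actual logic; `pick_master` is a hypothetical name, and `paths` is assumed to hold the copies of one file on different drives:

```python
import os


def pick_master(paths):
    """Return the copy with the most recent modification time."""
    return max(paths, key=os.path.getmtime)
```

The other copies would then be overwritten from the master so that every duplicate matches it again.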

-

The answer to almost all of your questions is yes. Just install WS2012E, install DrivePool, connect your drives and voila! It just works. The only thing that may not work is the license: technically you are supposed to release the license on the old machine first, but since it just bombed out on you, send a note to Alex and he can release the old license for you. I've done this half a dozen times.

-

Yep, I do this periodically on my pool drive to remove the temp files after a hard reboot or a crash:

    del /s /f /q *.copytemp
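For anyone who prefers a script, here is a rough Python equivalent of that `del` command: it walks the tree recursively (`/s`), clears the read-only flag before deleting (`/f`), and doesn't prompt (`/q`). Because .copytemp files are in-flight copies, this assumes it is only run when DrivePool is not duplicating or balancing; `remove_copytemp` is a hypothetical helper and the root (for example the pool's drive letter) is up to you.

```python
from pathlib import Path


def remove_copytemp(root):
    """Recursively delete leftover *.copytemp files under root; return what was removed."""
    removed = []
    # Materialize the list first so we never delete while the directory walk is running.
    for path in list(Path(root).rglob("*.copytemp")):
        if path.is_file():
            path.chmod(0o666)  # like /f: clear read-only so the delete succeeds
            path.unlink()
            removed.append(path)
    return removed
```

Returning the removed paths makes it easy to log what was cleaned up after a crash.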