fluffybunnyuk

Member, 25 posts, 2 days won, reputation 3 (achievement level: Member 2/3)

fluffybunnyuk last won the day on May 3 2021 and had the most liked content.

igobythisname reacted to an answer to a question:

OK to do multiple drive add/removes at the same time?

-

Thanks, I'll open a support ticket. It adds data to new drives added to the pool correctly, but it doesn't remove data from overfilled drives.

-

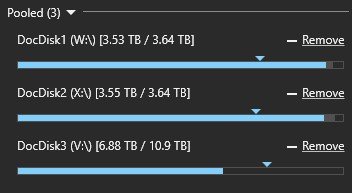

OK, so I disabled all plugins except the Disk Space Equalizer, set to 80%. The diamonds that mark the rebalance target move to the right location (i.e. 80%), and then nothing happens:

3:19:03.2: Information: 0 : [FileMover] Nothing to move from source=DocDisk2 (X:\), we're done.
3:21:05.0: Information: 0 : [Rebalance] (ToMoveRatio=0.9503)
3:21:05.0: Information: 0 : [CalculateBalanceRatio] Calculated ratio: 1.0000

I don't even think either of those reported ratios is correct. I've restarted, re-measured, re-balanced, run in debug mode, and gone through all the logs. For whatever reason it decides that, because there's a W/X mirror in place, it isn't going to bother moving data out to V. As far as it's concerned, the mirror is intact, so it has no interest in freeing up disk space.

-

I can't for the life of me get the smaller drives to offload data to the big drive. The data is mirrored across W/X, and it should be moving to a W/V or X/V mirror. I set the equalisation to 80% of drive capacity, and nothing moves; it just aggravates me by building a bucket list:

0:35:14.9: Information: 0 : [Rebalance] Unprotected: 0 B / 12,003,767,819,797 B (0.00%), Delta = 0 B, Limit = 1.0
0:35:14.9: Information: 0 : [Rebalance] Protected: 9,208,741,611,396 B / 12,003,767,819,797 B (76.72%), Delta = 1,645,611,121,007 B, Limit = 1.0
0:35:14.9: Information: 0 : [Rebalance] 3d54470a-024d-4d97-8944-860a4e80e6f3 (DocDisk2 (X:\)): Fill = 3,069,219,330,888 B / 4,000,785,100,800 B (76.72%)
0:35:14.9: Information: 0 : [Rebalance] Unprotected: 0 B / 4,000,785,100,800 B (0.00%), Delta = 0 B, Limit = 1.0
0:35:14.9: Information: 0 : [Rebalance] Protected: 2,935,598,234,456 B / 4,000,785,100,800 B (73.38%), Delta = -831,259,473,080 B, Limit = 1.0
0:35:14.9: Information: 0 : [Rebalance] 53caeb3e-e60e-42a3-86e1-7e87bcdc88d0 (DocDisk1 (W:\)): Fill = 3,069,219,330,886 B / 4,000,785,100,800 B (76.72%)
0:35:14.9: Information: 0 : [Rebalance] Unprotected: 0 B / 4,000,785,100,800 B (0.00%), Delta = 0 B, Limit = 1.0
0:35:14.9: Information: 0 : [Rebalance] Protected: 2,981,921,134,923 B / 4,000,785,100,800 B (74.53%), Delta = -814,351,647,930 B, Limit = 1.0
0:36:23.5: Information: 0 : [CalculateBalanceRatio] Calculated ratio: 0.8912

It's driving me crazy. The plugins (DUL, DSE, and All-in-One) should be moving 800 GB off W/X, but it just doesn't happen.
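The `[Rebalance]` lines above can be checked mechanically. This sketch parses only the `Protected:` lines; the regex is written against this excerpt, not against any documented log format. It shows that the positive Delta on the first disk (the 12 TB disk, whose own header line is not in the excerpt) matches the combined negative Deltas on X: and W: to within 3 bytes. Reading Delta as the balancer's intended per-disk change is an assumption, but if it holds, the balancer had worked out roughly 1.6 TB to move onto the big disk even though nothing moved.

```python
import re

# Pattern written against the log excerpt in this post (an assumption, not a
# documented format). "Unprotected:" lines do not match because the pattern
# requires "] Protected:" immediately after the tag.
PROTECTED = re.compile(
    r"\[Rebalance\] Protected: ([\d,]+) B / ([\d,]+) B \([\d.]+%\), "
    r"Delta = (-?[\d,]+) B"
)

LOG = """
0:35:14.9: Information: 0 : [Rebalance] Protected: 9,208,741,611,396 B / 12,003,767,819,797 B (76.72%), Delta = 1,645,611,121,007 B, Limit = 1.0
0:35:14.9: Information: 0 : [Rebalance] Protected: 2,935,598,234,456 B / 4,000,785,100,800 B (73.38%), Delta = -831,259,473,080 B, Limit = 1.0
0:35:14.9: Information: 0 : [Rebalance] Protected: 2,981,921,134,923 B / 4,000,785,100,800 B (74.53%), Delta = -814,351,647,930 B, Limit = 1.0
"""


def to_int(text: str) -> int:
    # Strip thousands separators, keeping any leading minus sign.
    return int(text.replace(",", ""))


def summarize(log: str) -> list[tuple[int, int, int]]:
    """Return (protected_bytes, capacity_bytes, delta_bytes) for each disk."""
    return [
        tuple(to_int(group) for group in match.groups())
        for match in PROTECTED.finditer(log)
    ]


if __name__ == "__main__":
    rows = summarize(LOG)
    for used, capacity, delta in rows:
        print(f"{used / capacity:7.2%} full, delta {delta / 1e9:+,.1f} GB")
    print("net delta (bytes):", sum(delta for _, _, delta in rows))
```

The net delta comes out at -3 bytes, i.e. the planned moves into one disk balance the moves out of the other two.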

-

Hi, I've had it running happily for over a year, but in the last week it has told me the license needs transferring. So I transfer the license, and it says OK... then 3 days later it tells me there's a license problem. Any ideas?

-

I use `dpcmd check-pool-fileparts F:\ 4 > check-pool-fileparts.log` to fix it. Then I read the log, and if there are no issues other than with DrivePool's own records, I re-check duplication, and it goes back to what I'd expect: 19.6 TB duplicated, 8.99 MB unusable for duplication for that pool. For me it's more a matter of DrivePool's index developing an issue than of there being an actual duplication problem, but that's why I check the dpcmd log to make sure. I open the log file in Notepad++ and use Count to search for "!", "*", and "**". Usually it reports no inconsistencies at the end; at least, I've never had one outside of testing, when I was deliberately trying to trash a couple of test drives.
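The Notepad++ check described above can also be scripted. This sketch just counts the same three markers in the redirected log; the default file name comes from the command in the post, the helper names are illustrative, and what each marker means in dpcmd's output is not stated in the post, so the script only tallies them.

```python
from pathlib import Path

# The markers the post searches for with Notepad++'s Count button.
MARKERS = ("!", "*", "**")


def count_markers(text: str) -> dict[str, int]:
    # str.count tallies non-overlapping matches, so the "*" total also
    # includes the asterisks that make up each "**".
    return {marker: text.count(marker) for marker in MARKERS}


def count_markers_in_log(path: str = "check-pool-fileparts.log") -> dict[str, int]:
    # errors="replace" keeps going if the redirected console output is not UTF-8.
    text = Path(path).read_text(encoding="utf-8", errors="replace")
    return count_markers(text)
```

After running the dpcmd command, `print(count_markers_in_log())` gives the three totals in one step.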

-

I had an inconsistency in my duplication and used dpcmd to fix it. This is why I bought the software: DrivePool works, and it is reliable. The cause: modifying the file tables of all the drives involved in duplication at the same time. A really dumb thing to do, I know, but I only have a short maintenance window and pushed things to fit the time. So I just dropped by to say thanks to the author for the work done to make it reliable.

-

You could set it to balance every, say, 168 hours. That would give you a week until the next balance runs.

-

File Recovery Tools compatible with DrivePool?

fluffybunnyuk replied to jhhoffma3's question in Nuts & Bolts

A good, slightly technical hack to avoid this is to create (or use) an account that has the delete permission and use it only for deleting, and to remove the delete permission from the limited account you use for general work or DrivePool access. All my files across the pool have "normal-user account" permissions, and I have a special account just for deletion, so when I want to delete I have to provide credentials to authorize it. It makes "whoops, where did my data go?" moments a little harder. Naturally it's a custom permission, and I hate custom permissions, as they sometimes go bad and mostly get annoying.

-

OK to do multiple drive add/removes at the same time?

fluffybunnyuk replied to bnhf's question in General

If you're removing a drive from pool A and a drive from pool B, then yes. From the same pool, then probably not: chances are that if you remove two drives from the same pool, a certain law dictates you'll pull two with the same data on them and lose that data. Slow is good if the data remains intact. Personally, I always pull one drive and see where I stand before pulling another.
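The "certain law" can be put in numbers. This sketch assumes 2x duplication with each file's two copies placed on a uniformly random pair of drives; that is a simplification for illustration, not DrivePool's actual placement logic, and `p_any_file_lost` is a hypothetical helper. With 8 drives there are 28 possible pairs, so even 10 files give roughly a 30% chance that at least one file had both copies on the two pulled drives, and with thousands of files a loss is practically certain.

```python
from math import comb


def p_any_file_lost(num_drives: int, num_files: int) -> float:
    """Chance that pulling two drives at once loses at least one file.

    Assumes 2x duplication with each file's copies on a uniformly random
    pair of drives (an illustrative model, not DrivePool's placement).
    """
    pairs = comb(num_drives, 2)
    # A file is lost only if both copies sit on exactly the pulled pair.
    p_file_safe = 1 - 1 / pairs
    return 1 - p_file_safe ** num_files


if __name__ == "__main__":
    for files in (1, 10, 100, 1000):
        print(f"8 drives, {files:>4} files: {p_any_file_lost(8, files):.3f}")
```

Pulling a single drive at a time never loses data in this model, because every file still has one surviving copy, which is the point of going slowly.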

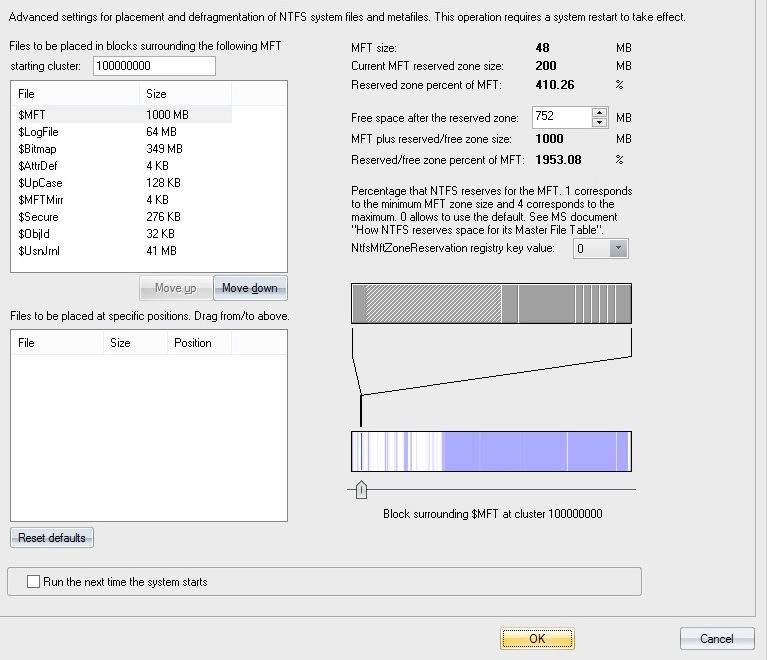

That's never going to happen with software like DrivePool. There's a write/read overhead (balancing) that multiplies with fragmentation. My best recommendation, assuming you're using high-core-count Xeons or Threadrippers, is to open settings.json and raise all the thread settings up to one per core, so that all cores can pull I/O from the SSD. Give that a whirl. If that doesn't shift it, try defragmenting the MFT and providing a good reserve, so balancing has a decent empty MFT gap to write into.

My system reports the SSD balancer is slow as a dog, which is why I uninstalled it. It's a cheap version of SAS NVMe support, except that instead of the controller handling the NVMe I/O spam, the CPU does (which isn't a good idea the larger the system gets).

Have you tried using symlinks, with a pair of SSDs per symlink, so your folder structure is drives inside drives, e.g. C:\mounts\mountdata(disk-ssd)\mountdocs(disk-ssd) and C:\mounts\mountdata(disk-ssd)\mountarchive(disk-ssd)\? That way your data sits transparently in one file structure, but the chunks are split over separate DrivePools, so the dumps from the SSDs run broadly in parallel.

I have to set aside 2 hours a day for housekeeping to keep DrivePool in tip-top condition. Large writes rapidly degrade the system for me from the optimal 200+ MB/s per drive; at the moment it's running at 80 MB/s per drive (which is the lowest I can afford to go), mostly because I've hammered the MFT with writes and there are a couple of TB I need to defragment. I wish you all the best trying to resolve it.
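The drop from about 200 MB/s to 80 MB/s per drive is easier to feel as time. A small sketch, assuming decimal units and a sustained sequential write to a single drive (`hours_to_write` is an illustrative helper):

```python
def hours_to_write(terabytes: float, mb_per_s: float) -> float:
    """Hours to write `terabytes` (decimal TB) at a sustained rate in MB/s."""
    bytes_total = terabytes * 1e12
    bytes_per_s = mb_per_s * 1e6
    return bytes_total / bytes_per_s / 3600


if __name__ == "__main__":
    for rate in (200, 80):
        print(f"1 TB at {rate} MB/s: {hours_to_write(1, rate):.2f} h")
```

At 80 MB/s the same terabyte takes 2.5 times as long: about 3.5 hours instead of about 1.4.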

-

8 drives in RAID-6 get me 1600 MB/s and saturate a 10GbE link, and that lets me write the data out again at 800 MB/s to my backing store (4 drives, so less speed). No write-cache SSD in sight. Granted, I'm not on the same scale as you, but I'm not far off with 24-bay boxes. Then again, my network and disk I/O are offloaded away from the CPU. With that many drives, an SSD just sucks, because as soon as it bottlenecks, it bottlenecks badly, usually when it decides to do housekeeping. I'd retest using 8 drives in hardware RAID-0 (I/O offloaded) and use DrivePool to balance to a pool of 4 drives. See what happens. I think the SSD, constantly calling on the CPU to say I/O is waiting, is gimping your system. Fun fact: a good way to hang a system is to daisy-chain enough NVMe drives to keep the CPU in a permanent I/O wait state.
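A quick check of the first sentence: 10 Gb/s is 1,250 MB/s of raw line rate before any protocol overhead, so 1,600 MB/s from the array is indeed more than the link can carry. The RAID-6 helper assumes only `drives - 2` disks' worth of each stripe is user data, which is a simplification; both function names are illustrative.

```python
def line_rate_mb_s(gbit_per_s: float) -> float:
    """Raw link rate in MB/s (decimal units), ignoring Ethernet/IP/TCP overhead."""
    return gbit_per_s * 1e9 / 8 / 1e6


def raid6_per_drive_mb_s(array_mb_s: float, drives: int) -> float:
    """Sequential throughput per data drive in a RAID-6 set.

    Simplification: treats drives - 2 disks' worth of each stripe as user
    data and ignores controller and filesystem effects.
    """
    if drives < 4:
        raise ValueError("RAID-6 needs at least 4 drives")
    return array_mb_s / (drives - 2)


if __name__ == "__main__":
    link = line_rate_mb_s(10)
    print(f"10GbE raw line rate: {link:.0f} MB/s")
    print(f"1600 MB/s exceeds it: {1600 > link}")
    print(f"per data drive: {raid6_per_drive_mb_s(1600, 8):.1f} MB/s")
```

Under that model each of the 6 data drives contributes about 267 MB/s, in line with the 200+ MB/s per drive quoted elsewhere in these posts.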

-

At a regular workload of 6 TB/day, I'd recommend 6- or 8-drive hardware RAID with NVMe cache, with a JBOD DrivePool as a mirror, if like me you want to avoid reaching for the tapes. A 9460-8i is pricey (assuming you use an expander), but software pooling solutions don't really cut it under a medium-to-heavy load. Hardware RAID is just fine so long as SATA disks are avoided; SAS disks have a good chance of correction if T10 is used. I've had the odd error once in a blue moon during a rebuild that would have killed a SATA array, whereas in the SAS drive log it just goes in as a corrected read error and carries on. I can't afford huge SAS spinning rust, as it's very expensive, so I have to compromise: I run SAS-drive RAID and back it with a large SATA JBOD in a pool. So the pool is useful in a specific niche use case; in my case, sustaining a tape drive write over several hours. The big problem with DrivePool is balancing, packet writes, and fragmentation. All those millisecond delays add up fast. What I've said isn't really any help, but what you wrote is exactly why I had to bite the bullet, suck it up, and go SAS hardware RAID.
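To put the 6 TB/day workload in throughput terms, a sketch in decimal units: averaged over 24 hours it is only about 69 MB/s, but if the same volume has to land within a shorter window (the 8-hour window below is a hypothetical example, not from the post), the required rate triples to about 208 MB/s. `required_mb_s` is an illustrative helper.

```python
def required_mb_s(tb_per_day: float, window_hours: float = 24.0) -> float:
    """Sustained MB/s needed to move `tb_per_day` (decimal TB) within the window."""
    return tb_per_day * 1e12 / (window_hours * 3600) / 1e6


if __name__ == "__main__":
    print(f"spread over 24 h: {required_mb_s(6):.1f} MB/s")
    print(f"within 8 h:       {required_mb_s(6, 8):.1f} MB/s")
```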

-

UltimateDefrag has a boot-time defrag for NTFS metadata files such as $MFT. I go into Tools/Settings and click the boot-time defrag option. I set the starting cluster to 100000000 (on a 12 TB disk). You can set your own location, but about 5% in seems reasonable (giving 0% to 10% track seek for writes); 10% into the disk would be optimal (0% to 20% seek). I leave the order as the default. I change the free space after the reserved zone to a reasonable size (in my case 752 MB, to round it up to a 1 GB MFT). Then I tick the box to run the next time the system starts and click OK.

Naturally, a warning needs to be attached to this: you're playing with your disk's file tables. It can go really badly (unlikely, but possible if your system is unstable). To mitigate that risk, chkdsk /F MUST be run on the drive BEFORE. The disk should also have as little fragmentation as possible (ideally after a complete fragmented-files defrag pass).

As can be seen from the pic, most of my data is moved to the rear, so all my writes happen at the start of the disk near the MFT, which means the head doesn't move far when updating the MFT. I don't care about contiguous reads (I don't need speed other than for feeding the pesky tape drive), whereas writes being executed ASAP is crucial to me, since I don't use an SSD cache.
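The starting cluster can be converted into a position on the disk. This sketch assumes 4 KiB clusters (the NTFS default for volumes up to 16 TB) and treats a 12 TB disk as exactly 12 × 10^12 bytes, ignoring partition offsets; the helper names are illustrative. Under those assumptions cluster 100000000 sits about 3.4% into the disk, in the same ballpark as the roughly 5% aimed for, and a 5% position would be around cluster 146 million.

```python
def cluster_offset_fraction(start_cluster: int, disk_bytes: int,
                            cluster_bytes: int = 4096) -> float:
    """Fraction of the way into the volume at which `start_cluster` begins."""
    return start_cluster * cluster_bytes / disk_bytes


def cluster_for_fraction(fraction: float, disk_bytes: int,
                         cluster_bytes: int = 4096) -> int:
    """Cluster number that sits `fraction` of the way into the volume."""
    return int(fraction * disk_bytes // cluster_bytes)


if __name__ == "__main__":
    disk = 12_000_000_000_000  # nominal 12 TB (assumption)
    print(f"cluster 100000000 is {cluster_offset_fraction(100_000_000, disk):.2%} in")
    print(f"5% in is cluster {cluster_for_fraction(0.05, disk):,}")
```

If the volume was formatted with larger clusters, pass `cluster_bytes` accordingly; with 8 KiB clusters the same cluster number lands twice as far in.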

-

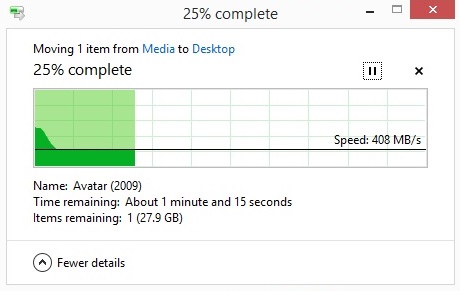

For me, a pair of 12 TB drives got me 400 MB/s, which is decent enough for me to drop the SSD cache (I found it killed the speed). 15 disks in a pool should blow away 4 SSDs in straight-line speed. I write 1 TB/hour when doing a tape restore.
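Converting between the two units used in this post, as a decimal-unit sketch with illustrative helper names: 400 MB/s is 1.44 TB/hour, and the 1 TB/hour tape restore works out to about 278 MB/s, so a pair of drives at 400 MB/s has headroom over the restore rate.

```python
def mbs_to_tb_per_hour(mb_per_s: float) -> float:
    """Convert MB/s to TB/hour (decimal units)."""
    return mb_per_s * 1e6 * 3600 / 1e12


def tb_per_hour_to_mbs(tb_per_hour: float) -> float:
    """Convert TB/hour to MB/s (decimal units)."""
    return tb_per_hour * 1e12 / 3600 / 1e6


if __name__ == "__main__":
    print(f"400 MB/s = {mbs_to_tb_per_hour(400):.2f} TB/h")
    print(f"1 TB/h   = {tb_per_hour_to_mbs(1):.1f} MB/s")
```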

-

The 9207-8i or -8e (SAS2, PCIe 3) is great for light use. These are cheap (in IT mode). A cheap Intel SAS2 expander gets a system off to a good start. Later on, when usage ramps up, a 9300 or 9305 (SAS3) keeps the controller cooler and doesn't bottleneck as easily with a lot of drives. These aren't cheap, but they can be found for reasonable prices. Eventually, backing up to tape (rather than to cheap mirrors) becomes important, and then it's hardware RAID-6 on SAS3. These are the definition of very expensive, but they do keep a tape drive fed at a sustained 300 MB/s (the RAID-6 part of the pool) while Plex is reading 50 GB MKV files for its chapter thumbnail task (the JBOD mirror part of the pool). A 9440-8i (SAS drives only, with T10-PI) with a SAS3 expander is a wonderful thing in action. Also, avoid cheap Chinese knock-offs. It amuses me how people spend thousands of pounds on cheap drives and refuse to pay £300 for the controller.