darkly

Any word on this yet?

-

I have to toss in a vote for allowing multiple cache drives as well, although for different reasons. I'm not really having an I/O issue; rather, I'd like to be able to transfer large amounts of data to my CloudDrive so that Windows and applications see the files as being ON the drive, and leave the uploading to run in the background for however long it takes to actually move the data to the cloud. It's a pain when my single cache drive reaches max capacity and I can only make files visible on the drive at throttled speeds.

-

Is this still true? I'm looking at the CloudPart folder in one of my cache drives and I'm seeing over 5000 files in that directory.

Edit: Does this apply to data you have queued to upload as well? For instance, could I not use a pool of a small SSD and a large HDD as a cache to increase the total amount of data I can "transfer" to the CloudDrive and leave it to upload in the background?

-

I have two SSDs that I want to use as a cache for my CloudDrive. This system will be writing extensively to this CloudDrive, so I need it to be durable and reliable over the long term, especially when I neglect to monitor the health of the individual drives. I was considering either using a RAID 1 between the two drives, or pooling the two together using DrivePool and either duplicating everything between the two drives or using both drives without duplication to spread out the wear. I have Scanner installed as well to monitor the drives, and it could automatically evacuate one of the drives (in the non-duplicated DrivePool case) if it detects anything. Speed isn't as important to me as reliability, but I don't want to unnecessarily sacrifice it either. Which option do you think is preferable here?

- RAID 1
- DrivePool w/ Duplication
- DrivePool w/o Duplication + Scanner

-

I want to set something up a particular way, but I'm not sure how to go about it, or if it's even possible. Maybe someone with more experience with complex setups could offer some insight?

Say I have two separate CloudDrives, each mounted to its own dedicated cache drive (let's call them drives X and Y). Both X and Y are SSDs. Let's also say I have the target cache size for each CloudDrive set to 50% of its cache drive's total capacity; for instance, if drive X is 1TB, the CloudDrive mounted to it has its target cache size set to 500GB. This means (ignoring the time it takes to transfer and any emptying of the cache that happens during that time) that at any point I can upload 500GB to that drive before the cache drive is full and I have to wait to upload more. We'll come back to this in a second.

I also have a large number of high-capacity HDDs that I plan to pool in DrivePool. Let's assume I have four 10TB HDDs. I could pool these into two sets of two, so each pool would have a total capacity of 20TB. Now I want to use these HDDs as a sort of "cache overflow" for the CloudDrives. In other words, if I attempt to transfer data onto a CloudDrive that exceeds its cache drive's available capacity, the excess would be stored on the HDD pool instead.

Obviously this is quite simple if I do what I just described and make two separate pools of HDDs. I can then pool each HDD pool with one SSD and be done. Using the example sizes given previously, that would give each CloudDrive 20.5TB of unused space available to cache any uploads. However, this doesn't seem very efficient to me, mainly because I'm rarely writing to both CloudDrives at the same time. It would be far more efficient if I could put all the HDDs into one pool, and then put that one pool into two pools simultaneously: one pool with the HDD pool + drive X, and another pool with the same HDD pool + drive Y.

Unfortunately, this is not possible, as DrivePool only allows drives (and pools) to be in one pool at a time. If it were possible, though, that would give each CloudDrive potentially up to 40.5TB of unused space immediately available for caching.

One potential workaround I was considering (but have not attempted yet) is to first pool all the HDDs together, and then use CloudDrive in Local Disk mode to create two CloudDrives, both using the HDD pool as the backing media (rather than a cloud storage platform). I would then pool each of those CloudDrives with an SSD, and finally use the resulting pools as the cache for each of the two original CloudDrives. The main thing that worries me about this approach is excessive I/O and how much that would bottleneck my transfer speeds. It seems like overkill for this very simple problem, and I seriously hope there's a simpler workaround.

Of course, this would be a lot easier if DrivePool allowed drives to be in multiple pools. I'm not quite sure why this limitation exists in the first place, since it looks like the contents of a DrivePool are just determined by the contents of a special folder placed on the drive itself. Couldn't you have two of those folders on a drive? Maybe someone can enlighten me on that as well.

I hope I've been clear enough in describing my problem. Anyone have any thoughts/ideas?
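
For reference, the capacity figures in the post above can be reproduced with a quick sketch. The function and parameter names are hypothetical, and it assumes (as the post does) that the SSD space outside the target cache size, plus the whole HDD pool, is what's available for data waiting to upload. It says nothing about how CloudDrive or DrivePool actually allocate space.

```python
def upload_headroom_tb(ssd_tb, target_cache_fraction, hdd_sizes_tb):
    """Rough space (in TB) available for pending uploads on one CloudDrive.

    Assumption: the part of the SSD not reserved by the target cache size,
    plus every HDD in the overflow pool, can hold data queued for upload.
    """
    ssd_free_tb = ssd_tb * (1 - target_cache_fraction)
    return ssd_free_tb + sum(hdd_sizes_tb)


# Two separate HDD pools of two 10TB drives, one pool per CloudDrive.
split_pools = upload_headroom_tb(1.0, 0.5, [10, 10])

# One shared pool of all four 10TB drives (not possible in DrivePool today).
shared_pool = upload_headroom_tb(1.0, 0.5, [10, 10, 10, 10])

print(f"Split pools: {split_pools} TB per CloudDrive")
print(f"Shared pool: {shared_pool} TB per CloudDrive")
```

With a shared pool the two CloudDrives would be drawing on the same 40TB, so the larger figure only holds while one drive is being written to at a time, which is the usage pattern described in the post.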

-

I can second this. Been using beta .1316 on my Plex server for months now and things have been running smoothly for the most part (other than some API issues that we never determined the exact reason for but those have mostly resolved themselves). The only reason I brought any of this up is I'm finally getting around to setting up CloudDrive/DrivePool on my new build and wasn't sure what versions I should install for a fresh start. Looks like I'll just stick with beta .1316 for now. Thanks!

-

Confusing, because v1356 is also suggested by Christopher in the first reply to this thread. Also, the stable changelog doesn't mention it at all (https://stablebit.com/CloudDrive/ChangeLog?Platform=win), and the version numbers for the stable releases are completely different from the betas right now (1.1.6 vs 1.2.0 for all betas, including the ones that introduced the fixes we're discussing).

-

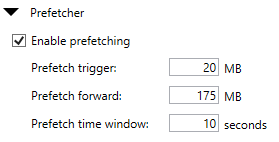

Are you using this for plex or something similar as well? I've been uploading several hundred gigabytes per day over the last few days and that's when I'm seeing the error come up. It doesn't seem to affect performance or anything. It usually continues just fine, but that error pops up 1-3 times, sometimes more. My settings are just about the same with some slight differences in the Prefetcher section. I've seen a handful of conflicting suggestions when it comes to that here. This is what I have right now: Don't think that should cause the issues I'm seeing though... Are you also using your own API keys?

-

I should probably mention I'm using my own API keys, though I don't see how that should affect this in this way (I was using my own API keys before the upgrade too). I'm also on a gigabit fiber connection and nothing about that has changed since the upgrade. As far as I can tell, this feels like an issue with CD.

-

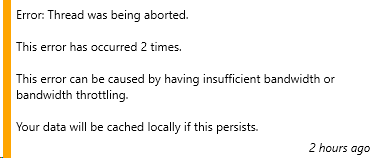

I'm noticing that since upgrading to 1316, I'm getting a lot more I/O errors saying there was trouble uploading to google drive. Is there some under the hood setting that changed which would cause this? I've noticed it happen a few times a day now where previously it'd hardly ever happen. Other than that, not noticing any issues with performance. EDIT Here's a screenshot of the error:

-

I had the same experience the other night. I'm just worried about potential issues in the future with directories that are over the limit as I mentioned in the comment above. Overall performance has become much better as well for my drives shared over the network (but keep in mind I was upgrading from a VERY old version so that probably played a factor in my performance previously).

-

Are there any other under-the-hood changes in 1314 vs the current stable that we should be aware of? Someone mentioned a "concurrentrequestcount" setting on a previous beta. What does that affect? What else should we be aware of before upgrading? I'm still on quite an old version and I've been hesitant to upgrade, partly because losing access to my files for over 2 weeks was too costly. Apparently the new API limits are still not being applied to my API keys, so I've been fine so far, but I know I'll have to make the jump soon. Wondering if I should do it on 1314 or wait for the next stable. There doesn't seem to be an ETA on that yet though.

Afterthought: Any chance Google changes something in the future that affects files already in Drive that exceed the 500,000 limit? Could a system be implemented to move those files slowly over time while the CloudDrive remains accessible, in case something does get enforced in the future?