Posts: 14

Days Won: 1

chaostheory last won the day on July 20 2017

chaostheory had the most liked content!

chaostheory reacted to an answer to a question:

Current development

-

Of course, but we need to know before we keep using the software. Whatever the case, if there's nobody to maintain the code, then a slight change by a cloud provider could break functionality (maximum files per folder @ Google, anyone?), or DrivePool could stop working completely after another Windows update, and we would be left in the dust, which sucks.

-

It's time to come clean, @Christopher (Drashna). I think we paid for the software, so we deserve the truth. If something happened to Alex, we should know. It doesn't matter if he caught COVID, was run over by a bus, or simply is not interested in developing the software anymore, because the outcome is the same :/

-

chaostheory reacted to an answer to a question:

Google Drive: The limit for this folder's number of children (files and folders) has been exceeded

-

Migration on my drives stopped, and it looks like it can't finish for now. The software was moving files into a hierarchical structure yesterday, but it stopped, and now I'm only getting this in my service log:

0:05:10.5: Warning: 0 : [ApiHttp:40] Server is temporarily unavailable due to either high load or maintenance. HTTP protocol exception (Code=ServiceUnavailable).

-

You will be forced to... eventually. I have 6 CloudDrives, 50+ TB each, with for sure more than 500k chunks in each of them. On Sunday, only one of those six drives was unable to write/upload data; the rest were uploading perfectly fine. This is on 2 different accounts, mind you. I suspect Google is rolling out those API changes gradually, not on all accounts at once, but sooner or later every account will be restricted by this new rule of 500k files per folder.
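For a rough sense of scale, here is a minimal sketch of how many chunk files a drive of a given size implies. The 20 MiB chunk size and the helper names `estimate_chunks` and `exceeds_folder_limit` are assumptions for illustration only, not values read from CloudDrive; only the 500k-per-folder figure comes from the post above.

```python
FOLDER_CHILD_LIMIT = 500_000  # per-folder limit quoted in the post


def estimate_chunks(drive_bytes: int, chunk_bytes: int) -> int:
    """Number of chunk files needed to hold drive_bytes (ceiling division)."""
    return -(-drive_bytes // chunk_bytes)


def exceeds_folder_limit(drive_bytes: int, chunk_bytes: int,
                         limit: int = FOLDER_CHILD_LIMIT) -> bool:
    """True if all chunks stored in one flat folder would exceed the limit."""
    return estimate_chunks(drive_bytes, chunk_bytes) > limit


if __name__ == "__main__":
    drive = 50 * 10**12   # 50 TB, decimal units (assumption)
    chunk = 20 * 2**20    # 20 MiB chunk size (assumption)
    print(estimate_chunks(drive, chunk), exceeds_folder_limit(drive, chunk))
```

Under these assumptions a single 50 TB drive ends up well above 500k chunks, which would explain why a flat folder layout runs into the limit.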

-

[BUG] Google Drive throwing Internal Error on upload offset bigger than size

chaostheory replied to steffenmand's question in General

Same here.

13:43:05.8: Warning: 0 : [TemporaryWritesImplementation:414] Error performing read-modify-write, marking as failed (Chunk=131, Offset=0x00000000, Length=0x01400500). Internal Error

13:43:05.8: Warning: 0 : [WholeChunkIoImplementation:414] Error on write when performing master partial write. Internal Error

13:43:05.8: Warning: 0 : [WholeChunkIoImplementation:414] Error when performing master partial write. Internal Error

13:43:05.8: Warning: 0 : [IoManager:414] HTTP error (InternalServerError) performing Write I/O operation on provider.

13:43:05.8: Warning: 0 : [IoManager:414] Error performing read-modify-write I/O operation on provider. Retrying. Internal Error

13:43:09.6: Warning: 0 : [ApiGoogleDrive:414] Google Drive returned error (internalError): Internal Error

13:43:09.6: Warning: 0 : [ApiHttp:414] HTTP protocol exception (Code=InternalServerError).

13:43:09.6: Warning: 0 : [ReadModifyWriteRecoveryImplementation:414] [W] Failed write (Chunk:131, Offset:0x00000000 Length:0x01400500). Internal Error

The last 45 MB keeps uploading endlessly. It hasn't finished since yesterday.
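To make the hex values in the log above easier to read, here is a small sketch that pulls the chunk number, offset and length out of a log line and converts them to decimal. The regular expression and the `parse_chunk_fields` name are my own assumptions based only on the two line shapes quoted here, not a documented CloudDrive log format.

```python
import re

# Matches both "(Chunk=131, Offset=0x..., Length=0x...)" and
# "(Chunk:131, Offset:0x... Length:0x...)" as they appear in the quoted log.
CHUNK_RE = re.compile(
    r"Chunk[=:](\d+),\s*Offset[=:](0x[0-9A-Fa-f]+),?\s+Length[=:](0x[0-9A-Fa-f]+)"
)


def parse_chunk_fields(line: str):
    """Return (chunk, offset_bytes, length_bytes), or None if no match."""
    match = CHUNK_RE.search(line)
    if match is None:
        return None
    chunk, offset, length = match.groups()
    return int(chunk), int(offset, 16), int(length, 16)


if __name__ == "__main__":
    sample = ("[W] Failed write (Chunk:131, Offset:0x00000000 "
              "Length:0x01400500). Internal Error")
    print(parse_chunk_fields(sample))
```

The length 0x01400500 works out to 20,972,800 bytes, i.e. 20 MiB plus 1,280 bytes, so the failing write appears to cover a whole chunk rather than just the remaining 45 MB.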

chaostheory started following Drive is performing recovery forever... and Specific Balancing settings

-

Hello, I'm thinking about adjusting my balancing settings, but can't seem to find the option I want. I have SSD Optimizer with 1 TB of space (2x 1 TB SSD in RAID1) and a 300 TB pool behind it. Right now my Balancer settings are as follows:

Automatic Balancing: Balance every day at 00:30

Automatic Balancing - Triggers: If the balance ratio falls below: 90%

[x] Or if at least this much data needs to be moved: 100 GB

The thing is, this doesn't satisfy my needs. There are days when I write a few gigabytes to the pool and there are days when I write 700 GB to the pool. 2 questions:

A ) Is there any setting that will allow me to always keep my SSD Optimizer partition at 50% full? That means the last 500 GB of data I wrote always remains on the SSD partition. If I write an additional 250 GB and my SSD partition holds 750 GB of data, then automatic balancing at 00:30 only moves data to the 300 TB pool until there's 500 GB left on the SSD partition and leaves the rest on the SSD. If that's not possible, then maybe plan B:

B ) Balance every day at 00:30, but only balance 300 GB per night, no matter how much free space is left on the SSD partition.

Best regards
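To spell out the arithmetic behind the two plans, here is a minimal sketch of how much data each one would move per night. These functions are purely hypothetical illustrations of the behavior I'm asking for; they are not DrivePool settings or any DrivePool API.

```python
def plan_a_move_gb(ssd_used_gb: float, keep_gb: float = 500) -> float:
    """Plan A: move only what exceeds the amount to keep on the SSD."""
    return max(0.0, ssd_used_gb - keep_gb)


def plan_b_move_gb(ssd_used_gb: float, nightly_cap_gb: float = 300) -> float:
    """Plan B: move everything on the SSD, capped at a fixed amount per night."""
    return min(ssd_used_gb, nightly_cap_gb)


if __name__ == "__main__":
    # Example from the post: 750 GB on the SSD partition at 00:30.
    print(plan_a_move_gb(750))  # moves 250 GB, leaving 500 GB on the SSD
    print(plan_b_move_gb(750))  # moves 300 GB, leaving 450 GB on the SSD
```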

-

Google Drive is suddenly marked as experimental provider?

chaostheory replied to red's question in General

srcrist, I think we can be cautious, and we paid for that software, so we have the right to know what it all means, preferably directly from the developer. I am concerned because the last time a provider got marked as experimental, it was the beginning of the end, not just for the API key but for a lot of people's data. ACD, am I right? This warning inside the software is vague, IMO. Anyway, this Google API request is made through Google Cloud Platform. Is this free?

Google Drive is suddenly marked as experimental provider?

chaostheory replied to red's question in General

And what does "approaching usage limit" mean? If it means that one day our CloudDrive will suddenly not be accessible, then I think we deserve to know in advance.

chaostheory reacted to a question:

Google Drive is suddenly marked as experimental provider?

-

chaostheory reacted to an answer to a question:

File System damaged - The "Repair Volume" window does not open

-

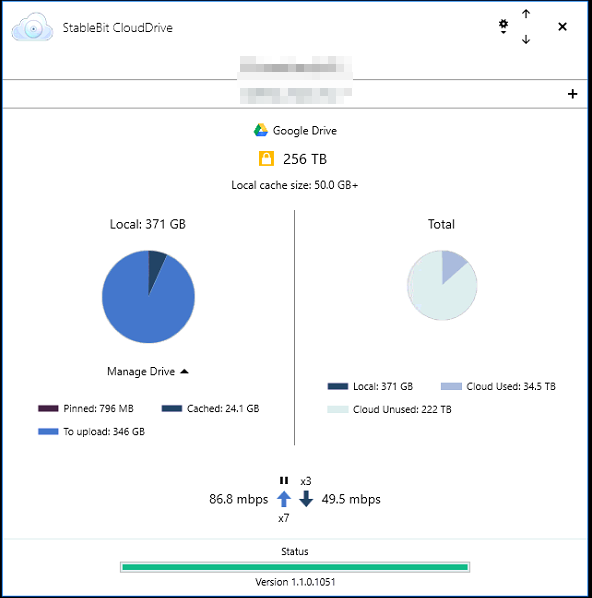

Hello guys, So... I downloaded some Linux ISOs, copied them to my CloudDrive (400+ GB of data) and left the PC on overnight. It was uploading at ~80 Mbps. The next morning I checked on it and the software is kind of... stuck. It says there's 346 GB left to upload. It's uploading at 100 Mbps and... this never changes. I have been uploading at 100 Mbps for a few hours straight and CloudDrive still says it needs to upload exactly 346 GB of data. Meanwhile, cFosSpeed reports I have uploaded 250+ GB of data and the "To upload" counter hasn't moved a single bit. I am confused. Any help?

-

Hello guys, So, I came home from work today and found out that there had been a power outage in my area. I turned on my devices and my PC booted without any problem. After launching CloudDrive I got a message: "This drive is performing recovery" and "Recovering..." on the bar at the bottom. So... I had a local cache of about 50 GB on a RAID5 partition, and CloudDrive is reading data at 240 MB/s constantly. So far it has read 3,54 TB and counting... I can't see the end of it; it's still recovering and I'm nowhere close to mounting my drive. What can I do except wait? It has already taken hours... Can't I just decide I don't care about local cache recovery (there was nothing important for sure) and mount the drive without recovering? The chunks are still on my GDrive, so, theoretically, the drive should be fine.

-

KiaraEvirm reacted to a question:

Help moving 6,5 TB from 1 GDrive to... the same GDrive

-

Help moving 6,5 TB from 1 GDrive to... the same GDrive

chaostheory replied to chaostheory's question in Providers

So... I have already tried that. I created a VM with 4 vCPU cores, 6 GB of RAM, a 100 GB SSD and Windows Server 2016. I installed StableBit CloudDrive right away, mounted my Plex drive on the VM and tried downloading a single movie with the copy-paste method. I'm getting 30 GB/h at best. I wonder what's wrong there... I've shut down the VM for now; there's no point migrating until I fix it.

Help moving 6,5 TB from 1 GDrive to... the same GDrive

chaostheory replied to chaostheory's question in Providers

Wow, you already know so much. Reading that, I think I will wait just a little bit more and see what happens next in your case, since I guess I would burn through those "trial" $300 pretty quickly running these tests.

Antoineki reacted to a question:

Help moving 6,5 TB from 1 GDrive to... the same GDrive

-

jaynew reacted to a question:

Help moving 6,5 TB from 1 GDrive to... the same GDrive

-

Hello guys, I found myself in a pretty tough situation. When I first heard about StableBit CloudDrive and unlimited GDrive, I wanted to set up my "Unlimited Plex" as fast as possible. This resulted in creating a cloud drive with half-assed settings, like a 10 MB chunk size and so on. Now that I know this is not optimal and it's too late to change the chunk size, I need to create another CloudDrive with better settings and transfer everything to it. Downloading it and uploading it again from my local PC will take forever and I want to avoid that. I was thinking about using Google Cloud Compute, so my PC won't have to be turned on and, more importantly, there won't be a problem with uploading 6,5 TB again, which, on my current connection, will take roughly 2 weeks. That's assuming it goes smoothly without any problems. Is there any guide on how to do this? I don't mind if it's a reasonably priced paid option. Any help?
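For reference, here is a quick sketch of the arithmetic behind the "roughly 2 weeks" estimate. It assumes decimal terabytes and a perfectly steady transfer with no overhead; the function names are hypothetical and exist only for this illustration.

```python
SECONDS_PER_DAY = 86_400


def implied_rate_mbps(size_tb: float, days: float) -> float:
    """Average rate in Mbps needed to move size_tb (decimal TB) in the given days."""
    bits = size_tb * 1e12 * 8
    return bits / (days * SECONDS_PER_DAY) / 1e6


def transfer_days(size_tb: float, rate_mbps: float) -> float:
    """Days needed to move size_tb (decimal TB) at a steady rate_mbps."""
    bits = size_tb * 1e12 * 8
    return bits / (rate_mbps * 1e6) / SECONDS_PER_DAY


if __name__ == "__main__":
    rate = implied_rate_mbps(6.5, 14)
    print(f"{rate:.1f} Mbps sustained for 14 days")
    print(f"{transfer_days(6.5, rate):.1f} days at that rate")
```

In other words, 6,5 TB in 2 weeks corresponds to a sustained upload of about 43 Mbps around the clock, which is why I'd rather do the copy inside the cloud.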