jak64950

Members-

Posts

19 -

Days Won

1

jak64950 last won the day on June 4 2018

jak64950 had the most liked content!

jak64950's Achievements

Member (2/3)

1

Reputation

-

Thank you, sometimes the complexity of the software goes over my head.

-

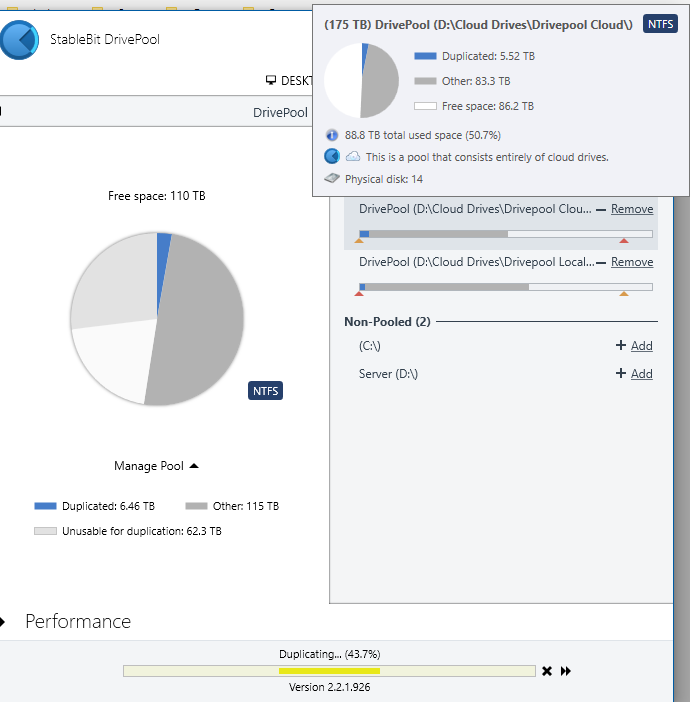

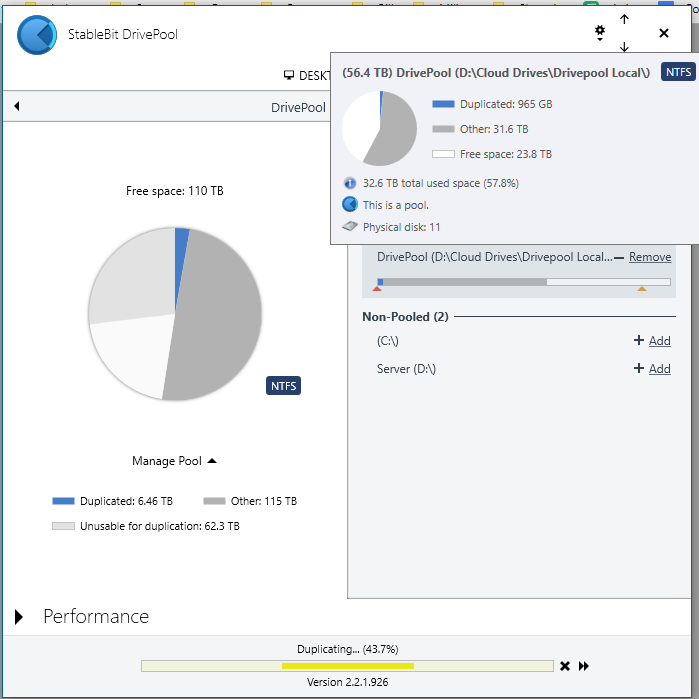

Well, I'm kind of an idiot. I guess I never remeasured after moving all my files into DrivePool. Now both pools are showing the exact same duplication (which I guess makes sense, since each file is duplicated across both pools) and DrivePool is no longer overwriting my files. I guess my final question is this: the local pool is showing a small amount of unduplicated files (about 20GB). Since the local pool should only hold duplicated files, will DrivePool balance this unduplicated data onto the cloud pool? And as a follow-up, if I disable duplication, will DrivePool delete the cloud copy and have to rebalance later, or does it know to delete the copy on the local pool?

-

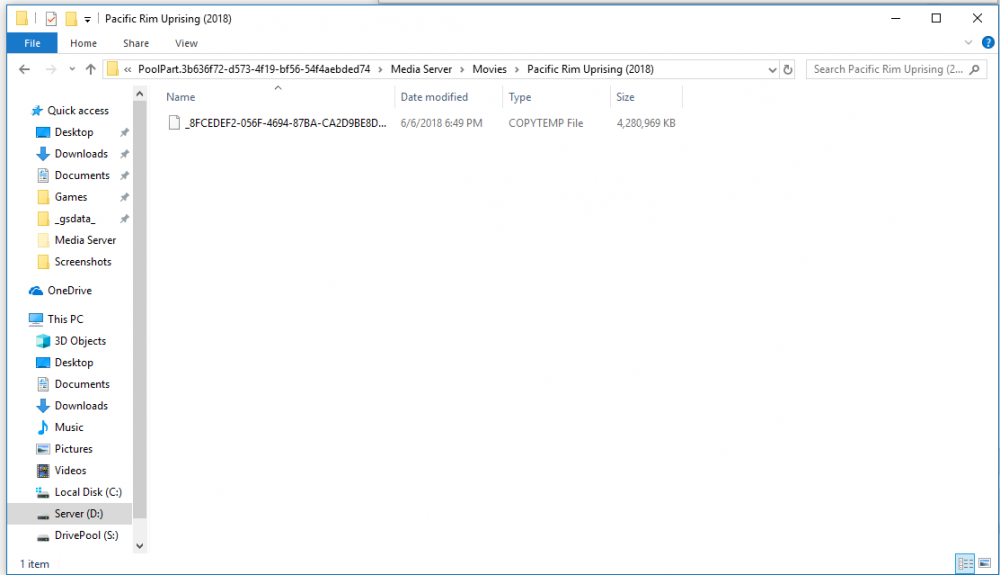

So I have the main folder (Movies) set to x2 duplication and I want about 2000 items within that folder set to no (x1) duplication. The terminal confirms the duplication count was changed, but the UI doesn't show it. I closed and reopened the UI and still no change.

-

Is this working correctly? I typed in:

dpcmd set-duplication "S:/Media Server/Movies/xXx (2002)" 1

Response:

Duplication count set to 1 on '\\?\S:\Media Server\Movies\xXx (2002)\'.

It doesn't update in the UI though. What would be the format for multiple folders?
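On the multiple-folder question: nothing in this thread shows whether dpcmd accepts more than one path per call, so a cautious sketch is to run the same command once per folder. The Python below assumes dpcmd is on PATH and reuses only the set-duplication form quoted above (path, then count). The function names and the example folder list are hypothetical, and it defaults to a dry run that only prints the commands.

```python
import subprocess
from pathlib import PureWindowsPath


def build_commands(root, folder_names, count):
    """Return one dpcmd argument list per folder (nothing is executed here)."""
    commands = []
    for name in folder_names:
        # Join the pool folder and the subfolder name using Windows path rules.
        path = str(PureWindowsPath(root) / name)
        commands.append(["dpcmd", "set-duplication", path, str(count)])
    return commands


def apply_duplication(root, folder_names, count, dry_run=True):
    """Print each command; run it only when dry_run is False."""
    for cmd in build_commands(root, folder_names, count):
        print(" ".join(cmd))
        if not dry_run:
            # Assumes dpcmd is on PATH; check=True raises on a non-zero exit code.
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Hypothetical folder list; replace with the real folder names.
    apply_duplication(r"S:\Media Server\Movies", ["xXx (2002)"], 1)
```

Passing the arguments as a list avoids manual quoting of folder names that contain spaces or parentheses.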

-

Ok, images attached. What I am asking is, shouldn't all the "duplicated" files be in the Cloud Pool? I know you've said somewhere that there really are no duplicates, but if/when I disable duplication, DrivePool will have to decide which pool to delete one copy from. As long as I have the usage limiter set so that all unduplicated files go on the Cloud pool, it should delete the copy on the local pool, right? As for the overwriting, as you can see it is making a duplicate on my local pool even though that exact file was already there (duplicated with GoodSync rather than DrivePool), if that makes sense. In other words, every file I want duplicated already exists on both the cloud pool and the local pool, but (even after placing all the files in the hidden folders) DrivePool will still overwrite existing files to make duplicates. Again, this isn't a big deal.

-

Yes, multi-select would be awesome. I have four 10TB IronWolfs and they are great...just a tad expensive.

-

I primarily use newsgroups.

-

jak64950 started following Request -- Better Duplication Settings and Duplication Deleting from CloudDrive

-

Hello, So I have DrivePool and CloudDrive set up with my local pool and CloudDrive pool under a "master" pool so that I have one copy on the cloud and certain copies on my local storage. On the master pool, I have the local pool checked for only duplicated data, and the CloudDrive pool checked for only unduplicated data. Before I set up DrivePool's duplication, I was using GoodSync to sync any data I wanted to be saved locally. DrivePool apparently has to make its own "duplicate" as it cannot tell one already exists, which is fine, as it just overwrites my old file. When looking at the master pool's duplication settings, it says something like 2TB duplicated data on the CloudDrive pool and 500GB duplicated data on the local pool. Does that mean if I disable duplication on the files contained in that 500GB, it will delete the "duplicate" from my CloudDrive instead of local? This is definitely not wanted as I would have to re-upload any deleted data. Maybe I overlooked a setting?

-

@Dave Hobson I am in fact using StableBit CloudDrive. I'm pretty sure I could still do what you are mentioning, but it would kinda complicate my system. I actually have two Plex servers, one on Linux (plexdrive) and one on Windows (StableBit CloudDrive), each hosting from different cloud accounts which I sync using GoodSync. Adding extra folders and moving stuff around would mess with my scripts on Linux and throw everything off. Thanks for the suggestion though.

-

red reacted to a question:

Request -- Better Duplication Settings

-

Hello, So I'm running a 100TB Plex server with only 60TB of local storage and would like to duplicate only certain folders from my CloudDrives to local. So for example, let's say I want 1000 of my 3000 movies on the local storage. As far as I can tell, the only way to do this (and what I've been doing) is going one by one through each folder and setting duplication. Even just being able to select multiple folders or having a config file would be a great improvement to the current system.
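To make the config-file idea concrete, here is a rough sketch of what such a file and its parser might look like. This is not an existing DrivePool feature: the line format (duplication count, a pipe, then the folder path), the function name, and the sample folder names are all invented for illustration.

```python
def parse_duplication_list(text):
    """Parse lines of the form '<count> | <folder path>' into (path, count) pairs."""
    entries = []
    for line_no, raw in enumerate(text.splitlines(), start=1):
        line = raw.strip()
        # Skip blank lines and '#' comments.
        if not line or line.startswith("#"):
            continue
        count_str, sep, path = line.partition("|")
        if not sep or not count_str.strip().isdigit() or not path.strip():
            raise ValueError(f"line {line_no}: expected '<count> | <path>'")
        entries.append((path.strip(), int(count_str)))
    return entries


if __name__ == "__main__":
    # Hypothetical file contents; the folder names are examples only.
    sample = """
    # folders to keep a local copy of
    2 | S:\\Media Server\\Movies\\Alien (1979)
    1 | S:\\Media Server\\Movies\\xXx (2002)
    """
    for path, count in parse_duplication_list(sample):
        print(count, path)
```

Each (path, count) pair could then be handed to whatever tool sets duplication per folder, so the whole list is maintained in one place instead of folder by folder.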

-

If you want to downgrade, uninstall StableBit CloudDrive and re-install the version you want.

-

I have the problem of having to re-index after reboot too. Takes ~2 hours to index for me.

-

I'm unable to mount my cloud drive after upgrading to .822. It starts indexing files, but then errors out, saying "There was an error communicating with the storage provider. An entry with the same key already exists." I'm using Google Drive. I've tried reauthorizing and restarting my computer, but it does the same thing.

-

I use Google Drive to stream Plex from. I have Fios 150/150 internet. My settings:

Chunk size: 100 MB

Download/upload threads: 20

Prefetch: trigger 1 MB, forward 100 MB, window 600 sec (I have also used 50 MB forward and a 300 sec window with no problems)

Cache size: 20 GB

This setup works well for me.
