Posts posted by JulesTop

-

-

Update for me.

My 55TB are also complete. About 5TB/day.

Drive is currently operating normally.

-

20 minutes ago, Simon83 said:

I use .1307 and I am at 19% after about 24h. Never had an error like this, but I had nothing better to do than update to the latest beta, and now I hate myself.

Do you think that deleting the cache would speed it up?

I would not delete the cache. I have a 270TB drive with 55TB used, and on 1307 I am upgrading at about 10% per 24-hour period, which is faster than before.

I would stay the course if I were you.

-

1 hour ago, sausages said:

Well I went to bed leaving the process running - last I checked, it showed it was about 15% done. Woke up this morning, and now it indicates it is 2% done... Not going to lie, this seems very glitchy.

EDIT: I checked the service log and noticed that it was throwing up the "Server is unavailable" error. I upgraded to 1306 which did away with these errors, and the progress counter is increasing as well. However, the Google Drive web interface doesn't show any file activity, and the service log doesn't show anything happening either (verbose tracing is on). I'm really disappointed in how poorly this migration seems to have been implemented, and concerned my drive is toast somehow.

Same behaviour for me. I submitted a ticket today to support.

-

1 hour ago, Chase said:

Unintentional Guinea Pig Diaries.

Day 7 - Entry 1

OUR SUPREME LEADER HAS SPOKEN! I received a response to my support ticket though it did not provide the wisdom I seek. You may call it an "Aladeen" response. My second drive is still stuck in "Drive Queued to perform recovery". I'm told this is a local process and does not involve the cloud yet I don't know how to push it to do anything. The "Error saving data. Cloud drive not found" at this time appears to be a UI glitch and is not correct, as any changes that I make do take regardless of the error window.

This morning I discovered that playing with fire hurts. Our supreme leader has provided a new Beta (1.2.0.1306). Since I have the issues listed above I decided to go ahead and upgrade. The Supreme Leader's change log says that it lowers the concurrent I/O requests in an effort to stop google from closing the connections. Upon restart of my computer the drive that was previously actually working now is stuck in "Drive Initializing - Stablebit CloudDrive is getting ready to mount your drive". My second drive is at the same "Queued" status as before. Also to note is that I had a third drive created from a different machine that I tried to mount yesterday and it refused to mount. Now it says "drive is upgrading" but there is no progress percentage shown. Seems that is progress but the drive that was working is now not working.

Seeking burn treatment. I hope help comes soon. While my data is replaceable it will take a LONG TIME to do. Until then my Plex server is unusable and I have many angry entitled friends and family.

End Diary entry.

I'm enjoying your diary entries as we are in the same boat... not laughing at you... but laughing/crying with you!

I know that this must be done to protect our data long term... it's just a painful process.

-

1 hour ago, darkly said:

I don't have most of these answers, but something did occur to me that might explain why I'm not seeing any issues using my personal API keys with a CloudDrive over 70TB. I partitioned my CloudDrive into multiple partitions, and pooled them all using DrivePool. I noticed earlier that each of my partitions only have about 7-8TB on them (respecting that earlier estimate that problems would start up between 8-10TB of data). Can anyone confirm whether or not a partitioned CloudDrive would keep each partition's data in a different directory on Google Drive?

I don't believe that this is the case. Clouddrive would organize it all as one single clouddrive, and everything would be in the same directory.

I do the same exact thing you do, but with 55TB volumes in one clouddrive, and they were all in the same folder.

-

On 6/27/2020 at 2:42 PM, ezek1el3000 said:

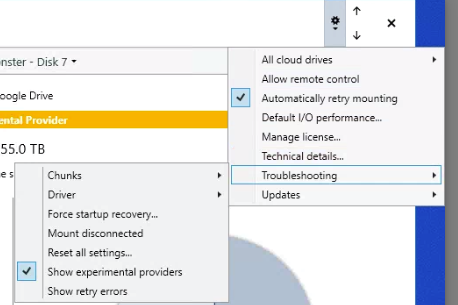

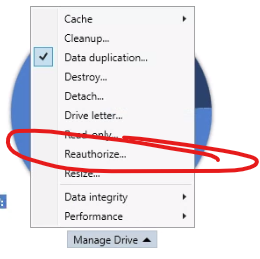

too late... still upgrading; slowly. Do these instructions still apply? Use your own Google Drive API keys

I don't think that applies because you can't 're-authorize' the drive once the process begins... at least no way that I can tell.

-

1 hour ago, Chase said:

on the drive that has completed the upgrade. I can see and access my data but when I try to change my I/O performance on that drive it tells me "Error saving data. Cloud drive not found"

EDIT: Prefetch is not working

Oh dear...

Did you submit a ticket to support? This will make sure @Alex can take a look and roll out a fix if it's on the software end. I don't think he's on the forums too often.

-

7 minutes ago, Chase said:

I have two drives with 10TB on one and 20TB on the other. I'm at about 17 hours in and the smaller drive is at 58% and the bigger drive is at 30%.

How long did it take for you? Did everything work when it was done? I have a lot of large files (~7GB...media) but not many small files.

I'm currently at 12.02%, and I started on June 24th at 6:45am... so it's been over 2 days.

I have generally larger files... from 15GB to 60GB, but I'm not sure what effect that has.
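For anyone wanting a rough ETA from the percentage counter, here is a minimal sketch that assumes the upgrade progresses linearly (which may well not hold, given the rate-limit errors reported in this thread). The start time and percentage are from my post above; the year is inferred from other dates on this page, and the "now" timestamp is hypothetical.

```python
from datetime import datetime, timedelta


def estimate_remaining(started: datetime, now: datetime, percent_done: float) -> timedelta:
    """Linearly extrapolate the time left from the progress made so far."""
    if not 0 < percent_done <= 100:
        raise ValueError("percent_done must be in (0, 100]")
    elapsed = now - started
    # If p% took `elapsed`, the remaining (100 - p)% takes elapsed * (100 - p) / p.
    return elapsed * ((100.0 - percent_done) / percent_done)


# Start time and percentage come from the post; `now` is a made-up timestamp.
started = datetime(2020, 6, 24, 6, 45)
now = datetime(2020, 6, 26, 12, 0)
remaining = estimate_remaining(started, now, 12.02)
print(f"roughly {remaining.total_seconds() / 86400:.1f} days left")
```

The same function works for the "10% per 24 hours" rate mentioned elsewhere: pass a 24-hour window and 10.0 as the percentage.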

-

2 hours ago, steffenmand said:

Is anyone having issues creating drives on .1305 ?

It seems to create the drives, but is unable to display them in the application!

I also notice that all of you get a % counter on your "Drive is upgrading"... mine doesn't show that - is there something up with my installation?

EDIT: A reboot made the new drive appear, but i can't unlock it. Service logs is spammed with:

0:02:04.0: Warning: 0 : [ApiHttp:64] Server is temporarily unavailable due to either high load or maintenance. HTTP protocol exception (Code=ServiceUnavailable).

EDIT 2:

After outputting verbose i can see:

0:04:42.8: Information: 0 : [ApiGoogleDrive:69] Google Drive returned error (userRateLimitExceeded): User Rate Limit Exceeded. Rate of requests for user exceed configured project quota. You may consider re-evaluating expected per-user traffic to the API and adjust project quota limits accordingly. You may monitor aggregate quota usage and adjust limits in the API Console: https://console.developers.google.com/apis/api/drive.googleapis.com/quotas?project=962388314550

As I'm not using my own API keys, the issue must be with Stablebit's

Did the drive updates make Stablebit's API keys go crazy?

I was wondering if this would happen as more and more people begin the upgrade process...

The issue is, I don't think you can re-authorize the drive once the upgrade process begins... at least I don't see a way to do this. Otherwise, I would switch back to my own key.

-

5 hours ago, steffenmand said:

i would guess chunks just gets moved and not actually downloaded/reuploaded! only upload is to update chunk db i suspect

I'm going through the process now.

It started at 6:45am this morning and is currently at 2.62% complete... I think this will take some time. But I don't think it actually downloads/re-uploads the chunks, it just moves them. I have 55TB in google drive BTW.

Also, as far as I can tell, the drive is unusable during the process, and there is no way of pausing the process to access the drive content. However, it will be able to resume from where it left off after a shutdown.

Having said all that, I have to say thanks to @Alex and @Christopher (Drashna) for mobilizing quickly on this, before it becomes a really serious problem. At least right now, we can migrate on our schedule, and until then there is a workaround with the default API keys.

-

1 hour ago, Jellepepe said:

This issue appeared for me over 2 weeks ago (yay me) and it seems to be a gradual rollout.

The 500.000 items limit does make sense, as clouddrive stores your files in (up to) 20mb chunks on the provider, and thus the issue should appear somewhere around the 8-10tb mark depending on file duplication settings.

In this case, the error actually says 'NonRoot', thus they mean any folder, which apparently can now only have a maximum of 500.000 children.

I've actually been in contact with Christopher for over 2 weeks about this issue, and he has informed Alex of the issue and they have confirmed they are working on a solution.

(other providers already have such issues, and thus it will likely revolve around storing chunks in subfolders with ~100.000 chunks per folder, or something similar.)

It is very interesting that you mention reverting to the non-personal api keys resolved it for you, which does suggest that indeed it may be dependent on some (secret) api level limit.

Though, that would not explain why adding something to the folder manually also fails...

@JulesTop Have you tried uploading manually since the issue 'disappeared' ? If that still fails, it would confirm that the clouddrive api keys are what are 'overriding' the limit, and any personal access, including web, is limited.

Either way hopefully there is a definitive fix coming soon, perhaps until then using the default keys is an option.

You called it. I just tried uploading a small file to the content folder directly from the Google drive web interface and got an 'upload failure (38)', same as before.

It looks like this restriction rollout is definitely happening. It will be fantastic if @Alex has a solution coming soon! But also good that the default keys are keeping us going for now.
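To picture the subfolder idea floated in the quote above, here is a purely illustrative sketch. It is not how CloudDrive actually lays out or migrates chunks (nothing in this thread confirms that); the folder naming scheme and the function name are made up, and the ~100,000 chunks per folder figure is just the guess from the quote.

```python
def subfolder_for_chunk(chunk_index: int, chunks_per_folder: int = 100_000) -> str:
    """Map a chunk number to a subfolder so no folder exceeds chunks_per_folder items.

    Hypothetical naming scheme: 'chunks-00000', 'chunks-00001', ...
    """
    if chunk_index < 0:
        raise ValueError("chunk_index must be non-negative")
    if chunks_per_folder <= 0:
        raise ValueError("chunks_per_folder must be positive")
    # Integer division groups consecutive chunk numbers into the same folder.
    return f"chunks-{chunk_index // chunks_per_folder:05d}"


for index in (0, 99_999, 100_000, 2_750_000):
    print(index, "->", subfolder_for_chunk(index))
```

With a layout like this, each folder stays well under the 500,000-item limit no matter how large the drive grows, since items nested in subfolders do not count against the parent.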

-

On 6/20/2020 at 2:12 PM, kird said:

I'm glad you were able to fix that nasty error on your own. By the way, which version of CloudDrive are you using? I can tell you that in my case there is a version that I keep as a real gem because it is like the "holy grail" for all kinds of errors that the drive may involve. This version is v1.1.0.1051

I was on the latest stable (I think the last 4 digits were 1249) when this occurred. After a couple of days, I updated to the latest Beta to try and solve it.

Also, I realized that at some point I removed my personal gdrive API credentials from the config file, and this is about the time the issue resolved. I just put back my personal API credentials and re-authorized and the issue came back. I yet again switched back to 'null' (the default stablebit API credentials) and the issue went away again... I wonder if there are higher API limits with the Stablebit credentials.

Maybe @Christopher (Drashna) can shed some light.

-

So the issue has been "fixed". There must be a bug or something, but not sure if it's on Google's side or stablebit.

I deleted the file that I figured it was stuck trying to upload. The file I deleted was about 20GB, and there was over 65GB in queue to upload. As soon as I deleted the 20GB file from my computer (which was on the clouddrive), my upload queue seemed to just resume. It didn't drop by 20GB... It just resumed.

Anyway, everything seems to be totally back to normal.

-

Just now, kird said:

In your image I don't see any sign of the limit having been exceeded. I wouldn't know what else to tell you. Let's wait and see what the developers say; it's an error whose origin needs to be verified, and that's essential to know. I guess your gdrive is not operational now, right?

I can download from the gdrive without issue. I can also upload to the root of the gdrive without problem (manually). When I tried adding a file to the clouddrive content folder manually (via the gdrive web interface), it fails.

It looks like there is an issue with the content folder... Maybe @Alex or @Christopher (Drashna) can help.

-

1 hour ago, kird said:

That information gives you the error we talked about but it doesn't show anything of the total files/folders contained in a root folder. At least I can't see it.

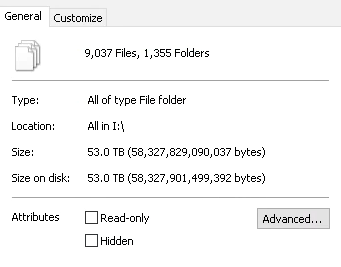

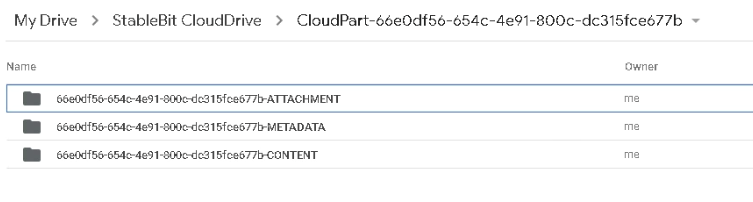

When I say look at your locally mounted unit (it's decrypted) I mean this. As you can see in the image it's still far away from the 500,000

BTW, I really appreciate the help. Here it is.

I wonder if there is a way of counting the number of chunks in google drive... since that is really what adds up
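One possible way to count them, sketched under assumptions: the Google Drive API v3 `files.list` call can filter by parent folder and returns results in pages, so counting means walking every page. The `drive_fetcher` helper below assumes an already-authorized `service` object from google-api-python-client and a known content folder ID, neither of which comes from this thread. With hundreds of thousands of chunks this means many requests and could run into the same rate limits discussed here.

```python
from typing import Callable, Optional, Tuple

# A page fetcher takes a page token (None for the first page) and returns
# (items_on_this_page, next_page_token_or_None).
PageFetcher = Callable[[Optional[str]], Tuple[int, Optional[str]]]


def count_children(fetch_page: PageFetcher) -> int:
    """Add up the items on every page until there is no next page token."""
    total = 0
    token: Optional[str] = None
    while True:
        items, token = fetch_page(token)
        total += items
        if token is None:
            return total


def drive_fetcher(service, folder_id: str) -> PageFetcher:
    """Build a fetcher for the direct children of one Drive folder.

    `service` is assumed to be a Drive v3 client built elsewhere with
    googleapiclient.discovery.build("drive", "v3", credentials=...).
    """
    def fetch(token: Optional[str]) -> Tuple[int, Optional[str]]:
        response = service.files().list(
            q=f"'{folder_id}' in parents and trashed = false",
            pageSize=1000,
            fields="nextPageToken, files(id)",
            pageToken=token,
        ).execute()
        return len(response.get("files", [])), response.get("nextPageToken")
    return fetch
```

Usage would be something like `count_children(drive_fetcher(service, folder_id))`, where `folder_id` is the ID of the clouddrive content folder.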

-

-

6 hours ago, kird said:

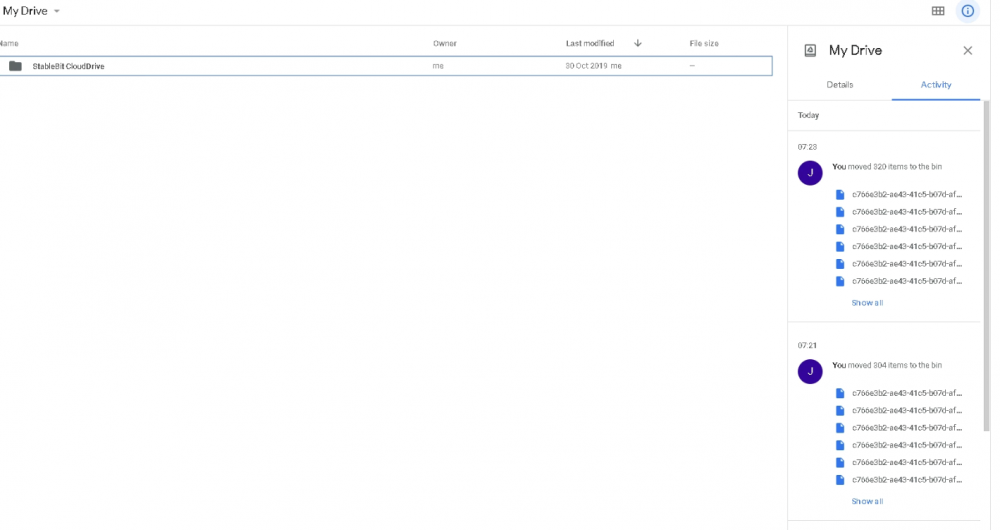

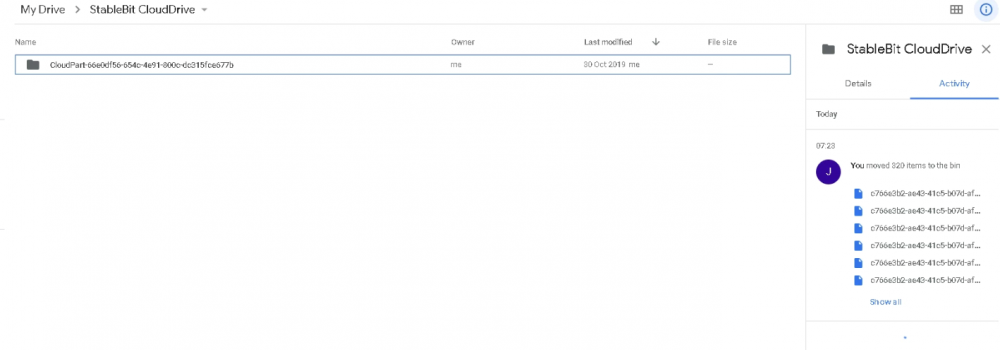

Yes, i was referring logically to the recycle bin of your gdrive account, the windows one here plays no role. As I understand it here the point is not the amount of Gb/Tb in files on the drive but the total number of folders that can be nested in the account.

If I understand correctly, according to this information the error occurs when it reaches 500.000 folders, files... inside a root folder in the drive.

"A numChildrenInNonRootLimitExceeded error occurs when the limit for a folder's number of children (folders, files, and shortcuts) has been exceeded. There is a 500,000 item limit for folders, files, and shortcuts directly in a folder. Items nested in subfolders do not count against this 500,000 item limit."

In that case... there is definitely something wrong... I don't believe I should be anywhere near that as StableBit is the only thing that manages my google drive. You can see in the attachment that my root directory only has one folder, and below that, it's all managed by stablebit clouddrive.

-

6 hours ago, kird said:

Figuring it out, have you deleted the files from the recycle bin? If you have not configured it in a special way, by default the deleted files go there and if they are not deleted they still count for space.

My windows recycling bin? I went there and it is empty. Just in case, I just changed the settings for my cloud drive to permanently be deleted without being stored in the recycling bin.

I also went to my actual Google drive web interface, however, the bin is empty. I'm still having the issue... It's weird, I deleted a bunch of files and still have the issue. I have it set up with 20MB chunks... So my drive would likely have to be humongous to reach the file limit.
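A rough check of that intuition, as a sketch only. It assumes every chunk is stored as one file directly inside a single content folder (not confirmed by StableBit anywhere in this thread), reads "20MB" as 20 MiB, and uses the 500,000-item per-folder figure quoted elsewhere on this page.

```python
ITEM_LIMIT = 500_000           # per-folder item limit quoted in this thread
CHUNK_BYTES = 20 * 1024 ** 2   # 20MB chunk size, read here as 20 MiB (assumption)
TIB = 1024 ** 4


def data_at_limit(chunk_bytes: int = CHUNK_BYTES, item_limit: int = ITEM_LIMIT) -> int:
    """Bytes of data that fit before one folder holds item_limit full chunks."""
    return chunk_bytes * item_limit


def chunks_for(data_bytes: int, chunk_bytes: int = CHUNK_BYTES) -> int:
    """Number of chunks needed for data_bytes, rounding up to whole chunks."""
    return -(-data_bytes // chunk_bytes)


print(f"{data_at_limit() / TIB:.2f} TiB fit under the limit")
print(f"{chunks_for(55 * TIB):,} chunks for 55 TiB of full chunks")
```

Under those assumptions the limit is reached at a little under 10TB of data, so a drive does not need to be humongous to get there; the 55TB mentioned elsewhere on this page would be several times over it.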

-

18 hours ago, kird said:

I know Google Drive has a file and folder limit, but I don't know what the number is. In my case I'm not getting this error for now, but given the large number of folders and files on my drive I don't think I have much room left before I hit it. Good news that our CloudDrive will warn us about that error.

I doubt I hit my file and folder limit. The reason I say that is that I deleted a few folders and their files, and nothing changed. I still get this error and my uploads are hanging.

I'm wondering if this is happening to anyone else...

-

2 hours ago, KiLLeRRaT said:

Hi All,

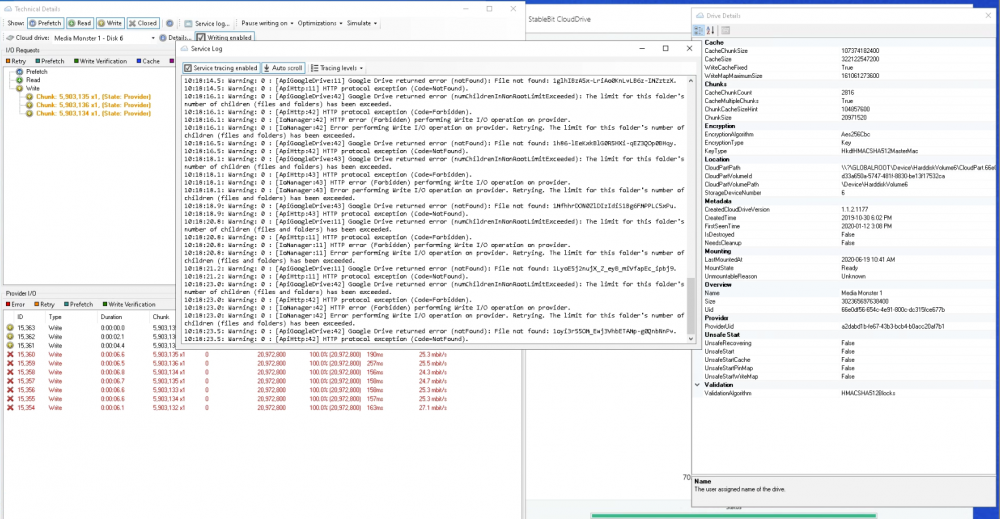

I've recently noticed that my drive has 40GB to be uploaded, and saw there are errors in the top left. Clicking that showed

What's the deal here, and is this going to be an ongoing issue with growing drives?

My drive is a 15TB drive, with 6TB free (so only using 9TB).

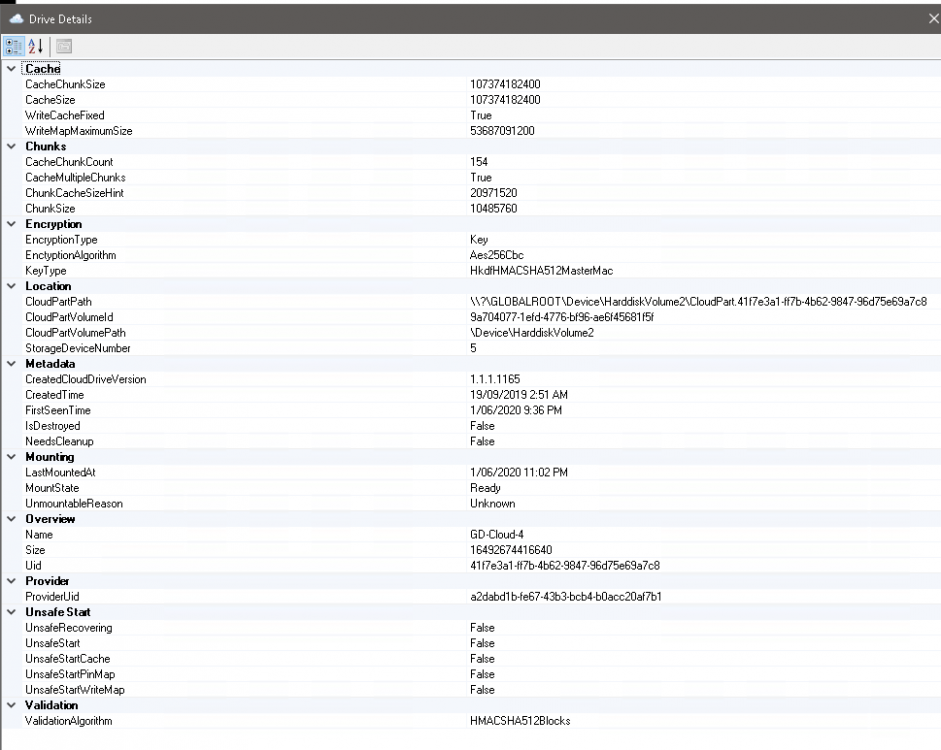

Attached is my drive's info screen.

EDIT: Cloud Drive Version: 1.1.5.1249 on Windows Server 2016.

Cheers,

I just started getting the same thing today. Based on the coincidence of both of us having the issue in the same day, this may be a Google issue.

-

-

2 hours ago, Historybuff said:

HI Srcrist.

Could you help me locate the original post where getting your own google api key is located?

I think i did this about a year ago but wanted to check.

the provider setting should have changed away from default but want to make sure.

Hi @Historybuff,

The wiki is at the following link. http://wiki.covecube.com/StableBit_CloudDrive_Q7941147

I did this a while ago, so I don't remember if there are extra steps, but it's all free and works great. Once you change the key in the json file, you just need to re-authorize the clouddrive you want to use that key.

-

On 8/17/2019 at 12:44 PM, steffenmand said:

Maybe this could be a slow process taking maybe maximum of 100 GB per day or something (maybe a limit you set yourself, where you showcase it would take ~X amount days to finish), with a progress overview. Having maybe 50 TB would be a huge pain to migrate manually, while i would be OK with just waiting months for it to finish properly - ofc. knowing the risk that data could be lost meanwhile as its not duplicated yet

Would also make it possible to decide to change to duplication later on instead of having to choose early on

with Google Drive it does limit the upload per day, so could be nicer to upload first and then duplicate later when you are not uploading anymore

Another good feature could also just be an overview of how much you uploaded/downloaded from a drive in a single day - could be great to monitor how close you are to the upload limit on google drive drives

Those are all awesome ideas!
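To put a number on the "~X days" estimate suggested in the quote, a tiny sketch using the figures quoted there (50 TB to migrate, a self-imposed cap of 100 GB per day), with TB and GB taken as decimal units. The function name is made up for illustration.

```python
import math


def days_to_migrate(total_gb: float, gb_per_day: float) -> int:
    """Whole days needed to move total_gb at a fixed daily cap."""
    if gb_per_day <= 0:
        raise ValueError("gb_per_day must be positive")
    return math.ceil(total_gb / gb_per_day)


# 50 TB = 50,000 GB at 100 GB per day.
print(days_to_migrate(50_000, 100), "days")
```

That is well over a year at the quoted cap, which is why a progress overview would matter for a background migration like this.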

-

I also just reverted to 1145. I'm sure Alex is working on it. Looks like a lot of features were added. I especially liked being able to duplicate pinned data only on existing pools... gives them a layer of protection while I re-upload the whole drive to a fully duplicated cloud drive.

I was having issues with uploading and downloading in 1165. I never noticed it when accessing the files, but there were always critical errors in the dashboard...

Also, my uploads were not stable for some reason, always jumping around in speed and never stable at 70Mbps... 1145 it is until the team addresses some of the issues.

Thanks @Christopher (Drashna) for the tip on reverting to 1145!!

-

GSuite RIP, No More Unlimited (in Providers)

Another question is, does anyone have another provider comparable to or better than gdrive in terms of costs and quotas?