Thronic

Members

Posts: 67

Days Won: 1

Thronic last won the day on September 8, 2018

Thronic had the most liked content!

Recent Profile Visitors

27234 profile views

Thronic's Achievements: Advanced Member (3/3)

Reputation: 4

-

Thronic reacted to an answer to a question:

Do you duplicate everything?

-

Thx for taking the time to reply, Chris. All drives were in perfect SMART condition and had a full zero pass before use to trigger any potential issues. I used the term e-waste loosely because it's what many of my colleagues call drives that size lately... I do recovery and cloning professionally, so I have access to many used, but not damaged, low-capacity drives. This also gives me the special benefit of being able to create mechanical damage on purpose while a drive is spinning, so I can see how the systems react to real SMART problems. Thanks for mentioning your own preferences. There are of course things (tunables) I would need to study more if using GNU/Linux-based software RAID, but I've used DrivePool and Scanner for so many years that I just trust certain things by now. For example, the Scanner is not only a very adamant monitoring tool, it will also tell you exactly which files were affected by a pending sector that isn't recoverable. That can mean the difference between replacing all the data on a drive and replacing a single file. You're absolutely right, simplicity goes a very long way. Still a big fan of StableBit, even if I consider other waters once in a while.
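Reporting which file a bad sector belongs to boils down to mapping a sector address onto the file system's allocation. A minimal Python sketch of that arithmetic, assuming 512-byte sectors, 4 KiB clusters, a known partition start LBA, and a hypothetical in-memory list of file extents (a real tool would read these from NTFS's own allocation data). This only illustrates the idea; it is not how StableBit Scanner is implemented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Extent:
    """A run of contiguous clusters belonging to one file (hypothetical model)."""
    path: str
    start_cluster: int  # first cluster number of the run on the volume
    length: int         # number of clusters in the run


def lba_to_cluster(lba: int, partition_start_lba: int,
                   sector_size: int = 512, cluster_size: int = 4096) -> Optional[int]:
    """Convert an absolute disk LBA to a cluster number on the volume.

    Returns None if the sector lies before the partition start.
    """
    if lba < partition_start_lba:
        return None
    byte_offset = (lba - partition_start_lba) * sector_size
    return byte_offset // cluster_size


def file_for_lba(lba: int, partition_start_lba: int, extents: list[Extent],
                 sector_size: int = 512, cluster_size: int = 4096) -> Optional[str]:
    """Return the path of the file whose extent covers the given LBA, if any."""
    cluster = lba_to_cluster(lba, partition_start_lba, sector_size, cluster_size)
    if cluster is None:
        return None
    for ext in extents:
        if ext.start_cluster <= cluster < ext.start_cluster + ext.length:
            return ext.path
    return None  # free space, or metadata not described by the extent list


if __name__ == "__main__":
    extents = [Extent("movie.mkv", start_cluster=1000, length=500),
               Extent("notes.txt", start_cluster=2000, length=1)]
    print(file_for_lba(11648, 2048, extents))  # prints movie.mkv
```

With the partition starting at LBA 2048, LBA 11648 is 9600 sectors in, i.e. byte 4,915,200, which is cluster 1200 and falls inside the first sample extent. Only that one file would need restoring, not the whole drive.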

-

Thronic started following Plex buffers when nzbget is downloading to pool, BSOD From covefs.sys, Do you duplicate everything? and 7 others

-

PROCESS_NAME: Duplicati.Serv

It was probably doing some reading and/or writing on your pool, and by extension covefs.sys operations. c0000005 (STATUS_ACCESS_VIOLATION) at covefs.sys+0x1ad90 means the driver tried to access memory that is either protected or not mapped. Maybe Duplicati is one of those low-level tools that do special NTFS tricks. I would run a memtest while waiting for an official answer.
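The exception code itself can be read with a bit of bit arithmetic. A small Python sketch based on the NTSTATUS layout in Microsoft's [MS-ERREF] specification (2 severity bits, a customer bit, a reserved bit, 12 facility bits, 16 code bits); the lookup table only lists the one code from this dump. Mapping the offset 0x1ad90 to an actual function inside covefs.sys would need the driver's symbols, which this does not attempt.

```python
# Only the code from this crash dump is named; anything else decodes as "unknown".
KNOWN = {0xC0000005: "STATUS_ACCESS_VIOLATION"}

SEVERITY = {0: "success", 1: "informational", 2: "warning", 3: "error"}


def decode_ntstatus(code: int) -> dict:
    """Split a 32-bit NTSTATUS value into its bit fields."""
    return {
        "name": KNOWN.get(code, "unknown"),
        "severity": SEVERITY[(code >> 30) & 0x3],  # bits 31-30
        "customer": bool((code >> 29) & 0x1),      # bit 29: set for non-Microsoft codes
        "facility": (code >> 16) & 0xFFF,          # bits 27-16
        "code": code & 0xFFFF,                     # bits 15-0
    }


if __name__ == "__main__":
    print(decode_ntstatus(0xC0000005))
```

For 0xC0000005 this gives an error-severity, Microsoft-defined status in facility 0 with code 5, i.e. a plain access violation rather than anything file-system specific.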

-

Shane reacted to an answer to a question:

Do you duplicate everything?

-

Thx for replying, Shane. Having played around with Unraid for the last couple of weeks, I like the ZFS raidz1 performance when using pools instead of the default array. Using 3x 1 TB e-waste drives lying around, I get ~20-30 MB/s write speed on the default Unraid array with single parity. This increases to ~60 MB/s with turbo write (reconstruct write). It all tanks to ~5 MB/s if I run a parity check at the same time, in contrast to people on /r/DataHoarder claiming it will have no effect. I'm not sure the flexibility is worth the performance hit for me, and I don't want to use cache/mover to make up for it. I want storage I can write directly to in real time. Using a raidz1 vdev of the same drives, also in Unraid, I get a consistent ~112 MB/s write speed, even when running a scrub at the same time. I then tried raidz2 with the same drives, just to have done it: same speedy result, which impressed me quite a bit. This is over SMB from a Windows 11 client, without any tweaks, tuning or modifications. If I park the mindset of using DrivePool duplication as a middle road to avoid needing backups (purely from a saving-money standpoint), parity becomes a lot more interesting, because then I'd want a backup anyway, given the inherently more dangerous nature of striping, where all data relies on the whole array. Take DDDDDDPP DDDDDDPP (D = data, P = parity; two raidz2 rows of 8 drives, row 1 = main pool, row 2 = backup). Parity would cost just 25% of raw capacity (4 of 16 drives) and I'd still have a backup, with even the backup having parity. Critical things would also go to a third-party cloud provider somewhere. The backup could be located anywhere, be incremental, etc. Compared with a single duplicated DrivePool without backups (row 1 = main files, row 2 = duplicates, 8 drives of unique data), it costs 25% of the usable capacity (6 drives of unique data instead of 8). Kinda worth it perhaps, but also more complex.
If I stop viewing duplication in DrivePool as a middle road for not having backups at all and convince myself I need complete backups as well, the above is rather enticing if I want to keep some kind of uptime redundancy, even if I need to plan for multiple drives, sizes, etc. On the other hand, with ZFS all drives would be very active all the time, and I haven't quite made up my mind about that yet.
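The two 25% figures can be checked with a few lines of arithmetic. This Python sketch assumes 16 equal-size drives, used either as two 8-drive raidz2 rows (row 2 holding a backup of row 1) or as one DrivePool with 2x duplication and no backup. It ignores ZFS metadata, padding and reserved space, so real usable capacity would be somewhat lower.

```python
from fractions import Fraction

DRIVES_PER_ROW = 8   # one row = "DDDDDDPP"
PARITY_PER_ROW = 2   # raidz2
ROWS = 2             # row 1 = main pool, row 2 = backup of row 1

raw_drives = DRIVES_PER_ROW * ROWS  # 16 drives in total

# Layout A: two raidz2 rows, the second being a backup of the first.
parity_share = Fraction(PARITY_PER_ROW * ROWS, raw_drives)  # share of raw capacity spent on parity
unique_a = DRIVES_PER_ROW - PARITY_PER_ROW                 # drives' worth of unique data

# Layout B: the same 16 drives as one DrivePool with 2x duplication, no backup.
unique_b = Fraction(raw_drives, 2)                         # drives' worth of unique data

# Usable capacity layout A gives up relative to layout B.
relative_cost = 1 - Fraction(unique_a, unique_b)

print(f"parity share of raw capacity: {parity_share}")
print(f"unique data, raidz2 + backup: {unique_a} drives")
print(f"unique data, duplicated pool: {unique_b} drives")
print(f"capacity cost vs duplication: {relative_cost}")
```

Both framings come out at 1/4: parity takes 4 of 16 raw drives, and the parity-plus-backup layout keeps 6 drives of unique data where the duplicated pool keeps 8.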

-

Thronic reacted to an answer to a question:

Do you duplicate everything?

-

In other words, do you use file duplication on entire pools? And if so, what makes the 50% capacity cost worth it to you? I'm just procrastinating a little... I want some redundancy, but DrivePool has no parity option (I have no interest in the manual labor and handholding SnapRAID requires, and Storage Spaces is not practical). So basically, if I want parity, I'd split the server role into another machine (I'm not interested in a virtualized setup) and dedicate the case holding the drives to something like Unraid as a dedicated NAS, nothing else. Unraid also has the benefit of only losing data on the damaged drives if parity should fail. SnapRAID and Unraid, as far as I know, are the only parity solutions with that advantage. This matters to me because I can't really afford a complete backup set of drives for everything I will store, at least not if I'm already doing mirroring/parity, so I keep drifting back to simple pool duplication as a somewhat decent and comfortable middle road against loss (the most important things are backed up in other ways). I would offset some of the capacity cost by using aggressive HEVC/H.265 compression on media via automation tools I've already created, and perhaps move towards AV1 in the future when it's better supported.
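Why a non-striped parity setup only loses the damaged drives can be shown with plain XOR parity. A minimal Python sketch, assuming single XOR parity over equal-size blocks (a simplification of how single parity is usually described for arrays like Unraid's; real arrays do this per sector across whole disks). One lost drive can be rebuilt from parity; if parity is gone as well, every surviving drive still holds complete files of its own. With striping, each file is spread across drives, so losing more drives than parity covers damages files everywhere.

```python
from functools import reduce


def xor_blocks(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length blocks."""
    if len(a) != len(b):
        raise ValueError("blocks must be the same length")
    return bytes(x ^ y for x, y in zip(a, b))


def parity_of(blocks: list[bytes]) -> bytes:
    """Single parity: XOR of the block at the same position on every data drive."""
    return reduce(xor_blocks, blocks)


def rebuild_missing(surviving: list[bytes], parity: bytes) -> bytes:
    """Recover the one missing data block from the surviving blocks plus parity."""
    return reduce(xor_blocks, surviving, parity)


# Three data drives, each holding its own complete files (no striping).
drives = {"disk1": b"movies..", "disk2": b"photos..", "disk3": b"music..."}
parity = parity_of(list(drives.values()))

# One drive lost: parity plus the other drives rebuild it.
rebuilt = rebuild_missing([drives["disk1"], drives["disk3"]], parity)

# Parity AND disk2 lost: disk2's data is gone, but disk1 and disk3 still hold
# their own complete files, readable without the rest of the array.
still_readable = {name: data for name, data in drives.items() if name != "disk2"}
print(rebuilt == drives["disk2"], sorted(still_readable))
```

The design point is that parity here is only a recovery aid layered over independent drives, so its failure never makes the other drives' files unreadable.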

-

My own go-to for copying data off damaged drives (pending sectors) is ddrescue, a GNU/Linux tool available on pretty much any live distro:

`ddrescue -r3 -n -N -v /dev/sda /dev/sdb logfile.log -f`

See https://www.gnu.org/software/ddrescue/manual/ddrescue_manual.html#Invoking-ddrescue for what each option does. Mind the argument order: the first device is the source and the second is the destination, which gets overwritten (that is why -f is needed). It has a better chance of retrieving data from bad sectors than a regular imaging tool, and it's nice to have visual statistics of exactly how much was rescued. All you need is a drive of equal or larger size to image to, as it's a sector-by-sector rescue attempt. If there are no pending sectors and just reallocations, you should be able to copy the files manually using any file explorer or copy method, really.
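The rescue statistics can also be recomputed later from the mapfile (the logfile.log argument, which newer versions of the manual call the mapfile). A small Python sketch based on the "Mapfile structure" section of the GNU ddrescue manual: comment lines start with `#`, the first data line is the current position/status, and every following line is `pos size status`. The sample mapfile below is made up for illustration; ddrescue's own display remains the authoritative view.

```python
from collections import Counter

# Block status characters from the ddrescue manual.
STATUS_NAMES = {
    "?": "non-tried",
    "*": "non-trimmed",
    "/": "non-scraped",
    "-": "bad-sector",
    "+": "finished",
}


def summarize_mapfile(text: str) -> Counter:
    """Total bytes per block status in a ddrescue mapfile."""
    totals: Counter = Counter()
    status_line_seen = False
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip comments and blank lines
        if not status_line_seen:
            # First non-comment line is current_pos/current_status(/current_pass), not a block.
            status_line_seen = True
            continue
        _pos, size, status = line.split()[:3]
        totals[STATUS_NAMES.get(status, status)] += int(size, 0)  # base 0 accepts "0x..." hex
    return totals


def rescued_fraction(totals: Counter) -> float:
    """Share of the mapped area that ddrescue marked as finished."""
    total = sum(totals.values())
    return totals["finished"] / total if total else 0.0


# Made-up sample in the documented format.
SAMPLE = """\
# Mapfile. Created by GNU ddrescue
# current_pos  current_status  current_pass
0x00120000     ?               1
#      pos        size  status
0x00000000  0x00117000  +
0x00117000  0x00000200  -
0x00117200  0x00001000  /
0x00118200  0x00007E00  *
0x00120000  0x07FE0000  ?
"""

if __name__ == "__main__":
    totals = summarize_mapfile(SAMPLE)
    for status, size in totals.items():
        print(f"{status:>12}: {size} bytes")
    print(f"rescued: {rescued_fraction(totals):.2%}")
```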

-

Thronic reacted to an answer to a question:

How to idle drives

-

Thronic reacted to an answer to a question:

Did StableBit go out of business? (I hope not)

-

Can someone make a complete summary of all the settings needed to make, e.g., Windows Server 2025 let inactive drives spin down after a set idle time? I've always kept my drives spinning, but as I get more and more of them I'm beginning to think I could allow spin-down to save some power over time. The server is in a storage room that will always be between 15 °C (winter) and 30 °C (summer). I'll be using the latest stable DrivePool and Scanner.
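Only the Windows power-plan part can be sketched with any confidence here. The plan's "Turn off hard disk after" timeout is reachable through powercfg's SUB_DISK / DISKIDLE aliases and is stored in seconds. This Python sketch builds (and by default only prints) those commands, assuming an AC-powered server using its currently active plan; running them for real may need an elevated prompt. It does not cover controller or drive firmware behavior, or DrivePool/Scanner settings that may keep drives awake.

```python
import subprocess


def disk_idle_commands(minutes: int) -> list[list[str]]:
    """powercfg calls that set 'Turn off hard disk after' on the active plan (AC power)."""
    if minutes < 0:
        raise ValueError("minutes must be >= 0 (0 means never)")
    seconds = minutes * 60  # DISKIDLE is expressed in seconds
    return [
        ["powercfg", "/setacvalueindex", "SCHEME_CURRENT", "SUB_DISK", "DISKIDLE", str(seconds)],
        ["powercfg", "/setactive", "SCHEME_CURRENT"],  # re-apply the plan so the change takes effect
    ]


def apply(commands: list[list[str]], dry_run: bool = True) -> None:
    """Print the commands, or run them on Windows when dry_run is False."""
    for cmd in commands:
        if dry_run:
            print(" ".join(cmd))
        else:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    apply(disk_idle_commands(20))  # dry run: prints the two powercfg commands
```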

-

A lot of open-ended threads as well, some pretty serious. Maybe they're getting a bit overwhelmed; there are only two of them, after all, AFAIK.

-

Thronic reacted to an answer to a question:

Beware of DrivePool corruption / data leakage / file deletion / performance degradation scenarios Windows 10/11

-

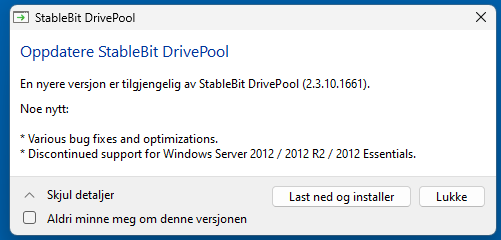

Thronic reacted to an answer to a question:

2012R2 no longer supported.

-

Guess that's that then, pretty much. It's a compromise made to keep every drive intact with its own NTFS volume, emulating NTFS on top of NTFS. I keep thinking I'd prefer my pool to sit at block level, between the hardware layer and the file system. We'd lose the ability to access drives individually via direct NTFS mounting outside the pool (which I guess is important to the developer and at least to the initial users), but it would be a true NTFS on top of the drives, formatted normally and with full integrity (whatever optional behavior NTFS actually uses). Any developer could then lean on experience gained on any real NTFS volume and get the same behavior here. Without striping across drives, the virtual drive could easily place entire file byte arrays on individual drives without splitting them. Drives would then still not depend on each other to recover data, unlike typical RAIDs: one could read whatever is on a given drive through the virtual drive by checking whatever proprietary journal data one designs to attach to each file at block level. I'd personally prefer something like that, at least at a glance when just thinking about it. I'm pretty much in the @JC_RFC camp on this. Thanks, all, for posting updates on this.
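A toy Python model of that block-level idea, under heavy assumptions: a hypothetical virtual block device that never stripes, places each file's blocks contiguously on one member drive, and keeps a small journal entry per file so that each drive's contents can be identified on their own. Every name here (Drive, JournalEntry, BlockPool), the bump allocator and the "most free space" placement rule are illustrative inventions, not how DrivePool or any real product works.

```python
from dataclasses import dataclass, field


@dataclass
class Drive:
    name: str
    total_blocks: int
    next_free: int = 0  # simple bump allocator; no fragmentation handling

    @property
    def free_blocks(self) -> int:
        return self.total_blocks - self.next_free


@dataclass
class JournalEntry:
    """Hypothetical per-file record that would be stored alongside the data."""
    file_name: str
    drive: str
    start_block: int
    length: int


@dataclass
class BlockPool:
    drives: list[Drive]
    journal: list[JournalEntry] = field(default_factory=list)

    def place(self, file_name: str, blocks: int) -> JournalEntry:
        """Put the whole file on the single drive with the most free space."""
        candidates = [d for d in self.drives if d.free_blocks >= blocks]
        if not candidates:
            raise OSError(f"no single drive can hold {file_name} ({blocks} blocks)")
        target = max(candidates, key=lambda d: d.free_blocks)
        entry = JournalEntry(file_name, target.name, target.next_free, blocks)
        target.next_free += blocks
        self.journal.append(entry)
        return entry

    def files_on(self, drive_name: str) -> list[str]:
        """What a recovery tool could read back from one drive on its own."""
        return [e.file_name for e in self.journal if e.drive == drive_name]


if __name__ == "__main__":
    pool = BlockPool([Drive("d1", 100), Drive("d2", 80)])
    for name, size in [("a.mkv", 60), ("b.mkv", 50), ("c.txt", 10)]:
        print(pool.place(name, size))
    print(pool.files_on("d1"))
```

The trade-off shows up immediately: because files are never split, a file larger than any single drive's free space cannot be placed even when the pool as a whole has room.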

-

Thanks, @MitchC. Maybe they could pass through the underlying FileID and changed/unchanged attributes from the drives where the files actually are; they are on a real NTFS volume, after all. Trickier with duplicated files, though... Just a thought, I won't pretend I have any solutions to this, but it has certainly caught my attention going forward. Does using CloudDrive on top of DrivePool have any of these issues? Or does that act as a true NTFS volume?
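To make the passthrough idea concrete, here is a purely hypothetical Python sketch of how a pool could derive an ID by combining which member drive holds a file with that drive's own 64-bit NTFS file ID. Windows does support 128-bit file IDs (FILE_ID_128, returned via FILE_ID_INFO), so a 64-bit drive index plus a 64-bit NTFS ID would fit. Everything else (function names, the "lowest drive index wins" rule for duplicates) is an assumption for illustration, and it shows rather than solves the duplication problem.

```python
MASK_64 = (1 << 64) - 1


def pool_file_id(drive_index: int, underlying_id: int) -> int:
    """Hypothetical 128-bit pool ID: drive index in the high 64 bits, NTFS file ID in the low 64."""
    if not 0 <= underlying_id <= MASK_64:
        raise ValueError("underlying NTFS file ID must fit in 64 bits")
    if not 0 <= drive_index <= MASK_64:
        raise ValueError("drive index must fit in 64 bits")
    return (drive_index << 64) | underlying_id


def split_pool_file_id(pool_id: int) -> tuple[int, int]:
    """Inverse of pool_file_id: returns (drive_index, underlying_id)."""
    return pool_id >> 64, pool_id & MASK_64


def id_for_duplicated(copies: dict[int, int]) -> int:
    """Duplicated file: derive the ID from the copy on the lowest drive index.

    Caveat this sketch does not solve: if that copy is moved or its drive is
    removed, the ID changes, which is exactly what FileID-based software
    does not expect.
    """
    primary = min(copies)
    return pool_file_id(primary, copies[primary])


if __name__ == "__main__":
    before = id_for_duplicated({2: 10, 5: 20})
    after = id_for_duplicated({5: 20})  # drive 2 removed, only the copy on drive 5 remains
    print(hex(before), hex(after), before == after)
```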

-

Thronic reacted to an answer to a question:

Beware of DrivePool corruption / data leakage / file deletion / performance degradation scenarios Windows 10/11

-

Shane reacted to an answer to a question:

Beware of DrivePool corruption / data leakage / file deletion / performance degradation scenarios Windows 10/11

-

As I interpreted it, the first is largely caused by the second. Interesting. I won't consider that critical, for me, as long as it produces a graceful user-space error and doesn't cause covefs to BSOD. That's kind of my point: hoping and projecting what should be done doesn't help anyone or anything. Correctly emulating a physical volume with exact NTFS behavior would. I strongly want to add that I mean no attitude or condescension here, but I don't want to use unclear words either; I'm just aware of how it may come across online. As a programmer who has worked with the Win32 API for a few years (though never on virtual drive emulation), I can appreciate how big a change it would be to make now. I assume DrivePool was originally meant only for reading and writing media files, and when a project has come as far as this one, I can respect that getting to a perfect emulation is a major undertaking, in addition to mapping strict, proprietary NTFS behavior in the first place. It's just a particularly hard case of figuring out which hardware is bugging out. I never overclock or overvolt in the BIOS; I'm very aware of the pitfalls, and also that some motherboards may do so by default, and it's been checked. If it were a kernel-space driver problem, I'd always be getting a BSOD and minidumps. But as the hardware freezes and/or turns off... it smells like a hardware issue. RAM is perfect, so I'm suspecting the motherboard or PSU. First I'll see if I can replicate it at will; at that point I'd be able to push load inside and outside the pool to see if DrivePool matters at all. But this is my own problem... sorry for mentioning it here. Thank you for taking the time to reply on a weekend. It is what it is, I suppose.

-

Gonna wake this thread because I think it may still be really important. Am I right in understanding that this entire thread mainly revolves around something that is probably only an issue when using software that monitors and acts on files based on their FileID? Could it be that Windows apps are doing this? Quote from Drashna in the XBOX thread: "The Windows apps (including XBOX apps) do some weird stuff that isn't supported on the pool." It seems weird to me: why take the risk of not doing whatever NTFS would normally do, or is the exact behavior proprietary and not documented anywhere? I only have media files on my pool, and have had since 2014 without apparent issues. But this still worries me slightly; who's to say, e.g., Plex won't suddenly start using FileID and expect the consistency you get from real NTFS? The only issue I had once was rclone complaining about timestamps, but I think that was related to read striping. I have had server crashes lately during heavy Sonarr/NZBGet activity. No memory dumps or system event log entries are created, so it's hard to troubleshoot, especially when I can't seem to trigger it on demand. The entire server usually freezes up, with no response on USB ports or RDP, but the machine often keeps spinning its fans until I hard reset it. Only once has it died entirely. I suspect something hardware related, but these things keep gnawing at my mind... what if covefs... I always thought it was exact NTFS emulation. I haven't researched DrivePool in recent years, but now I keep finding threads like this... It seems to me we may have to be careful about what we use the pool for, and that it's conceptually dangerous not to follow strict NTFS behavior. Who's to say Windows won't one day start using FileID for some kind of attribute or journaling background maintenance and cause mayhem on its own?
If FileID is the path of least resistance in terms of monitoring and dealing with files, we can only expect more and more software and low level API using it. This is making me somewhat uneasy about continuing to use DrivePool.
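One way to check this on a specific pool rather than guessing is to record file IDs and compare them over time, or across a rename. A minimal Python sketch, assuming that os.stat()'s st_ino reflects the file ID the volume reports (on Windows, Python fills it from the file index). The rename check temporarily renames the file it is given, so point it at a scratch file, not at data you care about.

```python
import tempfile
from pathlib import Path


def snapshot_ids(root: Path) -> dict[str, int]:
    """Map each file under root (relative path) to the file ID os.stat() reports."""
    return {
        str(p.relative_to(root)): p.stat().st_ino
        for p in root.rglob("*")
        if p.is_file()
    }


def changed_ids(before: dict[str, int], after: dict[str, int]) -> list[str]:
    """Paths present in both snapshots whose file ID changed."""
    return sorted(p for p in before.keys() & after.keys() if before[p] != after[p])


def id_survives_rename(path: Path) -> bool:
    """Rename a scratch file, compare IDs, then rename it back.

    On a plain NTFS (or ext4) volume a same-directory rename keeps the ID.
    """
    before = path.stat().st_ino
    renamed = path.with_name(path.name + ".renamed")
    path.rename(renamed)
    try:
        return renamed.stat().st_ino == before
    finally:
        renamed.rename(path)


if __name__ == "__main__":
    # Demo on a temporary directory; use a scratch folder on the pool to test the pool itself.
    with tempfile.TemporaryDirectory() as tmp:
        scratch = Path(tmp) / "scratch.txt"
        scratch.write_text("test")
        print("ID kept after rename:", id_survives_rename(scratch))
```

Taking snapshot_ids() of a scratch folder, doing some normal activity (copies, renames, rebalancing), and then running changed_ids() on a second snapshot would show whether IDs stay put the way they do on a single NTFS volume.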

-

Did you try turning read striping off?

-

Thronic reacted to an answer to a question:

Plex buffers when nzbget is downloading to pool

-

Thanks so much for reminding me. I had entirely forgotten about "Bypass file system filters"; I used to have that on, and I remember now that it had a good effect. I don't think I had "Network I/O boost" on, but I'm turning that on as well; it seems safe if it's just a read priority thing.

-

If I'm watching something on Plex and, e.g., Sonarr or Radarr has downloaded something, I get heavy buffering once it gets copied to the pool, even with just me watching. Is there anything I can turn off to make DrivePool a bit faster/more responsive? I'm running a pretty much default, fresh install on Windows 11 Pro. Or are we in SSD cache territory?