cocksy_boy

Members

Posts: 34

Profile Information

Gender: Not Telling

cocksy_boy reacted to an answer to a question:

Drive Pool 2.3.10.1661_x64 on WHS2011 - Failing to start.

-

gtaus reacted to an answer to a question:

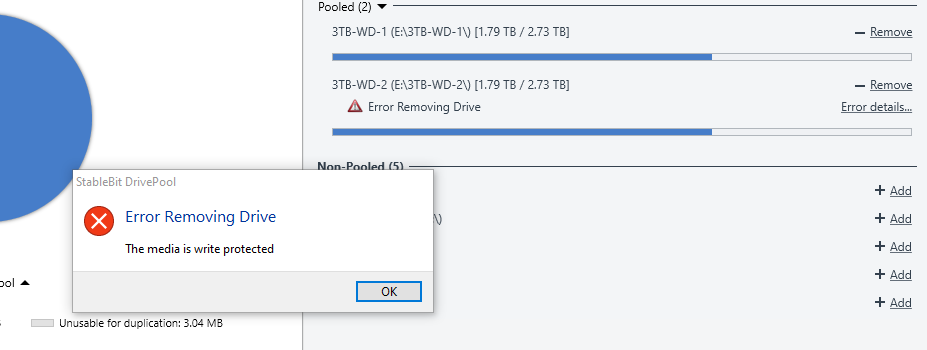

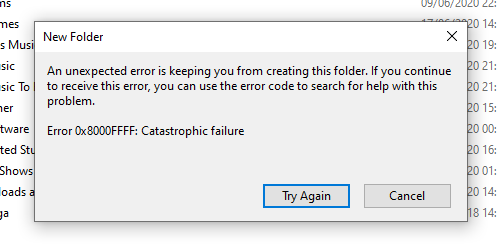

Cannot write to pool "Catastrophic Failure (Error 0x8000FFFF)", cannot remove disk from pool "The Media is Write Protected"

-

Shane reacted to an answer to a question:

Cannot write to pool "Catastrophic Failure (Error 0x8000FFFF)", cannot remove disk from pool "The Media is Write Protected"

-

cocksy_boy reacted to an answer to a question:

Forced out of FlexRaid Transparent raid. Coming to Drivepool + Snapraid, need some infos.

-

Forced out of FlexRaid Transparent raid. Coming to Drivepool + Snapraid, need some infos.

cocksy_boy replied to astuni's question in General

I'm no expert, but I don't think you need or want to stop the DrivePool service when copying / moving all the files to the hidden folder on each drive (as you've written in step 2). I would have thought you set your settings for duplication, balancing, etc., then move the folders with the service running. But I may be wrong! I have a couple of simple duplicating pools and I'm thinking of adding SnapRAID as well, but I'm not 100% convinced yet. I'd much rather have an integrated system, but I don't think there is one.

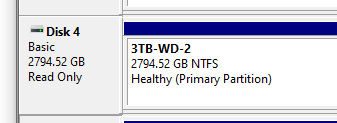

Hi All, I had a really odd error that has left one of my pools not working correctly. I shut my system down as normal, and when I brought it back up, DrivePool sent me an error saying a disk was missing. The disk appeared in Disk Management but was not initialised. So I shut down, checked the connections, and rebooted a couple of times, and the drive came back up; DrivePool found it and subsumed it back into the pool. However, whenever I try to write to the pool, or to the individual drive that disappeared and came back, I get the Windows error: Catastrophic Failure (Error 0x8000FFFF). Googling this wasn't much help, so I thought I'd remove the drive from the pool and re-add it, which is when DrivePool came up with the error: "The Media is Write Protected". The other drive in the pool is fine, but I can't do anything in the pool or on the other drive. Any ideas how to resolve this?

-

Is there a way to conduct a File System Health scan / check only?

cocksy_boy replied to cocksy_boy's question in General

Perfect, thank you!

Tagged with: file system damaged, file system (and 1 more)

-

cocksy_boy reacted to an answer to a question:

Is there a way to conduct a File System Health scan / check only?

-

Is there a way to conduct a File System Health scan / check only?

cocksy_boy posted a question in General

As per the title, I ran a scan and it came up with a "file system damaged" error on one of my disks. After a lot of work with chkdsk, I think I've fixed it (or at least chkdsk no longer reports any errors), so I'd like to run a file system scan within Scanner, but not a full surface / sector scan if possible (to save time). Is this possible at all, or is the only option to do the whole lot? TIA.

-

@Christopher (Drashna) the crashed interface was in the standalone UI. When it happened the second time, I looked in the WHS Dashboard and Scanner seemed to be working fine there, but the main Scanner standalone UI / app had frozen.

-

Hi @Christopher (Drashna), yeah, it's version 2.5.6.3336. I've done it and added the hyperlink to this thread as the request link / ID.

-

Oddly, the UI of the standalone app has crashed again in WHS 2011. However, if I go to the WHS Dashboard, the same UI screen is duplicated in one of the tabs, and it works correctly there, with no issues at all. I wonder if there is some kind of issue between the WHS "server" Dashboard and the main UI for the app?

-

Yeah, that's exactly how mine was. After a reboot, it seems absolutely fine. I didn't kill the Scanner dashboard in Task Manager; I just rebooted the WHS.

-

Yeah, I ended up rebooting and it all seemed fine afterwards. It showed that all the disks had been scanned, so I'm not sure what caused the GUI to crash.

-

Hi All, I installed Scanner to check the drives on my WHS last night and set it running when I went to bed. This morning I RDP'd in and it had completed a couple of drives with a few more to go; however, the UI appears to have completely frozen / crashed. The server is still functioning OK, but none of the buttons / interface / Scanner screen seem to do anything, and I can't minimise / maximise / close the window. Task Manager says Scanner is "running", not crashed / not responding. In Resource Monitor, Scanner.Service.exe is doing lots of disk reads, so the scan appears to be progressing, but I can't do anything in the interface / window at all. Any ideas?

It's probably worth mentioning that WHS is running in a VM on ESXi, and some of the drives are passed through while others are virtual drives. Some of the drives being scanned are virtual (so pointless to scan), and some are passed through. The reason I want to use the interface to stop the scan is to stop scanning the virtual drives and tell it to scan only the physical drives that are passed through!

-

Scanner and DrivePool on W10 Server not logged in

cocksy_boy replied to cocksy_boy's question in General

Thank you, that's really useful to know!

Scanner and DrivePool on W10 Server not logged in

cocksy_boy replied to cocksy_boy's question in General

Thanks!

Scanner and DrivePool on W10 Server not logged in

cocksy_boy replied to cocksy_boy's question in General

Thanks @Spider99. For the second point, I presume I'd need an additional set of licences for each program on the "client" to check the "server" remotely?

Hi All, I have DP installed on my WHS2011 home server machine, but am planning to migrate the server to W10 instead. I currently don't run Scanner, but am thinking about adding it to my W10 system after the migration. My question is: I plan to run W10 as a server without a user logged in (as I understand that is better from a security perspective), so I want to check whether both Scanner and DP will load and work on boot, before a user logs in. Secondly, is there any way to remotely access any kind of status information for either DP or Scanner without having to remote desktop into the W10 server? Thanks in advance!