irepressed

Posts: 19

Posts posted by irepressed

-

2 minutes ago, Christopher (Drashna) said:

In your case, it may be worth setting the cache to a fixed size, so that it should never use more than the size specified. This may help out here.

I already did that when it was first suggested. It made no difference whatsoever.

There is nothing left to upload, and nothing has been uploaded in the past week; all data was already in the cloud. Yet something keeps growing at roughly 4 to 5GB per hour. This is a huge problem.

I really don't know what to do anymore other than uninstall the whole StableBit package. I don't know whether it's related to the number of drives (5) or the total uploaded data size (10TB), but it's no use to me if I need to restart my PC every two days. My guess is that even with a dedicated HDD for the cache, I would only delay the issue by having more space to fill. I don't see why it would stop filling my drives after a certain amount.

One last issue with this: in the past few days, I noticed that 11GB of my 16GB of RAM was in use every day, something I had never seen before. Yesterday evening, I decided to stop all StableBit services, set them to Manual, and restart my PC. Since that reboot, with the services off, my total RAM usage has averaged about 4GB. StableBit seems to eat not only all my disk space, but my RAM as well. 7GB of RAM for something so light is too much; it has become a resource hog and I cannot use it as it is.

At this point, it's getting too technical for me and I believe I need to give up. If only we could at least understand what is causing this.

-

I'm changing the subject somewhat, but while we figure this out, is there a way to change the cache location on existing drives? I would like to install another physical drive and put all the caches on it.

-

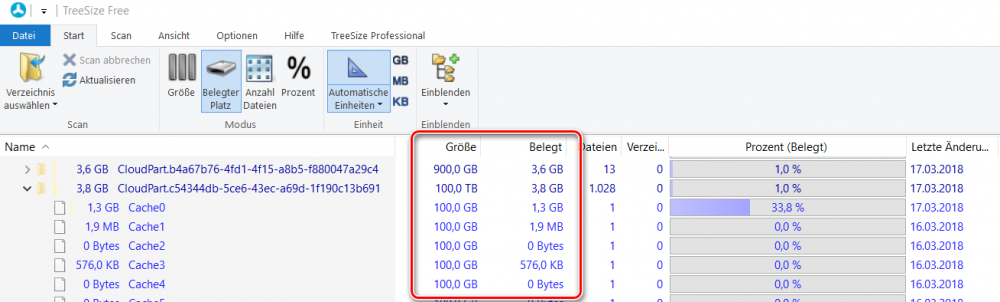

I also did another scan with TreeSize, making sure I ran it as admin with all folders visible.

I got exactly the same result as before the reboot (give or take a few MB here and there). The missing space can't be seen with that kind of utility.

As a side note, the malware/antivirus scan came back negative, as expected.

-

2 hours ago, Christopher (Drashna) said:

Run this both before and after this happens:

fsutil fsinfo ntfsinfo C:

My guess is that you have a very high "Reserved space" when this happens.

If so, there isn't much that we can do, as this is something controlled by Windows, and an issue that we've had in the past (despite the fact that we're not doing anything "weird" here).

OK, I ran the command while my C drive was down to approximately 226GB free, and ran it again after a reboot, which brought my free space back to 296GB.

Here's a screenshot of the only two lines that changed. The first two lines are with 226GB free; lines 3 and 4 are with 296GB free. Everything else was identical.
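The before/after comparison above can be scripted so that only the changed lines are printed. This is a minimal Python sketch, assuming the two outputs of `fsutil fsinfo ntfsinfo C:` were saved to text files from an elevated cmd.exe prompt (for example `fsutil fsinfo ntfsinfo C: > before.txt`, then again into `after.txt` after the reboot); the file names and the helper names are hypothetical.

```python
import sys


def changed_lines(before: str, after: str) -> list[tuple[str, str]]:
    """Return (before, after) pairs for the lines that differ.

    fsutil prints the same fields in the same order on each run, so the two
    outputs are compared line by line; any extra lines in the longer output
    are ignored by zip().
    """
    pairs = zip(before.splitlines(), after.splitlines())
    return [(b, a) for b, a in pairs if b.strip() != a.strip()]


def read_text(path: str) -> str:
    # Output redirected from cmd.exe is plain text; replace any odd bytes.
    with open(path, encoding="utf-8", errors="replace") as handle:
        return handle.read()


if __name__ == "__main__" and len(sys.argv) == 3:
    # Usage: python ntfsinfo_diff.py before.txt after.txt
    for old, new in changed_lines(read_text(sys.argv[1]), read_text(sys.argv[2])):
        print(f"before: {old.strip()}")
        print(f"after:  {new.strip()}")
```

Comparing line by line keeps the output readable for this fixed-layout report; a general-purpose diff (such as Python's `difflib`) would be the safer choice if the line order could change between runs.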

-

2 hours ago, Viktor said:

As far as I understand, a CloudDrive cache folder is a placeholder for the entire filesystem in the cloud. It contains the full set of chunk files, each showing the same "virtual" size (e.g. 100 GB), but only a few are partially filled with cached data (real size). Only the real sizes count toward total disk space usage (see the attached TreeSize example). I have cache folders with more than 100 TB total size on a 500 GB SSD and have never run out of space so far.

I’m wondering why TreeSize doesn’t give you a hint as to where the space is actually being used. Did you run it as Administrator and check normally hidden places like the System Volume Information folder? Is the C drive free of errors? And can you rule out malware?

That's why I'm saying that the "false" reported size is not my issue. Fitting 26TB on a 500GB SSD is already impossible, so I don't think that reported size is what is filling up my C drive.

TreeSize was run as admin, and I do see all hidden files and folders. I'll run a malware scan just to be safe, but I expect everything to be fine. Thanks anyway for the ideas.

-

1 hour ago, Viktor said:

If it’s not the cache folders, you should try to find out which other folders and files are increasingly taking up disk space. I’d recommend using the freeware tool “TreeSize Free” to identify them.

One place other than the cache that I know CloudDrive occasionally writes temporary files into is the folder %PROGRAMFILES%\StableBit\CloudDrive\Data\ReadModifyWriteRecovery. But most of the time this folder should be empty.

That's actually a great idea.

So I installed TreeSize (and also SpaceSniffer) and... drum roll... it's nothing! Hahaha! I just don't understand what's going on. I took a screenshot of SpaceSniffer while the CloudDrive services were running, with 140GB of space left, and then another one a couple of seconds after turning the services off, with the free space back to 280GB. There was no difference whatsoever (well, nothing significant) between the two!

The thing I don't get is that even with the StableBit services off, my C:\CloudPart.* folders still report 26TB of used space. And if we go back to the initial idea that CloudDrive simply reports a false size, how come my C drive still sees its free space shrinking slowly, enough to make my programs crash and generate Windows errors? Shouldn't 26TB on a 500GB SSD show 0 bytes left right from the start? My head is going to explode trying to understand this.
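To check the ReadModifyWriteRecovery folder Viktor mentions (or any other folder) without a GUI tool, a short Python sketch can total up the files under it. It sums logical file sizes, like the "Size" column in Explorer, so sparse files such as the cache chunks will look far bigger than the space they really occupy; the helper name `folder_size` is hypothetical, and the folder path is the one quoted above.

```python
import os


def folder_size(path: str) -> tuple[int, int]:
    """Return (file_count, total_bytes) for all files under path.

    Uses logical sizes (os.path.getsize), like Explorer's "Size" column.
    Files that vanish or can't be read during the walk are skipped, and a
    missing folder simply yields (0, 0).
    """
    count = 0
    total = 0
    for root, _dirs, files in os.walk(path):
        for name in files:
            try:
                total += os.path.getsize(os.path.join(root, name))
                count += 1
            except OSError:
                continue
    return count, total


# %PROGRAMFILES% is only expanded this way on Windows.
RMW_DIR = os.path.expandvars(
    r"%PROGRAMFILES%\StableBit\CloudDrive\Data\ReadModifyWriteRecovery"
)

if __name__ == "__main__" and os.path.isdir(RMW_DIR):
    files, size = folder_size(RMW_DIR)
    print(f"{RMW_DIR}: {files} file(s), {size / 1024**3:.2f} GB")
```

If this folder stays empty while the free space keeps dropping, it can be ruled out, just like the cache folders.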

-

As for CloudDrive reporting false usage and telling me that 24TB are used on my C drive, I couldn't care less, to be honest. I don't mind if there is no fix for that.

What I do care about is CloudDrive filling my drive for real, while all other programs stop responding or crash completely because my C drive has 0 bytes left. That's a real issue.

It has now been a little more than 24h since the services were restarted, and I'm down to 160GB. That's a little more than 4GB per hour. I do not have any other drive that I can (or want to) dedicate to the CloudDrive cache. Since I am often on the road and away from home for work, there is no way I will restart all StableBit services every 2 to 3 days so my family can use the computer. It simply does not make sense.

I sincerely hope that this is due to a bad configuration and that there is a workaround for this.

-

4 hours ago, red said:

Try setting the cache type to fixed. I think they are using sparse files for the cache, and it often shows that it's allocating a lot of space even though that space is actually still free.

Check the cache folder by right-clicking it and selecting Properties. For me "Size" is often something crazy, but "Size on disk" (actual usage) is way smaller.

My cache folders are at the root of the C drive (maybe I set them there myself, I can't remember), and the sizes are indeed quite different, as you mentioned: 24TB, but only 4GB of real size on disk. Unfortunately, this does not match what's really happening. I think something somewhere else is also using space for CloudDrive, and it's not the 4 cache folders.

As of now, I am down to 233GB of free space, coming from 282GB, and it keeps shrinking. I did set the cache to a fixed size of 1GB for each of my 5 drives, but something from CloudDrive keeps getting bigger by the hour.

Again, just to reiterate: I am not doing anything else on my PC that could change the free disk space. Most of my programs and services are not in use; I've been using only my work laptop the whole day.
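red's tip above (compare "Size" with "Size on disk") can also be checked per file from a script. This is a minimal Python sketch, assuming the Windows API `GetCompressedFileSizeW` (which reports the allocated size of sparse and compressed files) on Windows and `st_blocks` on Linux/macOS; `allocated_size` and `report` are hypothetical helper names, and the result may differ slightly from Explorer's cluster-rounded "Size on disk".

```python
import os
import sys


def allocated_size(path: str) -> int:
    """Approximate number of bytes actually allocated on disk for a file."""
    if sys.platform == "win32":
        import ctypes
        from ctypes import wintypes

        kernel32 = ctypes.WinDLL("kernel32", use_last_error=True)
        get_size = kernel32.GetCompressedFileSizeW
        get_size.argtypes = [wintypes.LPCWSTR, ctypes.POINTER(wintypes.DWORD)]
        get_size.restype = wintypes.DWORD
        high = wintypes.DWORD(0)
        ctypes.set_last_error(0)
        low = get_size(path, ctypes.byref(high))
        # 0xFFFFFFFF (INVALID_FILE_SIZE) is only an error if GetLastError is set.
        if low == 0xFFFFFFFF and ctypes.get_last_error() != 0:
            raise ctypes.WinError(ctypes.get_last_error())
        return (high.value << 32) | low
    # POSIX: st_blocks counts the 512-byte units that are actually allocated.
    return os.stat(path).st_blocks * 512


def report(path: str) -> None:
    logical = os.path.getsize(path)
    on_disk = allocated_size(path)
    print(f"{path}: size {logical:,} bytes, size on disk {on_disk:,} bytes")


if __name__ == "__main__":
    # Usage: python sizes.py <file> [<file> ...]
    for name in sys.argv[1:]:
        report(name)
```

A large gap between the two numbers is exactly the sparse-file effect described above; it explains the huge "Size" of the cache folders, but not free space that actually disappears.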

-

To add some information to the initial problem: I decided to remove all Plex links between my libraries and CloudDrive, just so we can keep Plex out of the equation.

I restarted my services after doing this, which got my 280GB of free space back. Since then, I've been monitoring my PC. Again, no transfer has been done to or from CloudDrive. Right after restarting the service, I had exactly 282GB free on my C drive. About 4 hours later, I am down to 260GB, while nothing is using CloudDrive. The cache continues to grow, even when nothing requests it.

Also, the caches on my 5 drives are all set to 1.00GB Expandable, and according to CloudDrive, each is using between 500MB and 2.5GB of local cache.
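The readings above (282GB free right after the restart, 260GB about 4 hours later) are enough to estimate how fast the space disappears and when the drive would reach 0 bytes. This is a minimal Python sketch, assuming a constant rate; the function names are hypothetical, and `shutil.disk_usage` is used to take new readings (dividing by 1024³ matches the "GB" figures Windows Explorer shows).

```python
import shutil
import time
from typing import Optional


def shrink_rate_gb_per_hour(free_start_gb: float, free_end_gb: float, hours: float) -> float:
    """Average rate at which free space shrinks; positive means it is shrinking."""
    if hours <= 0:
        raise ValueError("hours must be positive")
    return (free_start_gb - free_end_gb) / hours


def hours_until_full(free_gb: float, rate_gb_per_hour: float) -> Optional[float]:
    """Hours before free space reaches 0 at a constant rate, or None if not shrinking."""
    if rate_gb_per_hour <= 0:
        return None
    return free_gb / rate_gb_per_hour


def current_free_gb(path: str = "C:\\") -> float:
    """Free space on the volume containing path, in GiB (what Windows calls GB)."""
    return shutil.disk_usage(path).free / 1024**3


def log_free_space(path: str = "C:\\", interval_s: int = 3600, samples: int = 5) -> list[float]:
    """Print the free space every interval_s seconds and return the readings."""
    readings = []
    for i in range(samples):
        readings.append(current_free_gb(path))
        print(f"{time.strftime('%Y-%m-%d %H:%M')}  {readings[-1]:.1f} GB free")
        if i < samples - 1:
            time.sleep(interval_s)
    return readings


rate = shrink_rate_gb_per_hour(282, 260, 4)  # 22GB lost in about 4 hours
print(f"{rate:.1f} GB/hour, full in about {hours_until_full(260, rate):.0f} hours")
```

With those numbers the sketch prints `5.5 GB/hour, full in about 47 hours`, i.e. roughly two days, which is consistent with having to restart the PC every couple of days.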

-

Hi,

Not sure exactly what's happening, but I am currently experiencing a very problematic issue with CloudDrive.

For context, I have 5 mapped CloudDrive drives, averaging about 2TB each, used only for backup purposes. They are all connected to my G Suite unlimited account. My cache drive is my C drive (500GB SSD), which, excluding its cache usage, is a little less than half full, leaving about 280GB free for the CloudDrive cache.

For a reason I do not understand, CloudDrive fills my C drive with cache even when I am not transferring anything (no upload, and no download either). For a couple of days/weeks now (sorry, hard to tell), I have constantly been getting messages from Windows telling me that my C drive is full. Not nearly full, but really full, as in 0 bytes left. So I need to shut down everything, restart the computer and voilà, all that space is free again: 280GB of free disk space. I also tried shutting down my StableBit services, and as soon as I do, the free space is back.

I did see a topic on the forum about some sort of caching issue, but to be honest, it was a little too technical for me and I could not tell whether it was the same issue. If it is related and has no possible resolution, then I have bought StableBit for nothing, since I cannot keep using software that fills up my C drive on a daily basis. Hopefully, it's not.

The only culprit I can think of is that my Plex server is currently connected to one of these CloudDrive drives, so scans might be running on that data. That is the only usage I can think of. I will see if I can disable scans on the one library connected to my CloudDrive and check whether it happens again. Does this make sense? Could a scan of a large drive with tons of files be enough to jam a CloudDrive cache?

Currently using the latest CloudDrive build on a Win7 Enterprise PC.

Thanks for any help or suggestions.

-

It seems to be more stable for me as well with the latest build. I still get errors about drives unmounting, and I'm not sure why that happens, but the "not authorized" errors have disappeared for now. Thanks.

-

Thanks for all these details, they helped a lot!

-

I will add a new CloudDrive drive to host all my music files, ripped from my personal CD collection.

What would be the best settings for this drive to ensure the fastest transfer of small files (5 to 10MB), and also to support streaming my music from my Plex server?

I've seen recommendations for Linux ISOs and other big files, but I did not see any recommended settings for MP3s.

Sector size, storage chunk size, chunk cache: what would you recommend?

One extra newbie question: does CloudDrive provide standard encryption at a lower level, even if Full Drive Encryption is not selected? I'm asking because I do not remember checking this option for my other drives, but when I go to my Google Drive account, I see StableBit folders with bits and pieces that mean nothing. Does this mean that I had checked Full Drive Encryption for my other drives?

Thanks for the help.

-

1 hour ago, Christopher (Drashna) said:

For those having these issues, what are your upload and download speeds?

If you're not comfortable replying here, then post in a ticket (especially if you've already opened one), or PM me.

350Mbps up and down. No throttling.

-

1 hour ago, wolfhammer said:

I've been on the latest build this entire time and have issues.

Yeah, we're still waiting for some news about this issue. The DEVs are looking into this.

-

38 minutes ago, tcz06a said:

I am logging now and will create the case as soon as I see it happen again. I also notice that each time I reauthorize, the StableBit CloudDrive app name is incremented by a number, i.e.:

Stablebit CloudDrive (9)

Same here regarding the incrementing numbers.

I have enabled web logging, but have been unable to reproduce it so far. I've been monitoring for about an hour, waiting for it to happen again.

Thanks.

EDIT: It happened again; I just submitted my logs.

-

Glad to know I'm not the only one with this issue. Something must have changed in the way Google authenticates CloudDrive; I suppose the CloudDrive team will need to make adjustments. Has anyone opened a support request so far? If not, I will do so today.

EDIT: support request # 4588663

-

For a couple of days now, I have been getting constant "is not authorized with Google Drive" errors for my drives as soon as I try to upload to them.

My account is a G Suite unlimited account.

Today I uploaded about 50GB of data and had to re-authorize all my drives (I have 4) 2 to 3 times each. This is a real pain to deal with, and I lose access to my drives again and again. There does not seem to be any logic to it: the drives fail with this error one after the other, with a random amount of time between errors on each drive. As a side note, I have been uploading to only one of my 4 mapped drives.

Does anybody know what could be the issue?

Thanks.

Issue with cache or local data consumption (in General)