vfsrecycle_kid

Members | Posts: 29 | Days Won: 1

Everything posted by vfsrecycle_kid

-

Maybe not immediately, which is why I said "can it calculate". I'm aware it wouldn't know instantaneously and that re-measuring is necessary. The two cases I have in mind:

* Losing a disk when the pool was not full: can the existing drives hold all the data and still satisfy the replication rules?
* Losing a disk when the pool was full: how big a replacement drive do you need to satisfy the replication rules again?

My point is that DP should be able to figure out both BEFORE duplication simply fails from lack of disk space. Right now all you get is a duplication progress percentage, and for multi-day rebuilds you don't want it to fail at 32% and then take days to notice (yes, some people actually run this on servers they don't check daily).

Also, regarding the math, I've edited my post. This proves I can't even trust myself, and I'd much rather trust what DP tells me. Thanks for the correction. Email notifications (which I use and adore) augmented with this information would be fantastic.

-

Correct, in that case you don't know until the files are added to the pool, and that is a different beast altogether, since you're talking about usable vs. true disk space. However, this is about re-satisfying the replication rules for existing content. DP knows what was lost, how it needs to be replaced, and ultimately how much disk space it needs to do so. To further your point, I concede that a "free space" counter does not make sense, but a "disk space needed to re-satisfy replication" figure seems deterministic to me when a dead drive is being replaced.

A super simple example:

1. DrivePool has 4x4TB drives with global 2x replication: 8TB usable disk space, reported as 16TB to the OS (no problems here).
2. The pool is 100% full, meaning 16/16TB is used (8TB of content replicated 2x).
3. The pool loses a drive to hardware failure. No data is lost, but the replication rules are now unsatisfied.
4. At this point, 3x4TB leaves 12TB of pooled space with approx. 4TB* of unreplicated but not lost data.

With the above example, it seems fairly obvious and deterministic to me that DrivePool should be able to recommend "please insert another drive of at least 4TB*". Replace the example with a myriad of replication rules, folders, etc., and the math should still check out, so maybe DP could recommend that to the end user?

That leads back to my initial topic: if it's easy to work out how much space is needed to rebalance properly, you can also say how much space will be left post-balance. If a 4TB* drive is needed but you insert an 8TB, you already know you'll have 4TB free in the end.
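To make the arithmetic in the example above concrete, here is a minimal sketch in Python. It is not how DrivePool works internally; `replacement_plan` and its parameters are hypothetical names, and it assumes the only inputs that matter are how many TB of file copies lived on the dead drive and how much free space the surviving drives have. It ignores real-world constraints such as file granularity and the rule that two copies of a file cannot share a drive.

```python
def replacement_plan(lost_copies_tb, survivors_free_tb, new_drive_tb=0.0):
    """Estimate the space needed to re-create copies that lived on a dead drive.

    lost_copies_tb    -- TB of file copies that were stored on the dead drive
    survivors_free_tb -- total free TB on the drives still in the pool
    new_drive_tb      -- size of the replacement drive (0 if none yet)

    Hypothetical helper for illustration only; not a DrivePool API.
    """
    available_tb = survivors_free_tb + new_drive_tb
    # How much capacity is still missing before replication can be re-satisfied.
    shortfall_tb = max(0.0, lost_copies_tb - available_tb)
    # How much raw space remains once the lost copies have been re-created.
    free_after_tb = max(0.0, available_tb - lost_copies_tb)
    return {"shortfall_tb": shortfall_tb, "free_after_tb": free_after_tb}
```

For the 4x4TB example, `replacement_plan(4.0, 0.0)` reports a 4TB shortfall (the minimum replacement size), and `replacement_plan(4.0, 0.0, new_drive_tb=8.0)` reports no shortfall with 4TB of raw space left over.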

-

That's why it's so complex: it depends on what the replication rules for the lost data were set to. For example, balancing finished and I was left with 1TB free (and with my replication rules in place, that realistically means 0.5TB usable). I guess the ultimate question is whether there's a way to calculate the estimated actual free disk space.
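The "1TB free means 0.5TB usable" estimate can be written down as a small calculation. This is only a sketch under the assumption that you can guess what share of future data falls under each duplication rule; `usable_free_tb` and the `rules` format are hypothetical, not anything DrivePool exposes.

```python
def usable_free_tb(raw_free_tb, rules):
    """Estimate usable free space given raw free space and duplication rules.

    rules is a list of (share_of_new_data, copies) pairs whose shares sum to 1,
    e.g. [(1.0, 2)] for "everything 2x" or [(0.9, 2), (0.1, 3)] for a mix.
    Hypothetical helper for illustration only.
    """
    total_share = sum(share for share, _copies in rules)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("shares must sum to 1")
    # Average number of physical copies written per TB of new content.
    avg_copies = sum(share * copies for share, copies in rules)
    return raw_free_tb / avg_copies
```

With everything at 2x, `usable_free_tb(1.0, [(1.0, 2)])` gives 0.5, matching the figure in the post. Any data under a 3x rule pushes the usable number lower.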

-

Hello,

Hopefully this question makes sense. I have a big DrivePool and one of my 8TB drives just died. While I wait for my 16TB IronWolf to replace it, re-balancing across the other drives has begun. However, it will be a multi-day rebuild, and drive space will definitely be tight, which leads to my questions:

1. Is DrivePool smart enough to know whether there's enough space to rebuild with my replication rules (2x globally, 3x for special folders)?
2. Does DrivePool know how much free space will be available once rebuilding is finished?
3. Is that presented to the user somehow?

I see metrics like "Free Space" and "Unduplicated", and while Free Space > Unduplicated, I assume my duplication factor will determine whether there's enough space or not.

Cheers

-

My father encountered similar issues when trying to access our pool. At the time, a restart fixed the problem and we all had permission to access the pool again (reads had been fine, but writes weren't allowed). From there we just considered it a fluke and updated to the latest beta build. Haven't encountered it since.

-

Suggestions to avoid accidental deletes?

vfsrecycle_kid replied to vfsrecycle_kid's question in General

That sounds fantastic. And just to confirm: I can get this sort of functionality without relying on their cloud (that is to say, on their free plan)?

-

Suggestions to avoid accidental deletes?

vfsrecycle_kid replied to vfsrecycle_kid's question in General

I definitely agree with you both that I should employ some sort of cloud solution, at least to get rid of the 'single point of catastrophic failure' (quite honestly, it had slipped my mind; I'll look into this later in the week. Amazon has a $60/yr "Unlimited" Photos/Videos storage plan, but I'll dig deeper).

However, that alone won't really address my issue. As you mentioned yourself: "as long as you noticed before the deletion goes out of rotation". I can't necessarily trust that we'll happen to notice a file is missing. Things could disappear and we wouldn't even know they are gone. I know some cloud providers help here (Dropbox keeps a detailed log of deleted files and sometimes offers the ability to recover them), but I think in my case I really want a robust, highly documented/customizable solution. (Example: on Dropbox you can't say "keep deleted files for X period", but can you on Amazon? I'm not sure, I'll have to check.)

Shane makes a fantastic point: most likely something with explicit versioning would help me out here. I've looked at Syncthing in the past (mostly because I helped set up my neighbors on a Syncthing cluster) as well as Crashplan. The former doesn't have email notifications, however. But Syncthing offers an interesting feature, versioning (see https://docs.syncthing.net/users/versioning.html, most notably the Simple File Versioning mechanism): I can keep X copies of deleted files (in my case, 1 will probably suffice). Granted, I know close to nothing about Crashplan, so I will look into it. I'm a little biased towards the open-source Syncthing as it has treated my neighbors well (they have a 3-machine, 2-laptop cluster that keeps their ~50GB of essential data safe).

What I think I will do is a test run of Simple File Versioning on my neighbor's machine to see how this versioning mechanism works. It would be minimal effort for me to code a small script that sends out an email whenever files are moved to Syncthing's versioning folder (as mentioned in the Syncthing docs, a ".stversions" folder is created). This way, I don't have to worry about the problem of 'noticing deleted files before they are rotated': with Syncthing I can simply set it to move deleted files to .stversions and NEVER remove them from there unless done manually. And of course there is the added benefit of having a node in the cluster running outside of the house, in case of fire.

---

My mind is all over the place, but I am not necessarily in a hurry to implement everything. Thank you for the great ideas so far, especially since they had never occurred to me.

-
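The small notification script mentioned in the Syncthing post above could look roughly like the following Python sketch. It only assumes that versioned files show up under a `.stversions` directory, as the Syncthing docs describe; `find_new_versions` and `build_alert` are hypothetical helper names, and the set of already-seen files is assumed to be persisted between runs by the caller. Actually sending the mail (for example with `smtplib.SMTP(...).send_message(msg)`) is left out because server details are site-specific.

```python
import os
from email.message import EmailMessage


def find_new_versions(versions_dir, seen):
    """Return relative paths under versions_dir that are not in `seen`.

    versions_dir -- path to a folder's .stversions directory
    seen         -- set of relative paths reported on earlier runs
    """
    found = set()
    for root, _dirs, files in os.walk(versions_dir):
        for name in files:
            full_path = os.path.join(root, name)
            found.add(os.path.relpath(full_path, versions_dir))
    return sorted(found - set(seen))


def build_alert(new_files, sender, recipient):
    """Build (but do not send) an email listing newly versioned files."""
    msg = EmailMessage()
    msg["Subject"] = f"{len(new_files)} file(s) moved to .stversions"
    msg["From"] = sender
    msg["To"] = recipient
    msg.set_content("\n".join(new_files))
    return msg
```

Run from a scheduled task, the script would call `find_new_versions`, send an alert if the result is non-empty, and then add those paths to the stored `seen` set.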

Hi folks,

Got a question I figure maybe some people in here have thought about. So there's the age-old debate of duplication vs. replication, and I get it. I've got family photos in a DrivePool with 3x global duplication (around 1TB total). Now, this is all replicated in the pool. While this protects against hard drive failures, it does not protect against mistakes. My father could delete the Photo directory and it's effectively game over (yes, there are undelete tools, but let's ignore that for the sake of argument).

What I am wondering is whether people have any tried-and-tested, minimal-effort solutions to this kind of problem. My initial ideas:

1. Remove deletion privileges from the main user used to access the NAS. Destructive actions can only be performed via a special, designated "Deletion" Windows user with a different login and password.
2. Alternatively, create a second DrivePool for data I want to designate as "mistake proof". Use some form of incremental/differential/<something else> backup tool to routinely (every week?) mirror from the original DrivePool to the secondary pool. The idea is that if files are ever deleted on Pool A, their "ghost" will always live on Pool B (i.e. Pool B should be forever growing, never decreasing).
3. Or some sort of hybrid solution between 1 and 2?

The second solution assumes the data rarely changes, which is the case for my family photos.

Now, I'm not saying any of my solutions are right; I am still very much in the brainstorming process. I want my family to have confidence that I can keep their data safe (the DrivePool is around 40TB now, but for this post only 1TB applies to my 'problem'), and that effectively means I need to protect them... from themselves. And who knows, maybe I'll type the wrong command in the CLI one day and accidentally nuke everything.

Thanks folks!
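The "Pool B only ever grows" idea from the second option can be sketched as an additive mirror: copy anything that is missing on the target, and never delete or overwrite. This is a minimal illustration in Python, not a replacement for a real backup tool; `additive_mirror` is a hypothetical name, and it assumes (as the post does) that the files rarely change, since changed files are deliberately not re-copied.

```python
import os
import shutil


def additive_mirror(src_root, dst_root):
    """Copy files that exist under src_root but not under dst_root.

    Nothing is ever deleted or overwritten on the destination, so a file
    removed from the source keeps its "ghost" copy on the destination.
    Returns the sorted list of relative paths that were copied.
    """
    copied = []
    for root, _dirs, files in os.walk(src_root):
        rel_dir = os.path.relpath(root, src_root)
        target_dir = os.path.join(dst_root, rel_dir)
        os.makedirs(target_dir, exist_ok=True)
        for name in files:
            src = os.path.join(root, name)
            dst = os.path.join(target_dir, name)
            if not os.path.exists(dst):
                shutil.copy2(src, dst)  # copy2 also preserves timestamps
                copied.append(os.path.normpath(os.path.join(rel_dir, name)))
    return sorted(copied)
```

Scheduled weekly, a call such as `additive_mirror("P:\\Photos", "Q:\\Photos")` (example paths only) would leave deletions on the first pool without effect on the second.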

-

DrivePool + Scanner - Questions about data evacuation (unreadable sectors)

vfsrecycle_kid replied to vfsrecycle_kid's question in General

Thanks for the clarification. Just didn't want to make a mistake. My DrivePool (minus the one drive) is now balancing. It's around 40% done, so I will let that finish overnight. I'm running chkdsk /B on the potentially problematic drive in another machine; that will also run overnight. It could very well be that there's nothing wrong with the drive and it was simply some weird state the OS was in. Fingers crossed.

-

You'll want to open diskmgmt.msc and from there right-click the drives in an order that does not produce conflicts. See: http://wiki.covecube.com/StableBit_DrivePool_Q6811286

If you don't want W, X, Y, Z to be seen at all, then you do not need to give them drive letters (DrivePool will still be able to pool them together). Instead of clicking "Change", simply click "Remove" when dealing with the drive letters.

Probably the easiest order if you want every drive to keep a drive letter:

1. Change F to D
2. Change E, G, H, I to W, X, Y, Z
3. Change J to E (now that E has been freed up)

Probably the easiest order if you want the pooled drives to have no drive letter:

1. Change F to D
2. Remove drive letters E, G, H, I (see my picture for the Remove button)
3. Change J to E

You should be able to keep DrivePool running during this whole transition (you don't need to remove drives from the pools). Personally I'd go with Option 1. While there are folder mounts that you could use, I think it would be easiest to keep everything accessible the "normal" way. Plus, without drive letters you won't be able to add non-pooled content to the pooled drives (just in case you wanted to do that).

Hope it helps
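The "order that does not produce conflicts" rule can be illustrated with a tiny planner: only perform a change when its target letter is currently free. This Python sketch does not touch Windows at all; `plan_letter_changes` is a hypothetical helper, and the set of letters in use (including the assumption that D starts out free) is made up for the example.

```python
def plan_letter_changes(changes, in_use):
    """Order drive-letter changes so each target letter is free when needed.

    changes -- dict mapping current letter -> desired letter
    in_use  -- set of letters currently assigned to any volume
    Returns a list of (old, new) pairs in a conflict-free order.
    Raises ValueError if the changes form a cycle (e.g. swapping two letters),
    which would need a temporary spare letter.
    """
    pending = dict(changes)
    used = set(in_use)
    order = []
    while pending:
        # A change is ready when nothing currently occupies its target letter.
        ready = sorted(old for old, new in pending.items() if new not in used)
        if not ready:
            raise ValueError("circular change: free up a letter manually first")
        old = ready[0]
        new = pending.pop(old)
        used.discard(old)
        used.add(new)
        order.append((old, new))
    return order
```

For the changes discussed above, `plan_letter_changes({"F": "D", "E": "W", "G": "X", "H": "Y", "I": "Z", "J": "E"}, {"C", "E", "F", "G", "H", "I", "J"})` always places the J to E change after E has been moved away, which is the same constraint the manual steps respect.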

-

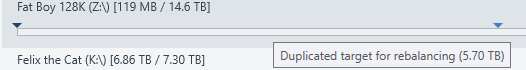

Hi folks,

I've got a drive in a pool that has unreadable sectors (global 2x duplication). As of right now, Scanner is reporting 4838830 unreadable sectors (2.31GB) after finishing its scan. I have the option to start a file scan, but I have not started it yet.

---

Meanwhile, on the DrivePool side, the affected disk has been limited:

* There is a red arrow offering: "New file placement limit: 0.00GB"
* There is a blue arrow offering: "Duplicated target for re-balancing (-2.04TB)"
* A few other drives in the pool have a blue arrow: "Duplicated target for rebalancing (XXGB)"

Version: 2.2.0.744 BETA x64, Win10

I'm a little confused as to the order I should be doing things here. DrivePool reports "Duplicating..." and then returns an error related to 2 files, giving me the option to Duplicate. This process seems to repeat.

Looking at this thread: http://community.covecube.com/index.php?/topic/829-pool-going-to-hell/

I'm still a little confused as to the order of operations:

1. Drive has unreadable sectors
2. DrivePool has a new 0.00GB file placement limit
3. DrivePool attempts to evacuate data (is this done in my case? How do I interpret that negative number?)
4. Run file scan to "repair data"
5. Pull out failing drive
6. RMA failing drive

Basically, the heart of my question is: what do I do now? And when is it safe to pull out the drive and begin the RMA?

Thanks

edit: I clicked file scan and it just says the MBR is damaged. Regardless of the issue (I suppose I can see whether this is a real drive failure or something a reboot will fix), has my data been evacuated?

edit1.5: Running chkdsk complained about the disk being RAW. Rebooting the machine fixed that, so I wonder if this is just a false positive or some weird state everything was in.

edit2 (newest): If I can trust that my 2x duplication works, is it fair to assume that I can simply pull out the drive and let DrivePool re-balance everything across the remaining drives in the pool (there is enough space), which implies I am temporarily in a state of no duplication for all the files on the drive I just pulled out? If that is the case, I'm fine with just formatting the pulled-out drive and investigating further whether I need to RMA it, or just throwing it back in the pool as a fresh drive.

-

Lingering files in PoolPart after a successful Removal from Pool?

vfsrecycle_kid replied to vfsrecycle_kid's question in General

Sounds good. Thanks for the background info. Successfully pulled out and formatted the two removed drives, and replaced them with 2 new 8TBs. No issues to report. Thanks again for the great product. Made a potentially scary problem very easy to handle.

-

Lingering files in PoolPart after a successful Removal from Pool?

vfsrecycle_kid replied to vfsrecycle_kid's question in General

Hello,

Thanks for the feedback. You are correct: while the first drive was mid-removal (let's say at 1% or so), I queued up the second drive to be removed. I was not actively removing anything from the pool, but that is not to say something automated or service-like wasn't accessing something on the system.

Also, to clarify: the lingering 400GB on both removed drives was identical, and it matches the pool's contents by CRC post-removal. My initial issue was that I didn't want to delete the PoolPart folders on the 2 removed drives unless I was 100% sure their contents were still persisted within the pool. After my own check it is clear that everything is good, and I can clean up the two newly removed drives.

I'm currently out of the country, and since removals are rare, I'm not going to attempt to upgrade to the beta version remotely. So hopefully all is well. Thanks for the insight.

-

Lingering files in PoolPart after a successful Removal from Pool?

vfsrecycle_kid replied to vfsrecycle_kid's question in General

* Version: 2.1.1.561
* Drives in pool: 4x8TB, 2x6TB (these are the ones that were removed)
* Options: no forced removal, no "duplicate data later" (i.e. both unchecked)
* The second drive to be removed was "queued for removal" in DrivePool

See attached logfiles. Removal started around Sep 5. I have just finished my checksum, and everything left outside of the pool on those 2 drives matches the 400GB of data inside the pool (CRC).

-

Lingering files in PoolPart after a successful Removal from Pool?

vfsrecycle_kid replied to vfsrecycle_kid's question in General

Not quite sure if this is the same issue I am encountering, but it is useful to know, so it is appreciated. I have yet to confirm whether any files are corrupt on either the pool end or either of the removed drives. Currently I'm manually running a checksum comparison between the removed drives and the pool to look for anything missing. It should be done in around 18 hours. I'm basically using Teracopy with the Verify function ONLY (no move, no copy) to compare Source (Removed Drive 1 + Removed Drive 2) == Target (DrivePool).

However, a new and interesting discovery: both removed drives have the SAME ~400GB in them. Is this a telling sign?

-

Lingering files in PoolPart after a successful Removal from Pool?

vfsrecycle_kid posted a question in General

Hi folks,

I have a 40TB NAS, and due to some interesting SMART results on 2 drives, I've taken the precaution of removing them formally through DrivePool and passing the data off to the other drives in the pool (2x global duplication, 3x duplication for "My Photos").

The first of the 2 drives has finished the removal process, but it seems to have left maybe ~20 folders (with a bunch of files) in the now unhidden PoolPart folder. I performed a simple SHA1 check on 1 of the files and compared it to the version that is still in the pool, and it is an exact match. So I'm curious as to why, seemingly at random, a few files weren't removed from the removed drive. The PoolPart folder on the removed drive is around 400GB, whereas it was originally 4TB.

Any insight as to what happened? I don't want to delete this folder unless I'm certain that this 400GB of content still exists in the pool, but I'm not sure how to compare automatically.

Thanks!

edit: Just a thought, could it be that the files were somehow in use, so DrivePool merely copied them to another drive instead of moving them?

edit2: Same thing with the 2nd removed drive. Once removal completed, around 400GB of the original 4TB remained on the drive. The contents appear to be in the pool as expected, as well.

-
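For the "how to automatically compare" question in the post above, one possible approach is to hash every leftover file and the file at the same relative path in the pool. The Python sketch below assumes the leftover PoolPart folder uses the same relative folder layout as the pool drive; `compare_leftovers` and `sha1_of` are hypothetical helper names, and SHA1 is used only because the post already used it for a spot check.

```python
import hashlib
import os


def sha1_of(path, chunk_size=1 << 20):
    """Return the SHA1 hex digest of a file, read in 1 MiB chunks."""
    digest = hashlib.sha1()
    with open(path, "rb") as handle:
        for block in iter(lambda: handle.read(chunk_size), b""):
            digest.update(block)
    return digest.hexdigest()


def compare_leftovers(leftover_root, pool_root):
    """Check that every file under leftover_root also exists in pool_root.

    Returns (missing, mismatched): relative paths that are absent from the
    pool, and relative paths whose contents differ.
    """
    missing, mismatched = [], []
    for root, _dirs, files in os.walk(leftover_root):
        for name in files:
            src = os.path.join(root, name)
            rel = os.path.relpath(src, leftover_root)
            dst = os.path.join(pool_root, rel)
            if not os.path.isfile(dst):
                missing.append(rel)
            elif sha1_of(src) != sha1_of(dst):
                mismatched.append(rel)
    return sorted(missing), sorted(mismatched)
```

If both returned lists are empty, every leftover file has an identical counterpart in the pool, which is the condition the post wants before deleting the PoolPart folder.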

Change allocation unit size of all drives in DrivePool: Best way?

vfsrecycle_kid replied to vfsrecycle_kid's question in General

Thanks for your input, Christopher. I'll be sure to utilize that balancer and have it move all files off that drive, then pull the drive out of the pool, format it, and add it back. I figured it would have to be something like that, and your idea sounds like the right approach. My main concern was ensuring that while I was doing all of this moving and formatting, I always had my 2x and 3x duplication intact (i.e. I wasn't manipulating my drives in a "degraded" state, with a single instance of a file in the case of 2x, or two in the case of 3x). I'll most likely do that, and as you said it'll take time, so I'll have to find an optimal time because I'd like to monitor it while it runs. Thanks!

-

Change allocation unit size of all drives in DrivePool: Best way?

vfsrecycle_kid posted a question in General

Hi folks,

I've got 4x8TB and 2x6TB drives in a 6-drive DrivePool with 2x duplication on all files and 3x duplication for a specific folder. Attached is a photo of current drive stats:

I formatted these drives with a 4096-byte allocation unit size. I want to change all 6 drives to 64KB. I'm looking for the best way to perform this change on all 6 drives with minimal risk; basically, a recommended exact procedure. In my head I'd be doing this:

1. Remove a drive from DrivePool
2. Wait for the 2x/3x stuff to propagate to the remaining drives
3. Format the drive with the new allocation unit size
4. Add it back to DrivePool (presumably it won't move any data back to this drive)
5. Repeat for the remaining drives

However, there must be an easier way. Is there a way to change it without formatting? Without removing the drive from the DrivePool? I'm sure there are 20 ways to get this done, so hopefully I can get some staff or user input on the issue. I'm tech savvy but have never dealt with a situation like this before, not to mention the added complexity of the drives being in a pool.

Thanks!

-

DrivePool - Recycle Bin - Undelete Functionality (Grayhole Trash Equivalent)?

vfsrecycle_kid replied to vfsrecycle_kid's question in General

I'll have to give it a shot anyway, haha. There are some nice abstractions people offer. I wonder if they'll offer a non-commercial single-use license as a gesture of goodwill, haha!

-

DrivePool - Recycle Bin - Undelete Functionality (Grayhole Trash Equivalent)?

vfsrecycle_kid replied to vfsrecycle_kid's question in General

Interesting. I didn't know about this. I'll look into how easy it is to write a file system filter driver. It seems there are some tutorials out there with bootstrapped code all ready to use.

-

Regarding 2. I believe in conjunction with the StableBit Scanner, there's a DrivePool balancer that moves files to another drive when it starts to detect SMART errors. http://i.imgur.com/A2GMxNZ.jpg (found screenshot off google, most likely old version)

-

DrivePool - Recycle Bin - Undelete Functionality (Grayhole Trash Equivalent)?

vfsrecycle_kid replied to vfsrecycle_kid's question in General

Definitely considered this one already. I originally thought I could disable DELETE but keep MOVE (that way you'd just "move" to the 'Recycle Bin' and I'd have a script clean up the folder). But I quickly found out that a move is simply an NTFS copy + delete, so I had to nix that.

DrivePool plus simply telling our users to manually move files when they want to delete them is probably going to be the solution. Sure, there might be some accidental times when there'll be a delete, but hopefully the drives won't die during that period!

When you say a file system filter (I've been in talks with CBFS to get a single-use license), do you mean a system similar to your balancer plugins, i.e. something exposed so that someone like me could sneak in "delete override" plugin-type functionality? Interesting stuff! Thanks

-
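The clean-up script for the manual "recycle bin" folder mentioned in the post above might look like this Python sketch. Because a moved file keeps its old modification time, the sketch tracks when each file was first seen in the folder rather than trusting timestamps; `sweep_recycle_bin` is a hypothetical name, and the `index` dictionary is assumed to be saved between runs (for example as JSON) by the caller.

```python
import os
import time


def sweep_recycle_bin(bin_dir, index, max_age_days, now=None):
    """Delete files that have sat in bin_dir longer than max_age_days.

    index -- dict mapping relative path -> timestamp when first seen here;
             updated in place and meant to be persisted between runs.
    Returns the sorted list of relative paths that were deleted.
    """
    now = time.time() if now is None else now
    max_age_seconds = max_age_days * 86400
    present, removed = set(), []
    for root, _dirs, files in os.walk(bin_dir):
        for name in files:
            path = os.path.join(root, name)
            rel = os.path.relpath(path, bin_dir)
            present.add(rel)
            first_seen = index.setdefault(rel, now)  # start the clock on new files
            if now - first_seen > max_age_seconds:
                os.remove(path)
                removed.append(rel)
    # Forget files that were deleted just now or restored by a user.
    for rel in list(index):
        if rel not in present or rel in removed:
            del index[rel]
    return sorted(removed)
```

A user who moves a file back out of the folder before the deadline simply drops out of the index on the next run, so nothing is lost.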

DrivePool - Recycle Bin - Undelete Functionality (Grayhole Trash Equivalent)?

vfsrecycle_kid replied to vfsrecycle_kid's question in General

Just noticed. Thanks for the update. Alex is not wrong in that it requires intercepting a delete and replacing it with a move (unless we're deleting from the designated "recycle bin"). I appreciate the technical POV on the issue, and I'm glad that at least it didn't take long to find out what I need to do.

I might just have to compile http://liquesce.codeplex.com/ from source to accomplish what I'd like. They use CBFS, and I assume I'd just intercept the delete on specific conditions and handle it accordingly. However, I've used Liquesce in the past and I don't think it's as stable as DrivePool; I had a few minor issues working with it. I'd also lose a couple of nice-to-have features that DrivePool offers.

Either way, I'm glad I have all this new knowledge! If it ever does get implemented, I'm sure I'll latch onto it. Thanks