Viktor

Members

Posts: 56

Days Won: 5

Everything posted by Viktor

-

Well, given the high number of critical and important pending code change requests, I expect it may still take a while before feature request 27686 (Add support for Google Team Drive) is completed.

-

It seems that Google Team Drives cannot be used with CloudDrive yet (see here).

-

Don't mix up CloudDrive and DrivePool. CloudDrive provides hard-drive-like images located in the cloud, while DrivePool is based on hidden directories on its pooled hard drives or cloud drives. Like a physical drive, a cloud drive can be attached to only one computer at a time, but you can move it from one computer to another (detach it here and attach it there).

-

Your screenshot shows that you are using an older CloudDrive version. Perhaps the latest version (1.1.0.1038) changes something.

-

Check the Startup tab of Task Manager. It should have an enabled entry “StableBit DrivePool Notifications”. If its status is Disabled, you need to enable it. If the entry is missing completely, you should repair the DrivePool installation or reinstall. If the entry is there and enabled but no process is running, then something is preventing it from running, most likely virus protection software, or it’s crashing for some other reason. In that case you may find some details in the event logs or the virus protection logs.

-

I saw the same issue quite frequently in the past (not only with Google Drive but with OneDrive too). I had to press the Retry button several times before the affected drives finally got mounted. But things have improved with recent beta builds (I'm currently using 1.1.0.1029 BETA). Maybe give it a try.

-

Did you try it with these settings? You could also try excluding the hidden PoolPart.xxx… folders on the drives that make up the pool from virus protection. Or you could take a riskier approach and disable virus protection completely for a while.

-

Any reason why you aren't using a more current build? According to the change log, this is the latest one that passed the upgrade and functional tests: http://dl.covecube.com/CloudDriveWindows/beta/download/StableBit.CloudDrive_1.1.0.1025_x64_BETA.exe

-

I changed my Clouddrive Google Drive folder name and I broke everything

Viktor replied to swassglass's question in General

The root folder is called "StableBit CloudDrive". If you only renamed that folder, just give it that default name again. The entire (Google Drive) StableBit folder structure looks like this:

\StableBit CloudDrive\CloudPart-xxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxx\xxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxx-CONTENT(-ATTACHMENT,-METADATA)

The "xxxx..." string is a placeholder for the globally unique ID in hexadecimal (e.g. abcdef01-abc1-def2-1234-12345abcd67f). All files inside the folders on Google Drive have that ID in their names, and you will also find it in the name of the cache folder on your local machine (e.g. \CloudPart-abcdef01-abc1-def2-1234-12345abcd67f).
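To check whether a renamed folder still matches the naming scheme described above, a small script can pull the ID out of the folder names. This is only a sketch: the function names are made up for illustration, and the patterns assume the names look exactly like the examples in this post (eight-four-four-four-twelve hex digits, with the suffixes CONTENT, ATTACHMENT, and METADATA).

```python
import re

# Hex ID in the 8-4-4-4-12 layout shown in the post (assumed format).
GUID = r"[0-9a-fA-F]{8}(?:-[0-9a-fA-F]{4}){3}-[0-9a-fA-F]{12}"

CLOUDPART_RE = re.compile(rf"CloudPart-({GUID})")
SUBFOLDER_RE = re.compile(rf"({GUID})-(CONTENT|ATTACHMENT|METADATA)")


def drive_id_from_cloudpart(name):
    """Return the drive ID from a 'CloudPart-<id>' folder name, or None."""
    match = CLOUDPART_RE.fullmatch(name)
    return match.group(1).lower() if match else None


def parse_subfolder(name):
    """Return (drive_id, kind) for an '<id>-CONTENT' style name, or None."""
    match = SUBFOLDER_RE.fullmatch(name)
    return (match.group(1).lower(), match.group(2)) if match else None
```

If the ID taken from the local cache folder name equals the ID in the cloud folder names, both belong to the same drive.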

It looks like the “pinning issue” is caused by the prefetcher. I turned off prefetching on the Google drives and have not seen any pinning data process since then. Furthermore, by disabling prefetching I also got rid of frequent IO errors and timeout warnings (not one red error or yellow warning message for two days now). I’m using version 1.0.3.982 BETA.

-

But the question is: Why is it reducing the amount of pinned data and then running through the pinning process so frequently? It happens only with Google drives and freezes the computer several times a day - very annoying!

-

That’s exactly what I see too with Google drives: frequent “pinning data” phases with extreme CPU loads. It's unclear why pinning needs to be performed at all. Neither directories nor metadata are being changed on any drive. One drive is only downloading (being scanned for a CrashPlan backup). The pinned data volume suddenly gets reduced by a certain amount (e.g. 200 MB), which then triggers such a pinning phase and makes the entire system freeze almost completely for a couple of minutes. That happens multiple times a day.

-

To change the cache location, you need to detach the drive first and then attach it again. Specify the cache drive under Advanced Settings of the Attach dialog.

-

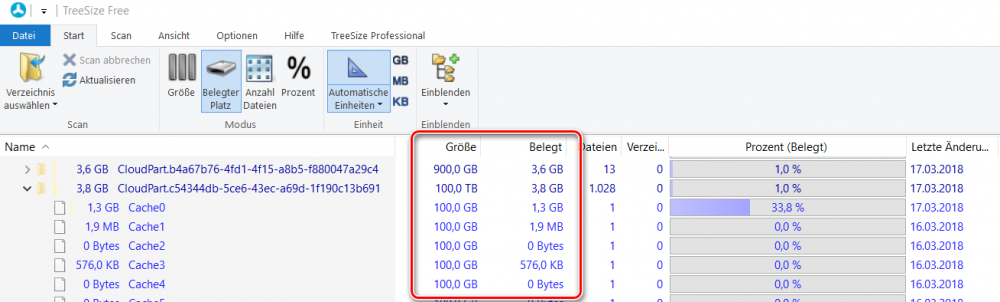

As far as I understand, a CloudDrive cache folder is a placeholder for the entire file system in the cloud. It contains the full set of chunk files, each showing the same "virtual" size (e.g. 100 GB), but only a few are partially filled with cached data (real size). Only the real sizes count toward the total disk space usage (see the attached TreeSize example). I have cache folders with more than 100 TB total size on a 500 GB SSD and have never run out of space so far. I wonder why TreeSize doesn’t give you a hint as to where the space is actually being used. Did you run it as Administrator and check normally hidden places like the System Volume Information folder? Is the C drive free from errors? And can you rule out malware?
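The difference between the "virtual" and the real size can also be checked with a short script. This is only a sketch, assuming the cache chunk files behave like sparse files; the function names are made up for illustration. On Windows it asks the Win32 function GetCompressedFileSizeW for the space actually allocated, and on other systems it falls back to st_blocks.

```python
import os
import sys


def apparent_and_allocated(path):
    """Return (apparent_size, allocated_size) in bytes for a single file."""
    st = os.stat(path)
    if sys.platform == "win32":
        import ctypes
        from ctypes import wintypes

        kernel32 = ctypes.WinDLL("kernel32", use_last_error=True)
        func = kernel32.GetCompressedFileSizeW
        func.argtypes = [wintypes.LPCWSTR, ctypes.POINTER(wintypes.DWORD)]
        func.restype = wintypes.DWORD

        high = wintypes.DWORD(0)
        ctypes.set_last_error(0)
        low = func(str(path), ctypes.byref(high))
        # 0xFFFFFFFF signals an error only if the last error code is set.
        if low == 0xFFFFFFFF and ctypes.get_last_error() != 0:
            raise ctypes.WinError(ctypes.get_last_error())
        allocated = (high.value << 32) | low
    else:
        # st_blocks is counted in 512-byte units on POSIX systems.
        allocated = st.st_blocks * 512
    return st.st_size, allocated


def folder_usage(root):
    """Sum apparent and allocated sizes of all files below root."""
    total_apparent = total_allocated = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            apparent, allocated = apparent_and_allocated(os.path.join(dirpath, name))
            total_apparent += apparent
            total_allocated += allocated
    return total_apparent, total_allocated
```

Calling folder_usage on a cache folder (run as Administrator) should return a very large first number and a much smaller second one if the cache is working as described.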

-

If it’s not the cache folders, you should try to find out which other folders and files are taking up more and more disk space. I’d recommend using the freeware tool “TreeSize Free” to identify them. One place other than the cache that I know CloudDrive occasionally writes temporary files into is the folder %PROGRAMFILES%\StableBit\CloudDrive\Data\ReadModifyWriteRecovery. But most of the time this folder should be empty.

-

Constant "Name of drive" is not authorized with Google Drive

Viktor replied to irepressed's question in General

I installed the latest version 3 days ago and haven’t seen this notification since.

Constant "Name of drive" is not authorized with Google Drive

Viktor replied to irepressed's question in General

Not sure if everyone is talking about the same issue. At least in my experience, this “not authorized” message (see attached) is a false alarm and does not really affect the drive. Whenever I see it, the affected drive is still connected and accessible, but has always been inactive for a while (nothing to upload or download). As soon as the drive becomes active again (copy a file to it or just browse through a folder), the red message disappears automatically - no need to click on “Reauthorize…”. So, at least in my case, the drive does not really lose its authorization. The first couple of times it occurred, I also clicked the “Reauthorize…” link to get rid of the message (superfluously). During my investigations I also noticed new StableBit CloudDrive entries with increasing numbers being added to the third-party apps list in the Google Account, which might be the effect of reauthorizing an app that is already validly authorized.

Which DP version do you have installed? I had been struggling with that Windows Defender update issue (during my attempts to fix it, I even went through a Windows 10 in-place upgrade) before I fortunately found this thread, which talks about a connection to DrivePool and where Chris announced version 2.2.0.902 as a fix. I was happy that installing this version actually fixed it (meanwhile I’m using 2.2.0.904).

-

Constant "Name of drive" is not authorized with Google Drive

Viktor replied to irepressed's question in General

Could it be that this “not authorized” error message has to do with some idle timeout? I got this message for a drive that had been idle for some hours (no uploads or downloads). After I copied a couple of files to it, it became active (started to send and receive data) and the (red) error message disappeared without my having to reauthorize it.

You can access the locally stored data through the hidden "PoolPart.xxx..." folder on your local pool. If you use the content of that folder as the data source for the Arq backup instead of the pooled drive itself, you could achieve what you want.

-

Just found 951 and can confirm that the issue is fixed. Thanks to Alex for the quick fix! That spared me a rollback.

-

I recently updated CD on one of my PCs to 1.0.2.950 Beta too and a quick test reproduced that issue! The same encrypted cloud drive cannot be attached on that machine with the right key, while it’s decrypted and attached without problems on another PC with CD 1.0.2.929 Beta.

-

I think it should be possible to identify the drive that the key in the PDF belongs to by comparing the creation date written into the key PDF file with the “CreatedTimeUtc” entry in the corresponding METADATA file on the cloud storage. After converting the PDF date to UTC, these two timestamps should be very close, with just a few seconds' difference (because the key PDF is created a bit before the actual drive creation is launched).
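The comparison itself is simple once both values are available as timestamps. The following is only a sketch: I don't know how “CreatedTimeUtc” is stored in the METADATA file, so the function (a made-up name) expects both values already parsed into timezone-aware datetime objects, and the one-minute tolerance is my own assumption.

```python
from datetime import datetime, timedelta, timezone


def likely_same_drive(pdf_created, metadata_created_utc,
                      tolerance=timedelta(minutes=1)):
    """Return True if the two creation times are within the tolerance.

    Both arguments must be timezone-aware, so the PDF's local time
    can be converted to UTC before comparing.
    """
    if pdf_created.tzinfo is None or metadata_created_utc.tzinfo is None:
        raise ValueError("both timestamps must be timezone-aware")
    pdf_utc = pdf_created.astimezone(timezone.utc)
    meta_utc = metadata_created_utc.astimezone(timezone.utc)
    return abs(meta_utc - pdf_utc) <= tolerance


# Example with invented values: PDF written at 14:30:00 local time (UTC+2),
# drive created five seconds later according to the metadata.
pdf_time = datetime(2017, 5, 1, 14, 30, 0, tzinfo=timezone(timedelta(hours=2)))
meta_time = datetime(2017, 5, 1, 12, 30, 5, tzinfo=timezone.utc)
same = likely_same_drive(pdf_time, meta_time)
```

With several candidate drives, the one whose METADATA timestamp falls within the tolerance of the PDF date would be the likely match.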

-

Windows Disk Management (diskmgmt.msc) should let you assign a drive letter (or mount path) to your cloud drive.

-

Troubleshooting data submitted.
